The first six articles in this series addressed the structural foundations: why patchwork modernization fails, why ontology matters, how to modularize, when to replace, and how to execute incrementally. All of those strategies converge on a single enabling capability. APIs. Without disciplined APIs, none of the intelligence works.

APIs have mattered for a long time. Banks have been building them for mobile apps, partner integrations, and digital channels for over a decade. But the stakes have fundamentally changed. When APIs served human-facing applications, undisciplined API design was a technical inconvenience — slow response times, inconsistent data formats, occasional breakage. When APIs serve AI agents and orchestration engines that make real-time decisions affecting customers, risk exposure, and regulatory compliance, undisciplined API design is an operational risk.


The difference is not subtle. It is the difference between a system that inconveniences a developer and a system that produces wrong answers at machine speed.

How AI Agents Consume APIs

AI agents interact with APIs differently than human-driven applications. A mobile banking app calls a handful of APIs in predictable patterns — check balance, view transactions, initiate transfer. An AI orchestration engine calls dozens of APIs in dynamic patterns that change based on the decision being made. A fraud detection agent might query the customer profile API, the transaction history API, the account relationship API, and the device authentication API in rapid sequence, correlating the responses to determine whether a transaction is legitimate.

If any of those APIs returns inconsistent data formats, the correlation fails. If any of them has unpredictable latency, the decision window closes before the agent can act. If any of them changes its response schema without versioning, the agent’s logic breaks silently — it continues to operate, but its decisions are based on misinterpreted data.

This is why API discipline in the AI era is not a technology preference. It is an operational requirement.

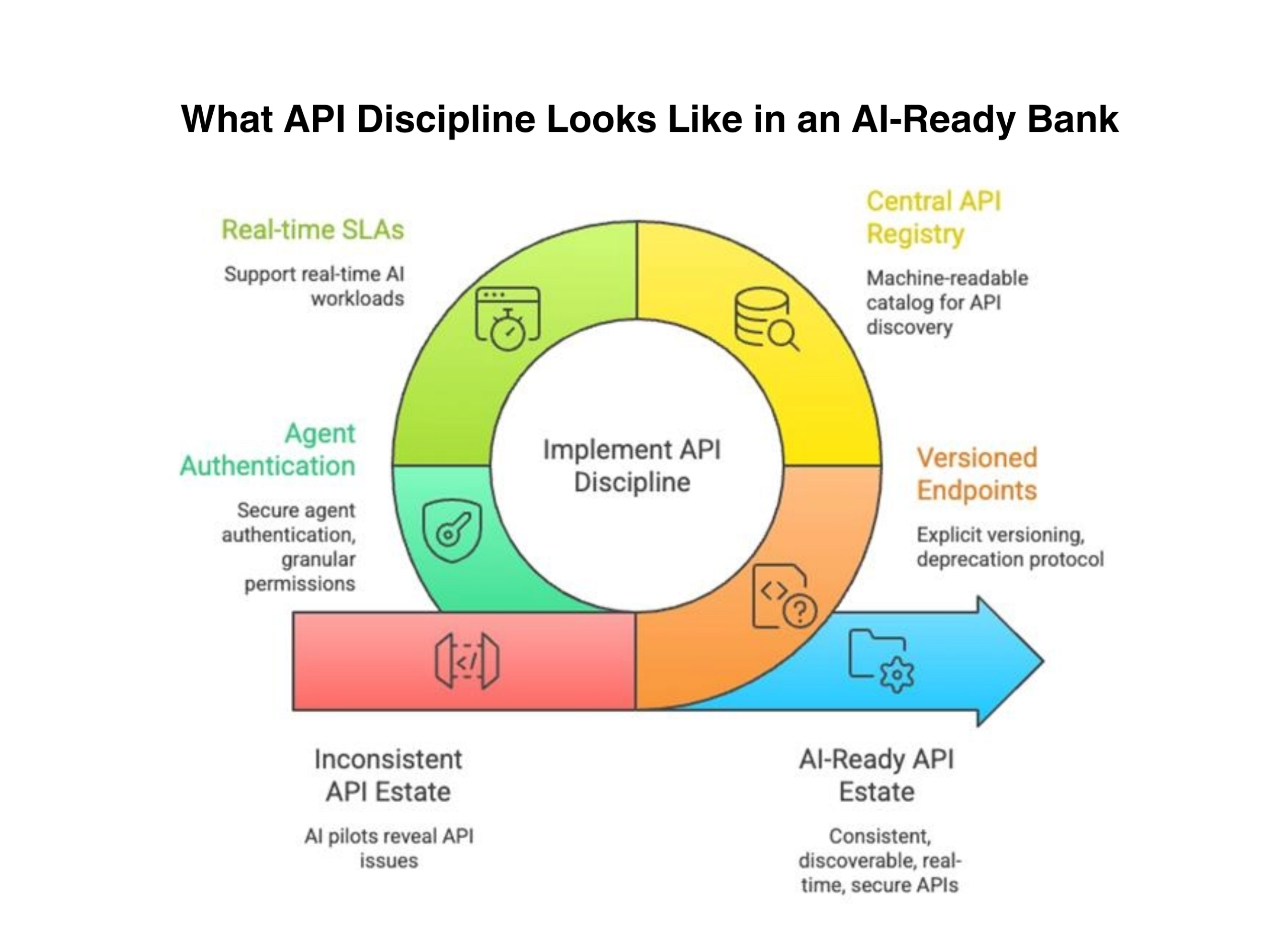

What API Discipline Looks Like in an AI-Ready Bank

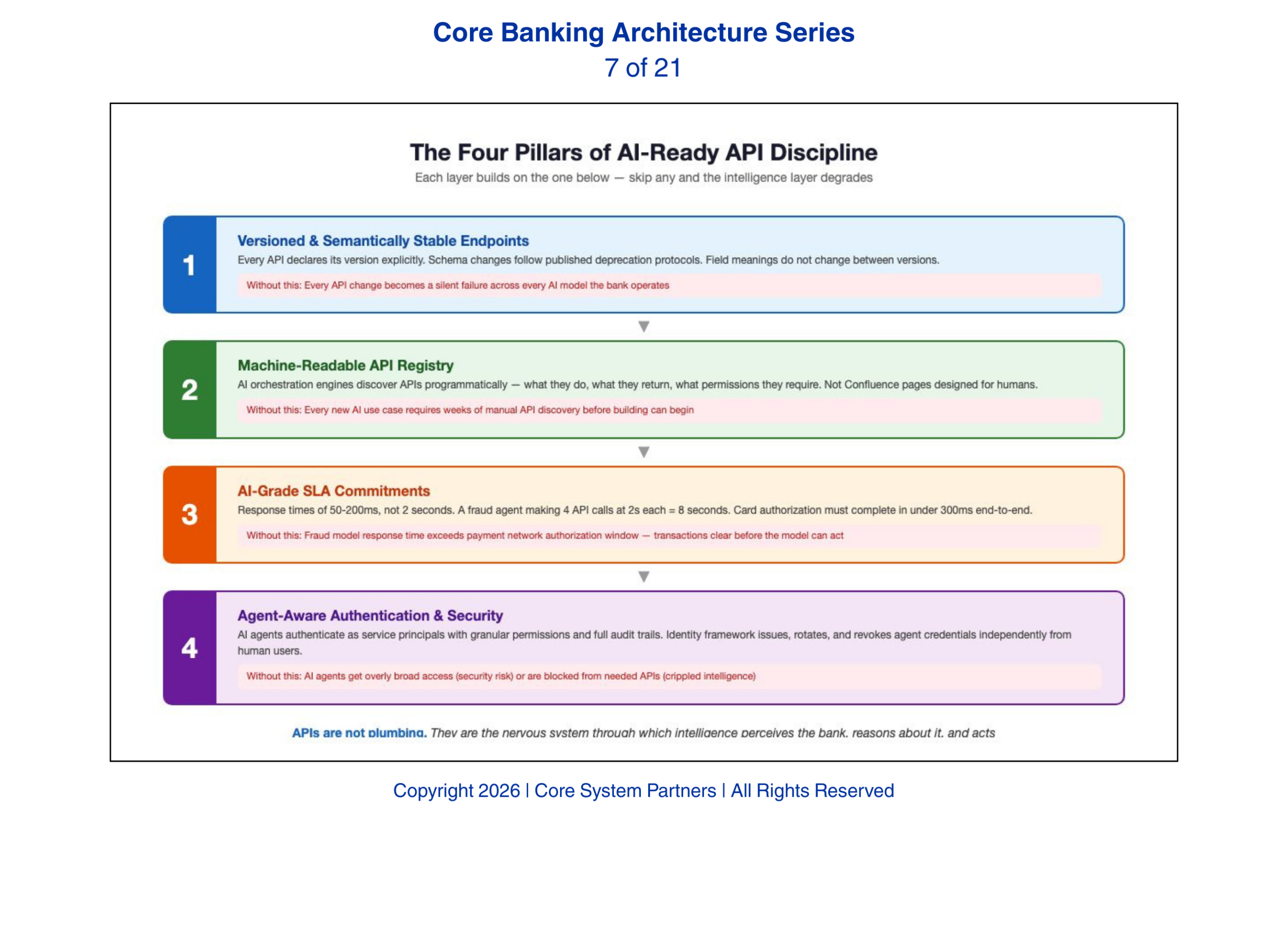

We have seen banks discover during AI pilots what their API estate actually looks like — and the discovery is rarely encouraging. Here are the four requirements, and what breaks when each one is missing.

Versioned and semantically stable endpoints are the foundation. Every API must declare its version explicitly, and changes to response schemas must follow a published deprecation protocol. AI agents are trained on specific API response structures. When those structures change without warning, the agent does not adapt — it degrades. Semantic stability means that the meaning of fields does not change between versions. An account balance field that returns available balance in version one and ledger balance in version two has broken every AI model that consumed version one. Without this discipline, every API change becomes a potential silent failure across every AI model the bank operates.
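One way an agent-side client might guard against this kind of drift is to pin the major version it was built against and reject any response that does not match the expected contract. The sketch below is illustrative only: the `api_version` field, the field names, and `SchemaDriftError` are assumptions made for this example, not part of any particular bank's API.

```python
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical: the major version this agent was built and tested against.
PINNED_MAJOR_VERSION = 1


class SchemaDriftError(Exception):
    """Raised when a response does not match the contract the agent expects."""


@dataclass(frozen=True)
class BalanceV1:
    account_id: str
    available_balance: Decimal  # the name states the meaning: available, not ledger
    currency: str


def parse_balance(payload: dict) -> BalanceV1:
    """Parse a balance response, failing loudly on any contract mismatch."""
    version = str(payload.get("api_version", ""))
    major = version.split(".")[0]
    if major != str(PINNED_MAJOR_VERSION):
        raise SchemaDriftError(
            f"expected major version {PINNED_MAJOR_VERSION}, got {version!r}"
        )
    required = ("account_id", "available_balance", "currency")
    missing = [name for name in required if name not in payload]
    if missing:
        raise SchemaDriftError(f"missing fields: {missing}")
    return BalanceV1(
        account_id=str(payload["account_id"]),
        available_balance=Decimal(str(payload["available_balance"])),
        currency=str(payload["currency"]),
    )
```

Naming the field `available_balance` rather than `balance` puts the meaning into the contract itself, so a switch to ledger balance would require a new field or a new major version instead of a silent reinterpretation.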

A central API registry that agents can discover programmatically is the second requirement. AI orchestration engines need to know what APIs exist, what they do, what data they return, and what permissions they require. In most banks today, this knowledge lives in developer documentation portals that are designed for humans. AI agents cannot browse a Confluence page. They need a machine-readable registry — an API catalog with standardized descriptions that an orchestration engine can query to determine which APIs to call for a given task. Banks without a registry find that every new AI use case requires manual API discovery — a developer spending weeks mapping available endpoints before the AI team can begin building.
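As a rough illustration of what "machine-readable" could mean here, the sketch below models a registry as structured entries that an orchestration engine can filter by the data it needs and the permissions it holds. The entry names, fields, and scope strings are all hypothetical; a production registry would more likely be a queryable service backed by standardized API descriptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApiEntry:
    name: str
    version: str
    description: str
    returns: frozenset       # names of the data fields the API supplies
    required_scope: str      # permission an agent must hold to call it


# Hypothetical catalog entries; a real registry would be a queryable service.
REGISTRY = [
    ApiEntry("customer-profile", "1.4", "Core customer attributes",
             frozenset({"customer_id", "risk_rating"}), "customers:read"),
    ApiEntry("transaction-history", "2.1", "Posted and pending transactions",
             frozenset({"transactions"}), "transactions:read"),
    ApiEntry("device-authentication", "1.0", "Device and session signals",
             frozenset({"device_id", "auth_events"}), "devices:read"),
]


def find_apis(needed_fields, granted_scopes):
    """Return entries that supply a needed field and that the agent may call."""
    wanted = frozenset(needed_fields)
    return [
        entry for entry in REGISTRY
        if entry.returns & wanted and entry.required_scope in granted_scopes
    ]
```

An agent that needs `risk_rating` and `device_id` and holds the two matching read scopes would get back the customer profile and device authentication entries, with no developer mapping endpoints by hand.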

SLA commitments that support real-time workloads are the third requirement. Most bank APIs were designed with human-acceptable response times: under two seconds is fine for a mobile app. AI agents operating in real-time decision loops need responses in the range of fifty to two hundred milliseconds. A fraud detection agent that waits two seconds for each of four sequential API calls has consumed eight seconds, an eternity in transaction processing. For context, a card authorization decision must complete in under three hundred milliseconds end-to-end. API SLAs must be defined, monitored, and enforced at the latency levels AI workloads require. Banks that do not set and measure AI-grade SLAs discover the gap when their fraud model’s response time exceeds the payment network’s authorization window.
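The eight-second figure comes from making the four calls one after another: 4 × 2 s = 8 s. If the calls are independent of each other (an assumption, since some agents need one response before making the next request), they can be issued concurrently, and the total wait is bounded by the slowest call rather than the sum. Each call still has to fit inside the decision window, which is why the per-API SLA matters. The sketch below simulates this with made-up latencies and enforces an overall budget; the API names and timings are placeholders, not measurements.

```python
import asyncio
import time

# Hypothetical per-call latencies in seconds, standing in for real network calls.
SIMULATED_LATENCY = {
    "customer_profile": 0.05,
    "transaction_history": 0.12,
    "account_relationships": 0.08,
    "device_authentication": 0.06,
}


async def call_api(name: str) -> dict:
    """Simulate one API call by sleeping for its assumed latency."""
    await asyncio.sleep(SIMULATED_LATENCY[name])
    return {"api": name, "ok": True}


async def gather_signals(names, budget_s: float) -> list:
    """Call all APIs concurrently; raise asyncio.TimeoutError if the
    whole batch takes longer than budget_s seconds."""
    return await asyncio.wait_for(
        asyncio.gather(*(call_api(name) for name in names)),
        timeout=budget_s,
    )


def timed_run(names, budget_s: float):
    """Run the batch and return (results, elapsed_seconds)."""
    start = time.perf_counter()
    results = asyncio.run(gather_signals(names, budget_s))
    return results, time.perf_counter() - start
```

With these placeholder latencies, a sequential run would take roughly the sum of the four values, while the concurrent run takes roughly as long as the slowest call. If any one API exceeds the budget, the whole batch times out, which is the behavior an enforced SLA is meant to prevent.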

Security models that can authenticate agents, not just humans, are the fourth requirement — and the most architecturally novel. Traditional API security assumes a human user authenticating through an application. AI agents authenticate as service principals with their own identity, permissions, and audit trail. This requires an identity framework that can issue, rotate, and revoke agent credentials independently from human user credentials. It requires permission models granular enough to grant an AI agent read access to customer profiles but not write access to account balances. And it requires audit logging that captures not just which API was called, but which agent called it, what decision the agent was making, and what other APIs it called in the same decision sequence. Banks that have not built agent-aware authentication into their API security model will find themselves choosing between giving AI agents overly broad access — creating a security exposure — or blocking them from the APIs they need — crippling the intelligence layer.
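A minimal sketch of the permission and audit pieces might look like the following. It assumes each agent is a principal carrying a set of scopes, and that every call is logged with a decision identifier so that all calls in one decision sequence can be tied together afterwards. The scope strings, class names, and log fields are hypothetical, and credential issuance, rotation, and revocation are out of scope for this example.

```python
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentPrincipal:
    agent_id: str
    scopes: frozenset  # e.g. {"customers:read"}; hypothetical scope names


@dataclass
class AuditLog:
    records: list = field(default_factory=list)

    def record(self, agent_id, decision_id, api, scope, allowed):
        """Append one audit record for a single authorization check."""
        self.records.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "decision_id": decision_id,  # groups calls made for one decision
            "api": api,
            "scope": scope,
            "allowed": allowed,
        })


def new_decision_id() -> str:
    """Create an identifier shared by every call in one decision sequence."""
    return str(uuid.uuid4())


def authorize(agent, api, scope, decision_id, log) -> bool:
    """Allow the call only if the agent holds the scope; log either way."""
    allowed = scope in agent.scopes
    log.record(agent.agent_id, decision_id, api, scope, allowed)
    return allowed
```

An agent granted only `customers:read` would be allowed to read a customer profile and denied a write to account balances, and both attempts would appear in the log under the same decision identifier.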

AI-ready banks rely on disciplined APIs (real-time, secure, versioned, and discoverable) to power intelligent operations.

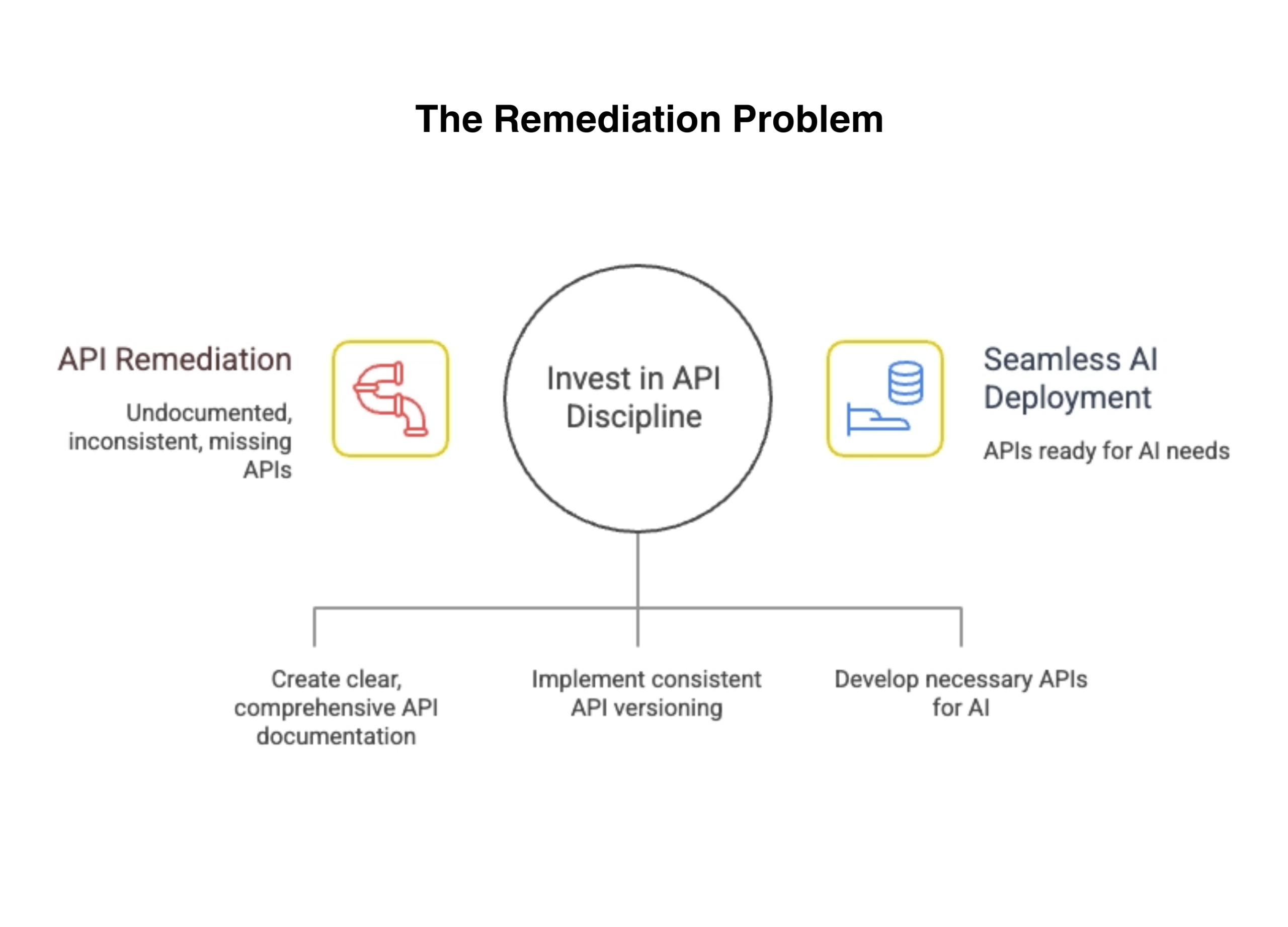

The Remediation Problem

Banks that have treated APIs as a technology layer rather than a strategic asset will find that every AI initiative begins with a remediation project. The pattern is predictable. A business unit sponsors an AI use case — next-best-action for commercial bankers, automated credit memo generation, intelligent document processing. The AI team builds the model. The model works in the lab. Then the team discovers that the APIs needed to feed the model in production are undocumented, inconsistently versioned, or simply do not exist.

The AI project becomes an API remediation project. The timeline doubles. The budget expands. The business sponsor loses confidence. The AI initiative joins the graveyard of proofs of concept that never reached production — not because the AI was wrong but because the plumbing could not support it.

Banks that invest in API discipline before launching AI initiatives avoid this trap. The APIs are ready when the AI needs them. The model moves from lab to production without a detour through infrastructure remediation. The time-to-value collapses from eighteen months to three.

Undisciplined APIs slow AI adoption; investing in API governance enables seamless, scalable AI deployment.

APIs as Strategic Infrastructure

The mental model shift is straightforward. APIs are not plumbing. They are the nervous system through which intelligence perceives the bank, reasons about it, and acts on it. Every API endpoint is a sensory input or an actuator for the AI layer. The quality of the intelligence is bounded by the quality of those endpoints.

Banks that understand this invest in APIs the way they invest in any strategic capability — with governance, standards, dedicated ownership, and continuous improvement. Banks that still treat APIs as a developer convenience will discover that their AI ambitions have a hard ceiling. And the ceiling is not the AI. It is the API layer they chose not to invest in.

What Comes Next

APIs let AI read state and trigger actions. But intelligence requires more than request-response interactions. AI systems need to observe the bank as it operates — to see events as they happen, detect patterns in sequences, and respond to state changes in real time. A fraud agent that can query an account balance through an API is useful. A fraud agent that can observe a sequence of payment events, authentication changes, and beneficiary additions as they happen — and detect the pattern before the wire clears — is transformative. The next article examines event-driven architecture and explains why events, not APIs alone, form the backbone of intelligent banking.

Where to Start

The gap between your current API estate and what AI workloads require is measurable. So is the cost of discovering that gap during your first production AI pilot rather than before it.

Core System Partners’ Transformation Readiness Scorecard includes an API maturity assessment that evaluates versioning discipline, registry completeness, SLA readiness, and agent authentication capability — giving leadership teams a clear view of their API gaps in under an hour.

If your API portfolio lacks versioning standards, a centralized registry, or consistent schema governance, the AI capabilities on your roadmap are building on a foundation that will break under production load.

If your institution is ready to move from awareness to action, visit coresystempartners.com/contact to start the conversation.

Next article in the series: Event-Driven Architecture: The Backbone of Intelligent Banking

Return to: Why AI makes modern core banking architecture non-negotiable
