The previous article established APIs as the nervous system through which AI perceives and acts on the bank. APIs handle request-response interactions — the AI asks a question and gets an answer. But intelligence requires more than questions and answers. It requires observation. AI systems need to see events as they happen, detect patterns across sequences of events, and respond to state changes without being asked. That capability is event-driven architecture, and it is the backbone of intelligent banking.

Event-driven architecture was originally sold to banks as a way to achieve real-time processing. Faster fraud detection. Faster payments. Faster servicing. Those promises were accurate but incomplete. They described the operational benefit of events without explaining the architectural significance. And what banks typically got was narrow: a real-time feed for one use case, built by one team, consumed by one system, and disconnected from everything else. Real-time processing is a feature. Event-driven architecture is an infrastructure decision that determines whether AI can reason about your bank at all.

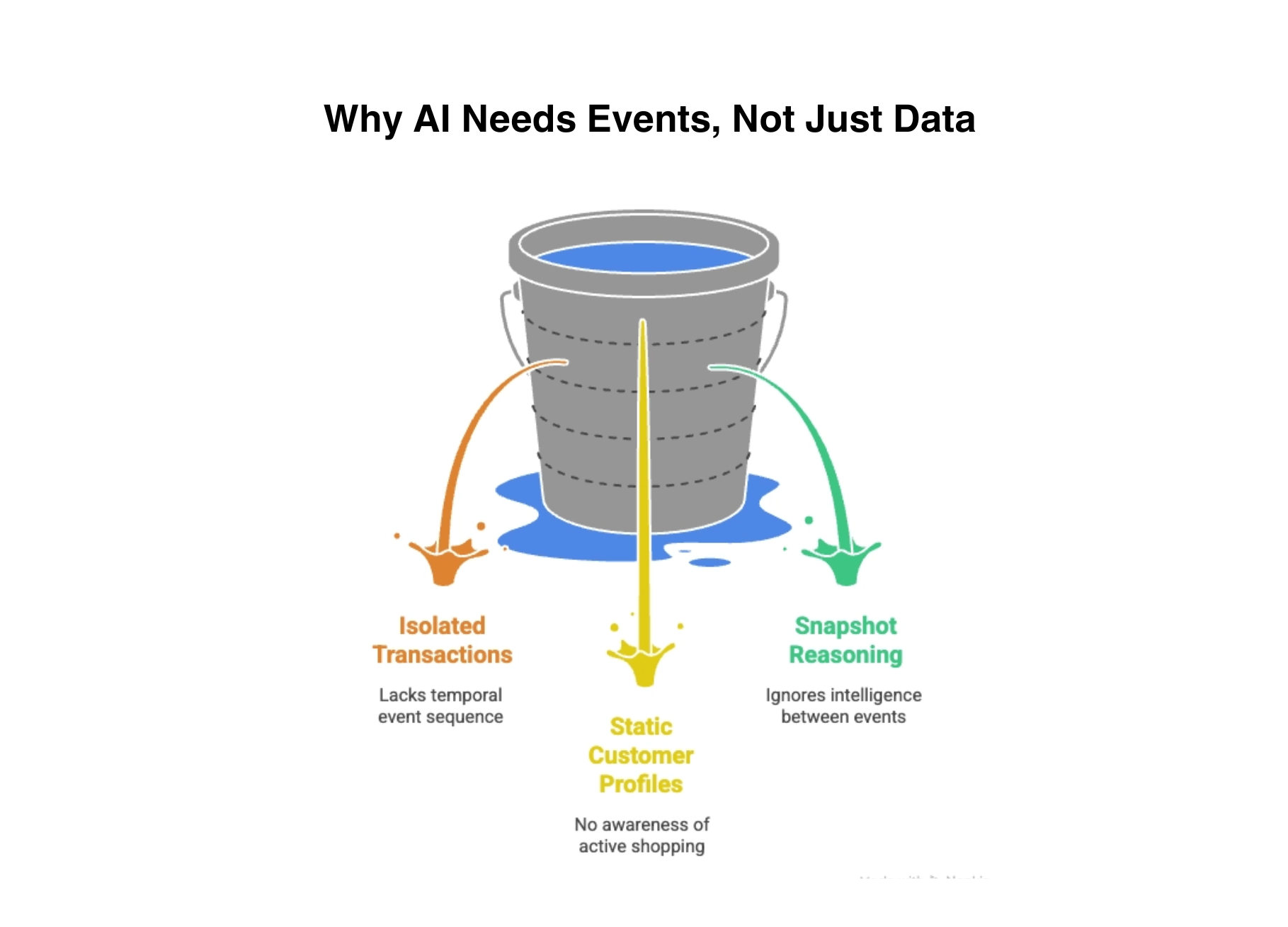

Why AI Needs Events, Not Just Data

AI systems do not simply consume data. They reason over sequences of events to understand context, detect patterns, and decide actions. This distinction is fundamental, and most banks have not internalized it.

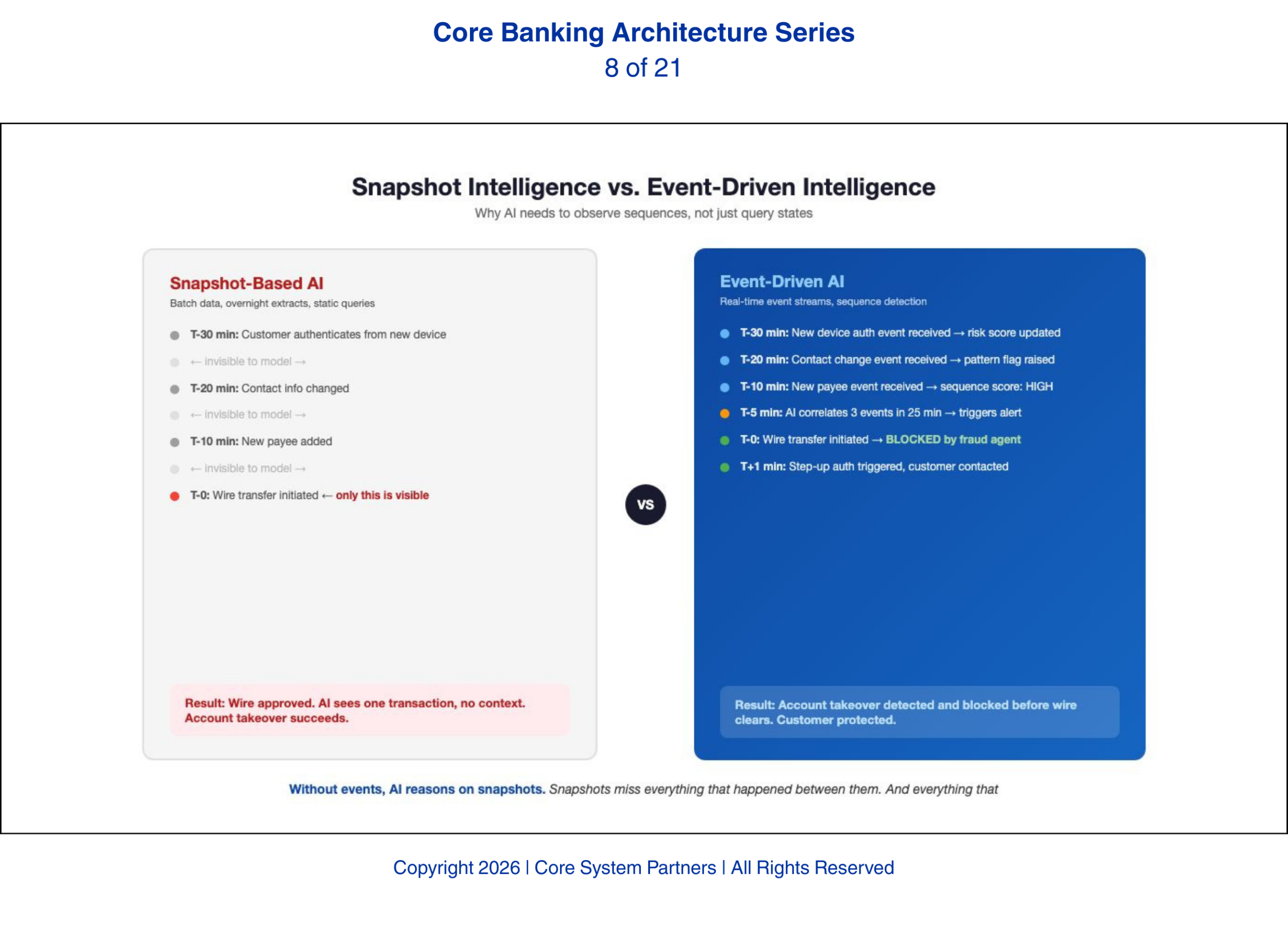

A fraud model does not look at a single transaction in isolation. It evaluates a sequence of events over time: the customer authenticated from a new device, changed their contact information, added a new payee, and initiated a wire transfer to that payee — all within thirty minutes. Each individual event is unremarkable. The sequence is a textbook fraud pattern. Without event visibility, the model sees only the wire transfer. It has no context. It cannot distinguish a legitimate payment from an account takeover because the preceding events were invisible to it.
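To make the sequence idea concrete, here is a minimal Python sketch that checks one customer's events for the takeover pattern above. The event names, the `Event` shape, and the rule-based matching are illustrative assumptions, not a production fraud model; a real model would weigh such signals rather than rely on one hard-coded rule.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

@dataclass(frozen=True)
class Event:
    event_type: str
    timestamp: datetime
    payee_id: Optional[str] = None  # set only on payee and wire events

# Hypothetical event names for the account-takeover sequence described above.
TAKEOVER_SEQUENCE = ["new_device_login", "contact_info_changed", "payee_added", "wire_initiated"]
WINDOW = timedelta(minutes=30)

def matches_takeover(events: List[Event]) -> bool:
    """True if one customer's events contain the takeover steps in order,
    all within WINDOW of the first step, with the wire going to the new payee."""
    ordered = sorted(events, key=lambda e: e.timestamp)
    for i, start in enumerate(ordered):
        if start.event_type != TAKEOVER_SEQUENCE[0]:
            continue
        step, new_payee = 1, None
        for e in ordered[i + 1:]:
            if e.timestamp - start.timestamp > WINDOW:
                break  # sequence took too long; try the next starting point
            if e.event_type != TAKEOVER_SEQUENCE[step]:
                continue  # unrelated event; keep scanning
            if e.event_type == "payee_added":
                new_payee = e.payee_id
            elif e.event_type == "wire_initiated" and e.payee_id != new_payee:
                continue  # a wire to an established payee does not complete the pattern
            step += 1
            if step == len(TAKEOVER_SEQUENCE):
                return True
    return False

t0 = datetime(2024, 1, 1, 9, 0)
session = [
    Event("new_device_login", t0),
    Event("contact_info_changed", t0 + timedelta(minutes=5)),
    Event("payee_added", t0 + timedelta(minutes=12), payee_id="payee-9"),
    Event("wire_initiated", t0 + timedelta(minutes=25), payee_id="payee-9"),
]
print(matches_takeover(session))       # True: the full sequence is visible
print(matches_takeover(session[-1:]))  # False: the wire alone looks unremarkable
```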

A next-best-action engine reasons over a different kind of sequence: the customer visited the mortgage calculator three times this week, downloaded a rate sheet, and called the branch to ask about refinancing options. An engine with event visibility sees this pattern and surfaces a refinancing offer before the customer walks into a competitor. An engine without event visibility sees a static customer profile and has no idea the customer is actively shopping.
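The same idea applies to intent. A hedged sketch, assuming hypothetical event names and thresholds (three calculator views, one rate-sheet download, and one branch call within seven days):

```python
from collections import Counter
from datetime import datetime, timedelta
from typing import Iterable, Tuple

# Hypothetical thresholds for "actively shopping for a refinance".
REQUIRED = {"mortgage_calculator_view": 3, "rate_sheet_download": 1, "branch_call_refinance": 1}

def refinance_intent(events: Iterable[Tuple[str, datetime]], now: datetime,
                     window: timedelta = timedelta(days=7)) -> bool:
    """True if one customer's recent behavioral events meet every threshold in REQUIRED."""
    # Count only events that happened within the window ending at `now`.
    recent = Counter(kind for kind, ts in events if timedelta(0) <= now - ts <= window)
    return all(recent[kind] >= n for kind, n in REQUIRED.items())

now = datetime(2024, 3, 8, 17, 0)
history = [
    ("mortgage_calculator_view", now - timedelta(days=5)),
    ("mortgage_calculator_view", now - timedelta(days=3)),
    ("mortgage_calculator_view", now - timedelta(days=1)),
    ("rate_sheet_download", now - timedelta(days=1)),
    ("branch_call_refinance", now - timedelta(hours=2)),
]
print(refinance_intent(history, now))      # True
print(refinance_intent(history[:3], now))  # False: no download or call yet
```

A static profile would carry none of these signals; only the event history reveals the pattern.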

Without events, AI reasons on snapshots. Snapshots miss everything that happened between them. And everything that happened between them is where the intelligence lives.

Without event-driven architecture, AI sees fragments, not the full story behind customer behavior and risk.

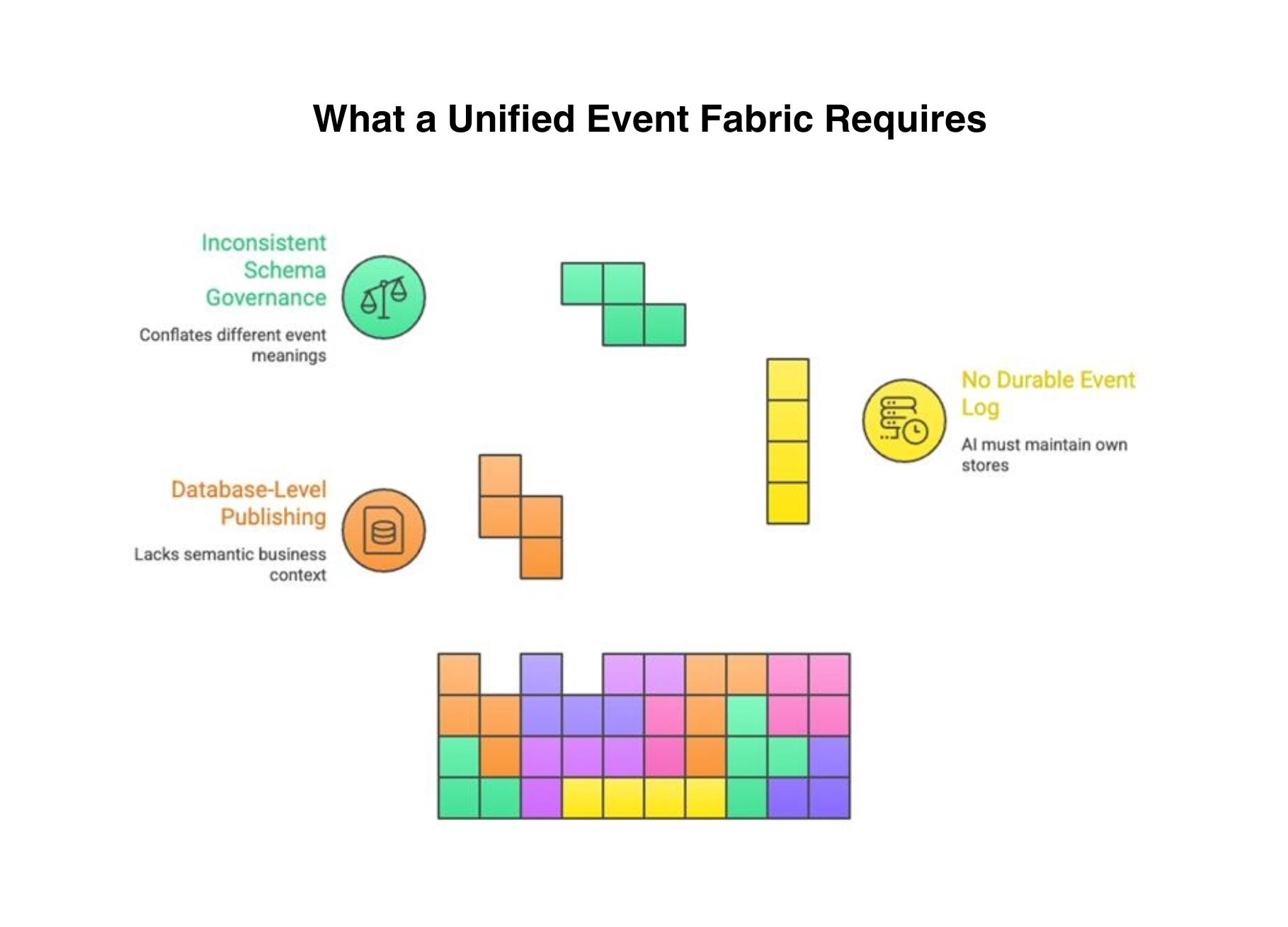

What a Unified Event Fabric Requires

Most banks that have implemented event-driven capabilities have done so in silos. The payments team publishes payment events. The fraud team consumes transaction events. The digital team publishes session events. But these event streams do not share a common fabric. They use different technologies, different schemas, different retention policies, and different access models. An AI agent that needs to correlate payment events with digital session events and customer profile changes cannot do so because the events live in three separate, incompatible streams.
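Correlation across domains becomes mechanical once streams share a common envelope. The sketch below assumes a hypothetical envelope (timestamp, customer ID, domain, event type) and per-domain streams that are each already ordered by time; Python's `heapq.merge` then interleaves them into one customer timeline. Without a shared customer identifier and time convention, even this simple merge is impossible, which is the silo problem in miniature.

```python
import heapq
from typing import Iterable, List, NamedTuple

class Envelope(NamedTuple):
    """Hypothetical shared envelope every domain stream would carry."""
    timestamp: float      # epoch seconds, same clock convention across domains
    customer_id: str      # same customer identifier across domains
    domain: str
    event_type: str

def customer_timeline(customer_id: str, *streams: Iterable[Envelope]) -> List[Envelope]:
    """Merge per-domain streams (each already ordered by time) into one timeline."""
    merged = heapq.merge(*streams, key=lambda e: e.timestamp)
    return [e for e in merged if e.customer_id == customer_id]

payments = [Envelope(100.0, "c1", "payments", "payment_initiated")]
digital = [Envelope(40.0, "c1", "digital", "session_started"),
           Envelope(90.0, "c2", "digital", "session_started")]
profile = [Envelope(60.0, "c1", "customer", "address_changed")]

for e in customer_timeline("c1", payments, digital, profile):
    print(e.timestamp, e.domain, e.event_type)
# 40.0 digital session_started
# 60.0 customer address_changed
# 100.0 payments payment_initiated
```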

A unified event fabric solves this. It is the enterprise-wide infrastructure through which all domains publish events and all consumers — including AI systems — subscribe to them. Building it requires three capabilities that most banks lack today.

First, events must be published at the domain boundary, not at the database level. A database change-data-capture stream tells you that a row was updated. A domain event tells you that a customer changed their address. The difference is meaning. AI systems need business events with semantic context, not database mutation logs. When the payments domain publishes a payment-initiated event, it includes the payment amount, the originator, the beneficiary, the channel, and the risk score — not a record of which database columns changed.
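The contrast can be sketched in Python. The CDC record and the `PaymentInitiated` fields beyond those named above (payment ID, currency, timestamp, event-type string) are hypothetical; the point is that the domain event carries business meaning a consumer can use directly, while the CDC record requires knowing what table codes mean.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from decimal import Decimal

# Hypothetical CDC output: a row mutation with no business meaning attached.
cdc_record = {
    "table": "PMT_TXN",
    "op": "UPDATE",
    "before": {"STATUS_CD": "03"},
    "after": {"STATUS_CD": "04"},
}

@dataclass(frozen=True)
class PaymentInitiated:
    """Hypothetical domain event published by the payments domain."""
    payment_id: str
    amount: Decimal
    currency: str
    originator_id: str
    beneficiary_id: str
    channel: str
    risk_score: float
    occurred_at: datetime
    event_type: str = "payment.initiated"

    def to_json(self) -> str:
        body = asdict(self)
        body["amount"] = str(self.amount)                  # keep the exact decimal value
        body["occurred_at"] = self.occurred_at.isoformat()  # ISO 8601 with offset
        return json.dumps(body, sort_keys=True)

evt = PaymentInitiated(
    payment_id="pmt-001", amount=Decimal("25000.00"), currency="USD",
    originator_id="cust-42", beneficiary_id="payee-9", channel="mobile",
    risk_score=0.87, occurred_at=datetime(2024, 1, 1, 9, 25, tzinfo=timezone.utc),
)
print(evt.to_json())
```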

Second, the event fabric must provide a durable event log that AI can query historically. AI models need to reason over hours, days, and weeks of event history to detect patterns. An event stream that only provides real-time consumption without historical replay forces AI systems to maintain their own event stores — duplicating data, introducing inconsistency, and creating governance gaps. The event fabric itself must be the authoritative source of event history, queryable by time range, entity, and event type.
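A minimal in-memory stand-in for such a log, assuming events arrive in timestamp order: time-range replay uses binary search over timestamps, and the entity and event-type filters are a simple scan. A production fabric would persist and index this rather than hold it in memory; on Kafka, the time-range part loosely corresponds to looking up offsets by timestamp (for example, the Java consumer's `offsetsForTimes`) and replaying from there. The `EventLog` class itself is hypothetical.

```python
from bisect import bisect_left
from dataclasses import dataclass
from typing import List, Optional

@dataclass(frozen=True)
class LoggedEvent:
    offset: int
    timestamp: int  # epoch milliseconds
    entity_id: str
    event_type: str

class EventLog:
    """In-memory stand-in for a durable, append-only event log."""

    def __init__(self) -> None:
        self._events: List[LoggedEvent] = []
        self._timestamps: List[int] = []

    def append(self, timestamp: int, entity_id: str, event_type: str) -> LoggedEvent:
        if self._timestamps and timestamp < self._timestamps[-1]:
            raise ValueError("events must be appended in timestamp order")
        evt = LoggedEvent(len(self._events), timestamp, entity_id, event_type)
        self._events.append(evt)
        self._timestamps.append(timestamp)
        return evt

    def query(self, start: int, end: int, entity_id: Optional[str] = None,
              event_type: Optional[str] = None) -> List[LoggedEvent]:
        """Replay events with start <= timestamp < end, optionally filtered."""
        lo = bisect_left(self._timestamps, start)
        hi = bisect_left(self._timestamps, end)
        return [
            e for e in self._events[lo:hi]
            if (entity_id is None or e.entity_id == entity_id)
            and (event_type is None or e.event_type == event_type)
        ]

log = EventLog()
log.append(1_000, "c1", "login")
log.append(2_000, "c2", "login")
log.append(3_000, "c1", "payee_added")
print([e.event_type for e in log.query(0, 5_000, entity_id="c1")])  # ['login', 'payee_added']
print([e.offset for e in log.query(1_500, 3_001)])                 # [1, 2]
```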

Third, event schema governance must ensure that what an event means is consistent across systems. This connects directly to the ontology discussion from earlier in this series — and here is where it becomes concrete. Consider what happens when the deposit system publishes an account-opened event and the lending system publishes an account-opened event, but they define “account” differently. The deposit system means a demand deposit account. The lending system means a credit facility. An AI model consuming both events to build a customer relationship profile will conflate deposits and loans — producing correlations that are technically precise and semantically wrong. We have seen this exact failure: an institution deployed a relationship-value AI model that overstated customer profitability by thirty percent because it was double-counting balances across event streams that used the same field name with different meanings. Event schema governance is not a nice-to-have. It is the difference between AI that reasons correctly and AI that reasons confidently on corrupted inputs.
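One way to picture semantic governance is a registry that pins each event name and field to a single business definition and rejects a second domain that reuses the name with a different meaning. The `SemanticRegistry` class and the definitions below are hypothetical, a sketch of the rule rather than any particular product:

```python
from typing import Dict, Tuple

class SemanticRegistry:
    """Hypothetical registry that pins each (event name, field) to one business definition."""

    def __init__(self) -> None:
        self._definitions: Dict[Tuple[str, str], str] = {}

    def register(self, domain: str, event_name: str, fields: Dict[str, str]) -> None:
        """Reject a schema whose field meanings conflict with what is already registered."""
        conflicts = [
            f"{event_name}.{field}: '{self._definitions[(event_name, field)]}' vs '{meaning}' ({domain})"
            for field, meaning in fields.items()
            if self._definitions.get((event_name, field), meaning) != meaning
        ]
        if conflicts:
            raise ValueError("; ".join(conflicts))
        for field, meaning in fields.items():
            self._definitions[(event_name, field)] = meaning

registry = SemanticRegistry()
registry.register("deposits", "account-opened",
                  {"account": "demand deposit account", "balance": "funds held for the customer"})
try:
    # Same event name and field names, different meaning: the double-counting trap.
    registry.register("lending", "account-opened",
                      {"account": "credit facility", "balance": "principal owed by the customer"})
except ValueError as err:
    print("rejected:", err)

# A governed alternative: lending publishes its own, distinctly named event.
registry.register("lending", "credit-facility-opened",
                  {"facility": "credit facility", "outstanding_principal": "principal owed by the customer"})
```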

AI needs more than real-time data; it needs structured, governed, and durable events to operate effectively.

The Architectural Investment

Building a unified event fabric is not a small project, and banks that underestimate it pay the price in half-built implementations that serve one use case and cannot scale to the next.

The technology investment centers on an enterprise event streaming platform — Apache Kafka, Confluent, or equivalent — with the operational maturity to run it at banking-grade reliability. For a community bank, the platform investment is manageable: a managed Kafka service, a small operations team, and a phased rollout starting with the highest-value event domains. For a mid-tier regional institution, the investment is larger but the pattern is the same: start with payments and fraud events, prove the architecture, then extend domain by domain.

The organizational investment is harder. It requires defining which domains publish which events, in what schema, with what governance. This means assigning event ownership to domain teams, establishing a schema registry that enforces compatibility, and building review processes for new event types. Banks that skip this step end up with an event platform that carries data but not meaning — fast plumbing with no semantic governance.
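Schema registries commonly enforce compatibility rules as schemas evolve; Confluent Schema Registry, for instance, offers modes such as BACKWARD, FORWARD, and FULL. The function below is a deliberately simplified, hypothetical illustration of a backward-compatibility check (can consumers on the new schema still read events written with the old one?), not that registry's actual algorithm:

```python
from typing import Any, Dict, List

# Field name -> {"type": ..., optional "default": ...}; a toy stand-in for an Avro-style record schema.
Schema = Dict[str, Dict[str, Any]]

def backward_compatibility_problems(old: Schema, new: Schema) -> List[str]:
    """Problems that would stop consumers using `new` from reading events written with `old`.
    Simplified: ignores type promotion, unions, aliases, and nested records."""
    problems = []
    for name, spec in new.items():
        if name not in old:
            # Old events lack this field, so the reader needs a default to fill it.
            if "default" not in spec:
                problems.append(f"added field '{name}' has no default")
        elif old[name]["type"] != spec["type"]:
            problems.append(f"field '{name}' changed type {old[name]['type']} -> {spec['type']}")
    return problems

v1 = {"payment_id": {"type": "string"}, "amount": {"type": "string"}}
v2_ok = {**v1, "channel": {"type": "string", "default": "unknown"}}
v2_bad = {**v1, "amount": {"type": "double"}, "risk_score": {"type": "double"}}

print(backward_compatibility_problems(v1, v2_ok))   # []
print(backward_compatibility_problems(v1, v2_bad))
# ["field 'amount' changed type string -> double", "added field 'risk_score' has no default"]
```

A review process would run a check like this before a new event version is accepted, so incompatible changes are caught at registration rather than in a consumer's failed deserialization.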

The cultural investment is the hardest. Teams accustomed to request-response patterns must learn to think in events: systems announce what happened rather than waiting to be asked. This is a mental model shift that takes time and leadership commitment. But it is the shift that unlocks every real-time AI capability the bank will ever deploy.
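The shift can be shown in a few lines. In this in-process toy (the `EventBus` class, the event name, and the 0.8 threshold are hypothetical), the payments code publishes a fact once, and fraud screening and customer notification react independently. A real event fabric is durable and asynchronous; this only illustrates the inversion of who initiates the interaction.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List

Handler = Callable[[Dict], None]

class EventBus:
    """In-process toy: producers announce facts; subscribers decide how to react."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, payload: Dict) -> int:
        """Deliver synchronously to every subscriber; return how many received it."""
        handlers = self._subscribers.get(event_type, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)

alerts: List[str] = []
notices: List[str] = []

def fraud_screen(event: Dict) -> None:
    if event["risk_score"] > 0.8:  # hypothetical threshold
        alerts.append(event["payment_id"])

def customer_notice(event: Dict) -> None:
    notices.append(f"Payment {event['payment_id']} received")

bus = EventBus()
bus.subscribe("payment.initiated", fraud_screen)
bus.subscribe("payment.initiated", customer_notice)

# The payments domain announces what happened; it does not know who is listening.
delivered = bus.publish("payment.initiated", {"payment_id": "pmt-001", "risk_score": 0.87})
print(delivered, alerts, notices)  # 2 ['pmt-001'] ['Payment pmt-001 received']
```

Adding a new consumer, such as an AI agent, means one more `subscribe` call; the publisher does not change.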

Banks that make this investment create the foundation for every AI capability that depends on real-time awareness: fraud detection, behavioral analytics, next-best-action, operational monitoring, compliance surveillance, and agent orchestration. Banks that do not make this investment will find that every AI use case requires its own custom data pipeline — expensive, ungoverned, and impossible to scale.

What Comes Next

APIs and events provide the infrastructure for AI. But infrastructure alone does not guarantee success. The integration layer — the way APIs, events, and systems connect — is where most AI initiatives actually fail. Not because the model is wrong, but because the model cannot reach the data it needs, cannot trust the data it receives, or cannot act on its decisions without breaking something downstream. The next article examines the six specific integration mistakes that kill AI initiatives and explains what disciplined integration looks like when the stakes are real-time intelligence.

Where to Start

Most banks have some event capability — a real-time feed here, a CDC stream there. The question is whether those capabilities constitute a unified event fabric or a collection of disconnected streams that AI cannot reason across.

Core System Partners’ Transformation Readiness Scorecard evaluates event maturity across three dimensions: domain event coverage, schema governance, and historical queryability — giving leadership teams a clear view of whether their event infrastructure is AI-ready or merely real-time-capable.

If your core cannot publish a real-time event today, every AI use case on your roadmap is waiting for infrastructure that does not yet exist.

If your institution is ready to move from awareness to action, visit coresystempartners.com/contact to start the conversation.

Next article in the series: Integration Mistakes That Kill AI Initiatives

Return to: Why AI makes modern core banking architecture non-negotiable

#CoreBankingTransformation #CoreBankingArchitecture