The first nine articles in this series addressed infrastructure: architecture, data models, ontology, modularization, APIs, events, and integration discipline. Those foundations exist to enable AI. This article addresses what happens when AI is enabled but ungoverned — and why governance, done properly, is an architectural capability, not a paperwork exercise.

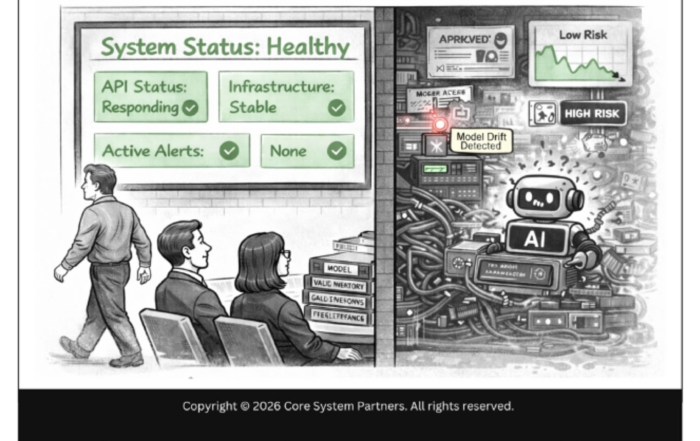

Banks are treating AI governance as a documentation problem. They are writing policies and filling out model inventory spreadsheets, typically Excel files with columns for model name, owner, validation date, and a RAG (red/amber/green) status that is green for everything because nobody has defined what red actually means. They are creating governance committees that meet quarterly and review slide decks. They are producing framework documents that describe what governance should look like without building the infrastructure to deliver it.

These are necessary activities. They are not sufficient. None of them matters if the architecture cannot actually enforce what the documents describe.

You cannot document your way to explainability if the infrastructure does not record decisions. You cannot achieve reproducibility if the environment a model ran in cannot be reconstructed. You cannot guarantee auditability if the audit trail is maintained at the application layer where it can be modified. Governance without architecture is theater. It satisfies the appearance of control without delivering actual control.

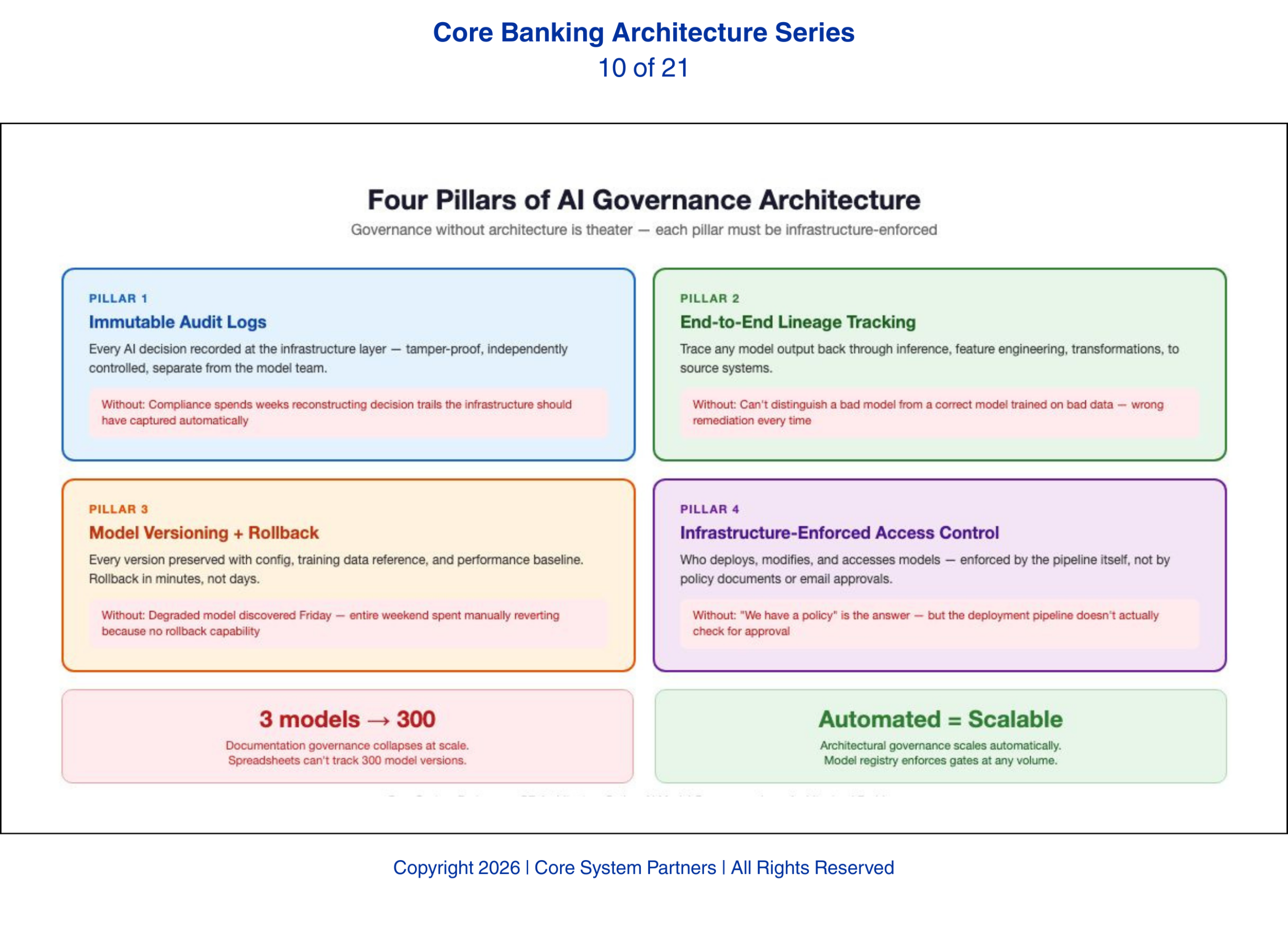

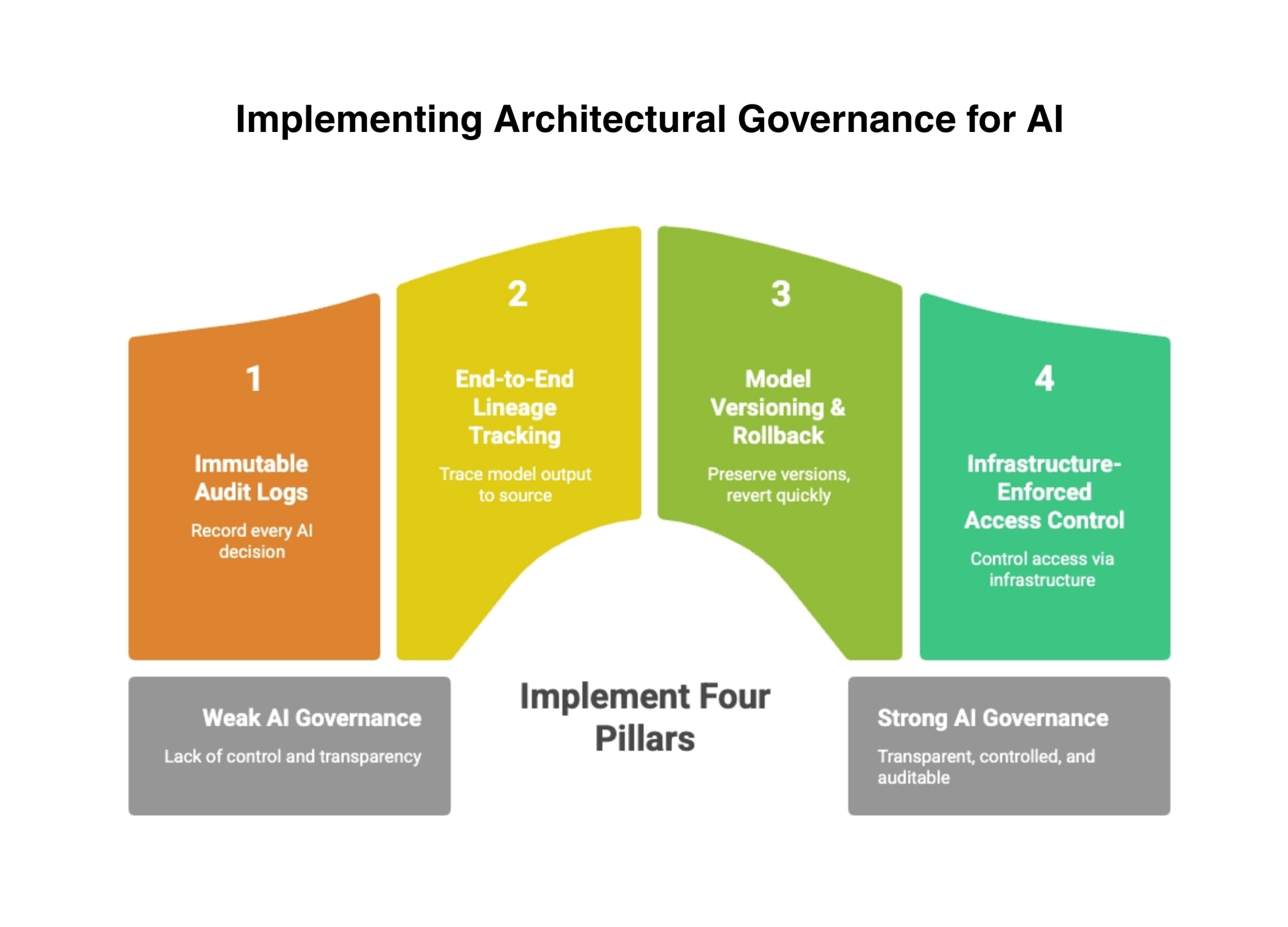

The Four Pillars of Architectural Governance

Immutable audit logs at the infrastructure layer form the first pillar. Every AI decision — every model invocation, every input consumed, every output produced, every action triggered — must be recorded in a log that cannot be modified after the fact. This log must live at the infrastructure layer, not the application layer. Application-layer logs can be altered by the same team that built the model. Infrastructure-layer logs are controlled by a separate function with independent access controls. When an examiner asks to see the decision trail for a specific customer outcome, the bank must be able to produce it from a tamper-proof source. Banks without immutable logging discover this gap during examinations, when the compliance team spends weeks manually reconstructing a decision trail that the infrastructure should have captured automatically.
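
One way to picture the first pillar is a hash-chained, append-only log, where each entry commits to the one before it so that any later edit is detectable. The sketch below is illustrative only: `AuditLog`, `AuditEntry`, and `verify` are hypothetical names, the log lives in memory, and the hash chain makes tampering evident rather than impossible. In a real deployment the immutability would come from write-once storage and independent access controls, which this example does not attempt to model.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditEntry:
    sequence: int
    payload: dict
    prev_hash: str
    entry_hash: str


def _digest(sequence, payload, prev_hash):
    # Canonical JSON so identical content always produces the same hash.
    body = json.dumps(
        {"seq": sequence, "payload": payload, "prev": prev_hash}, sort_keys=True
    )
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log; the only write operation is append()."""

    GENESIS = "0" * 64  # placeholder hash for the first entry (assumption)

    def __init__(self):
        self._entries = []

    def append(self, payload):
        prev_hash = self._entries[-1].entry_hash if self._entries else self.GENESIS
        sequence = len(self._entries)
        entry = AuditEntry(
            sequence, dict(payload), prev_hash, _digest(sequence, payload, prev_hash)
        )
        self._entries.append(entry)
        return entry

    def entries(self):
        return tuple(self._entries)


def verify(entries):
    """Recompute the chain; return False if any entry was altered or reordered."""
    prev = AuditLog.GENESIS
    for index, entry in enumerate(entries):
        if entry.sequence != index or entry.prev_hash != prev:
            return False
        if _digest(entry.sequence, entry.payload, entry.prev_hash) != entry.entry_hash:
            return False
        prev = entry.entry_hash
    return True
```

An examiner-facing check would run `verify` over the stored entries before the decision trail is handed over. The verification should be performed by a function that is separate from the team that wrote the entries.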

End-to-end lineage tracking from training data to model output forms the second pillar. A model’s decisions are only as good as the data it was trained on and the data it consumes in production. Lineage tracking means the bank can trace any model output back through the inference pipeline, through the feature engineering layer, through the data transformations, back to the source systems that provided the raw data. When a model produces a questionable decision, lineage tracking answers the question: what data informed this decision, and where did that data come from? Without lineage, the bank cannot distinguish between a model that made a bad decision and a model that made a correct decision on bad data — and the remediation for each is entirely different.
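
The tracing question can be sketched as a graph walk. The example below assumes lineage is recorded as a simple mapping from each artifact to the artifacts it was derived from. Every identifier in `LINEAGE` is invented for illustration, and a production lineage store would carry far more metadata (timestamps, schema versions, job identifiers).

```python
from collections import deque

# Hypothetical lineage edges: artifact -> the artifacts it was derived from.
LINEAGE = {
    "decision:loan-123": ["model:credit-v7", "features:applicant-123"],
    "features:applicant-123": ["transform:income-normalized", "transform:bureau-joined"],
    "transform:income-normalized": ["source:core-ledger"],
    "transform:bureau-joined": ["source:core-ledger", "source:credit-bureau-feed"],
    "model:credit-v7": ["dataset:training-2024Q4"],
    "dataset:training-2024Q4": ["source:core-ledger"],
}


def trace_upstream(artifact, lineage):
    """Return every upstream artifact reachable from `artifact`, breadth-first."""
    seen = []
    queue = deque(lineage.get(artifact, []))
    while queue:
        node = queue.popleft()
        if node in seen:
            continue
        seen.append(node)
        queue.extend(lineage.get(node, []))
    return seen


def source_systems(artifact, lineage):
    """Upstream artifacts with no recorded parents, treated here as raw sources."""
    return sorted(n for n in trace_upstream(artifact, lineage) if not lineage.get(n))
```

Calling `source_systems("decision:loan-123", LINEAGE)` lists the source systems behind a single decision, which is the starting point for deciding whether the fault lies in the model or in the data it was given.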

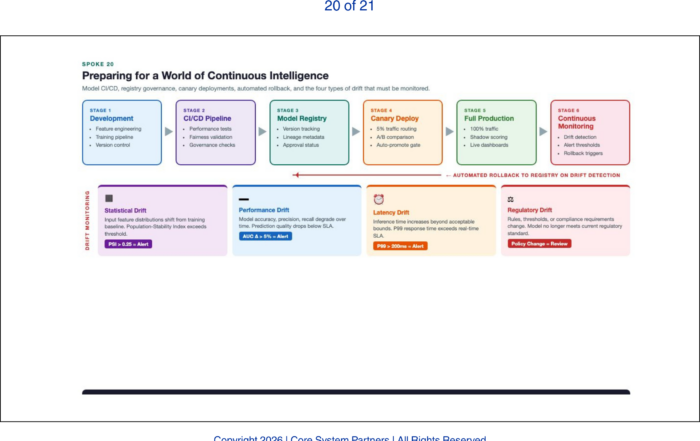

Model versioning with the ability to roll back forms the third pillar. AI models are not static. They are retrained, fine-tuned, and updated as data changes and business requirements evolve. Every version of every model must be preserved with its associated configuration, training data reference, and performance baseline. When a new model version underperforms or produces biased outcomes, the bank must be able to roll back to the previous version within minutes, not days. This requires infrastructure — a model registry, a deployment pipeline with rollback capability, and automated performance comparison between versions. We have seen banks discover a degraded model on a Friday afternoon and spend the entire weekend manually reverting because the deployment pipeline had no rollback capability. That is not a governance posture. That is a fire drill.
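
A minimal sketch of what a registry with rollback might look like follows. `ModelRegistry` and its methods are hypothetical and held in memory, and the `baseline_auc` field stands in for whatever performance baseline a bank actually records. The point is only that rollback becomes a single call when every version and every promotion is preserved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelVersion:
    version: int
    artifact_uri: str
    training_data_ref: str
    baseline_auc: float


class ModelRegistry:
    def __init__(self):
        self._versions = {}  # model name -> list of ModelVersion, never deleted
        self._history = {}   # model name -> version numbers promoted, in order

    def register(self, name, artifact_uri, training_data_ref, baseline_auc):
        versions = self._versions.setdefault(name, [])
        model_version = ModelVersion(
            len(versions) + 1, artifact_uri, training_data_ref, baseline_auc
        )
        versions.append(model_version)
        return model_version

    def versions(self, name):
        return tuple(self._versions.get(name, []))

    def promote(self, name, version):
        if not any(v.version == version for v in self._versions.get(name, [])):
            raise KeyError(f"{name} v{version} is not registered")
        self._history.setdefault(name, []).append(version)

    def live(self, name):
        history = self._history.get(name, [])
        if not history:
            raise LookupError(f"{name} has no promoted version")
        return self._versions[name][history[-1] - 1]

    def rollback(self, name):
        history = self._history.get(name, [])
        if len(history) < 2:
            raise RuntimeError("no earlier promoted version to roll back to")
        # Record the rollback as a new promotion event instead of erasing history.
        history.append(history[-2])
        return self.live(name)
```

Because the rollback is appended to the promotion history rather than rewriting it, the record of which version was live at any moment survives the rollback itself.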

Access control that is infrastructure-enforced, not process-dependent, forms the fourth pillar. Who can deploy a model to production? Who can modify a model’s configuration? Who can access the training data? If your bank cannot answer these questions by pointing to infrastructure controls — if the answer is “we have a policy” or “the team knows the process” — your governance is process-dependent, not infrastructure-enforced. A governance framework that says only approved models can be deployed to production is meaningless if the deployment pipeline does not actually check for approval. These controls must be enforced by the infrastructure itself — through role-based access in the deployment pipeline, through authentication in the model registry, through encryption and access logging in the data platform.
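
The difference between a policy and an enforced control can be shown in a few lines. In the sketch below, the hypothetical `DeploymentPipeline` refuses any request that lacks a deployer role or a recorded approval, and it logs every attempt either way. The role names and the `approve` method are assumptions for illustration. In practice, recording an approval would itself be restricted to a separate function.

```python
from dataclasses import dataclass, field


class DeploymentDenied(Exception):
    """Raised when the pipeline refuses a deployment request."""


@dataclass
class DeploymentPipeline:
    deployer_roles: frozenset
    approved: set = field(default_factory=set)     # (model, version) pairs
    access_log: list = field(default_factory=list)

    def approve(self, model, version):
        # Simplification: a real system would restrict who may call this.
        self.approved.add((model, version))

    def deploy(self, user, roles, model, version):
        has_role = bool(self.deployer_roles & set(roles))
        is_approved = (model, version) in self.approved
        outcome = "deployed" if has_role and is_approved else "denied"
        # Every attempt is logged, including the ones that are refused.
        self.access_log.append(
            {"user": user, "model": model, "version": version, "outcome": outcome}
        )
        if not has_role:
            raise DeploymentDenied(f"{user} holds no deployer role")
        if not is_approved:
            raise DeploymentDenied(f"{model} v{version} has no recorded approval")
        return f"{model} v{version} deployed by {user}"
```

With a gate like this, "only approved models can be deployed" stops being a statement in a framework document and becomes a property of the pipeline that an examiner can test.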

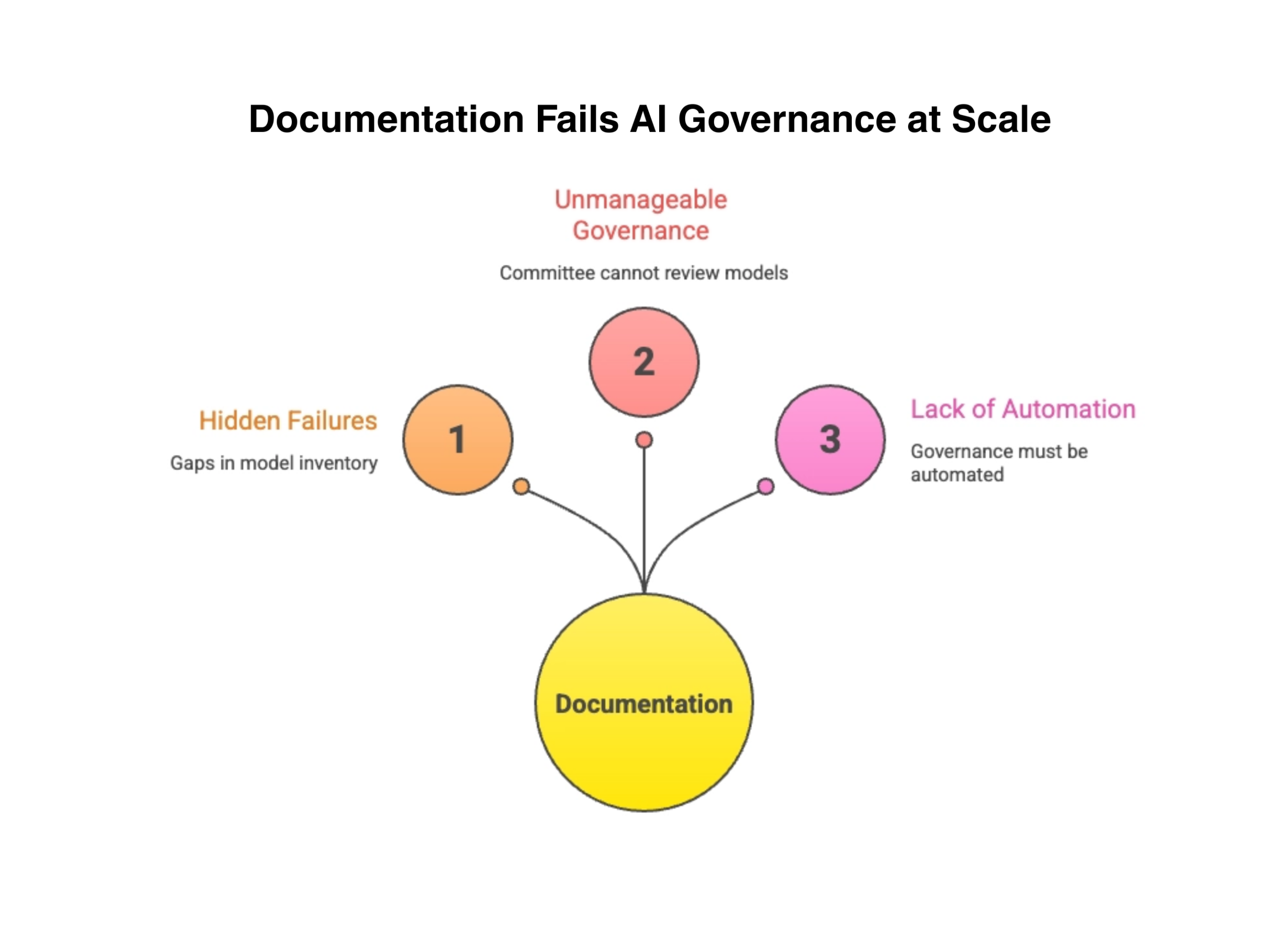

Why Documentation Alone Fails

The documentation approach to AI governance does not truly work even at small scale; small scale merely hides its failures. When a bank has three models in production, a governance committee can review each one manually. Model inventory spreadsheets stay current. Validation reports are produced on schedule. The process feels manageable. But even at three models, the documentation approach has a fundamental weakness: it depends on people remembering to update the documents, and people forget. Consider the model that was retrained last month without an inventory update, the validation that was deferred because the deadline slipped, or the configuration change that was made directly in production because it was urgent. Small scale hides these gaps. It does not eliminate them.

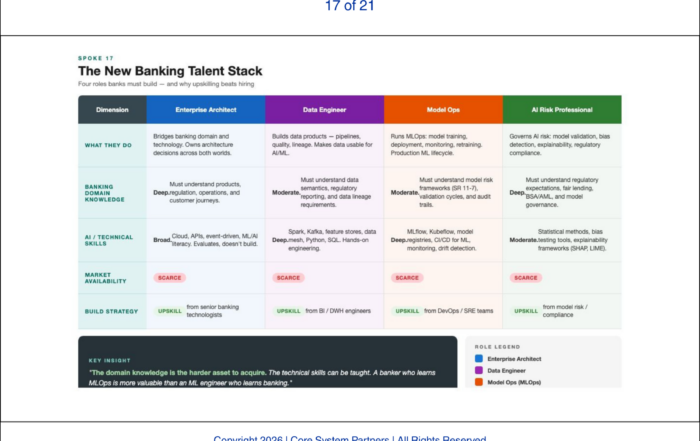

AI does not stay at small scale. Banks that are serious about AI will have dozens of models in production within two years and potentially hundreds within five. Models will be updated weekly. AI agents will chain multiple models in a single workflow. The governance committee that could review three models per quarter cannot review thirty. The spreadsheet that tracked three model versions cannot track three hundred.

At scale, governance must be automated. Automated governance requires architecture. The model registry must enforce validation gates. The deployment pipeline must verify approval status. The monitoring infrastructure must detect drift and trigger alerts. The audit system must capture decisions without human intervention. None of this is possible without deliberate architectural investment.
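
As one example of what automated monitoring can mean, the sketch below computes the Population Stability Index (PSI), a widely used drift measure, over pre-binned counts of a model input or score. The article does not prescribe a drift metric, so PSI is a choice made here for illustration. The function names are hypothetical, and the 0.2 alert threshold is a common rule of thumb rather than a regulatory standard.

```python
import math


def psi(expected_counts, actual_counts, eps=1e-6):
    """Population Stability Index between a baseline and a current distribution.

    Both arguments are counts per bin, using the same bin edges.
    """
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both distributions must use the same bins")
    expected_total = sum(expected_counts)
    actual_total = sum(actual_counts)
    total = 0.0
    for expected, actual in zip(expected_counts, actual_counts):
        # Floor each proportion at eps so empty bins do not cause log(0).
        expected_pct = max(expected / expected_total, eps)
        actual_pct = max(actual / actual_total, eps)
        total += (actual_pct - expected_pct) * math.log(actual_pct / expected_pct)
    return total


def drift_alert(expected_counts, actual_counts, threshold=0.2):
    """True when PSI exceeds the threshold (0.2 is an assumed rule of thumb)."""
    return psi(expected_counts, actual_counts) > threshold
```

A scheduled job could compare last quarter's score distribution with this week's and raise an alert when `drift_alert` returns true, with no committee meeting required to notice the change.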

AI governance doesn’t fail from lack of documentation; it fails from lack of architecture.

Who Bears the Regulatory Risk

The banks that will face the most regulatory pressure are not the banks with the most sophisticated AI. They are the banks with sophisticated AI and no architectural governance foundation. Regulators understand that AI creates new risk. They will look first at the banks that are deploying AI aggressively and ask whether the governance infrastructure matches the deployment ambition.

A bank that deploys a single AI model with full architectural governance (immutable audit logs, lineage tracking, model versioning, and infrastructure-enforced access control) is in a stronger regulatory position than a bank that deploys twenty models with documentation-only governance. The examiner’s question is not “How many models do you have?” It is “Can you prove that those models are governed?” Architecture provides the proof. Documentation provides the narrative.

What Comes Next

Architectural governance provides the internal capability. But banks do not operate in a vacuum. Regulators are actively extending model risk management frameworks to cover agentic AI, real-time decisioning, and AI-orchestrated workflows. The next article examines the specific regulatory expectations that are emerging and explains how architecture — not compliance documentation — determines whether a bank can meet them.

Where to Start

The gap between documentation-based governance and architectural governance is the gap examiners will probe first. If your bank cannot produce an immutable audit trail for any AI decision on demand, the gap is already a risk.

Core System Partners’ Transformation Readiness Scorecard includes a governance architecture assessment that evaluates audit logging maturity, lineage tracking capability, model versioning infrastructure, and access control enforcement — giving leadership teams a clear view of whether their governance is built to scale.

If your model governance today depends on spreadsheets, email approvals, or quarterly reviews, you have documentation of compliance — not the infrastructure to sustain it at AI scale.

If your institution is ready to move from awareness to action, visit coresystempartners.com/contact to start the conversation.

Next article in the series: Regulatory Expectations for AI-Enabled Core Platforms

Return to: Why AI makes modern core banking architecture non-negotiable
