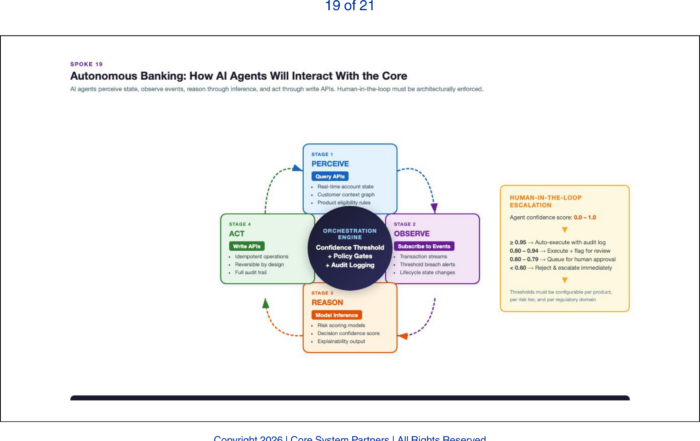

AI agents interact with core banking through APIs and events, guided by orchestration, governance and human-in-the-loop controls.

AI agents are already operating inside banks. They are handling customer onboarding workflows, flagging compliance exceptions, routing servicing requests, generating credit memos, and reconciling transactions. The agents are imperfect. They require supervision. They are deployed in constrained domains with human oversight. But they are operating on production systems, making decisions that affect real customers, in real time.

The question is no longer whether AI agents will operate inside banks. The question is whether your architecture can support them.

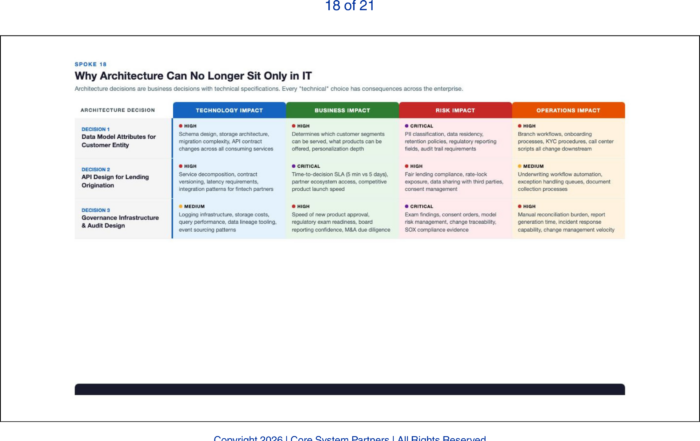

Every architectural capability discussed in this series — modular domains, governed APIs, unified event fabrics, embedded governance, resilient operations, disciplined vendor ecosystems, cross-functional ownership — exists to enable what this article describes: autonomous AI agents that interact directly with the core banking system, making decisions, executing workflows, and managing exceptions without waiting for a human to press a button.

What Agents Need from the Architecture

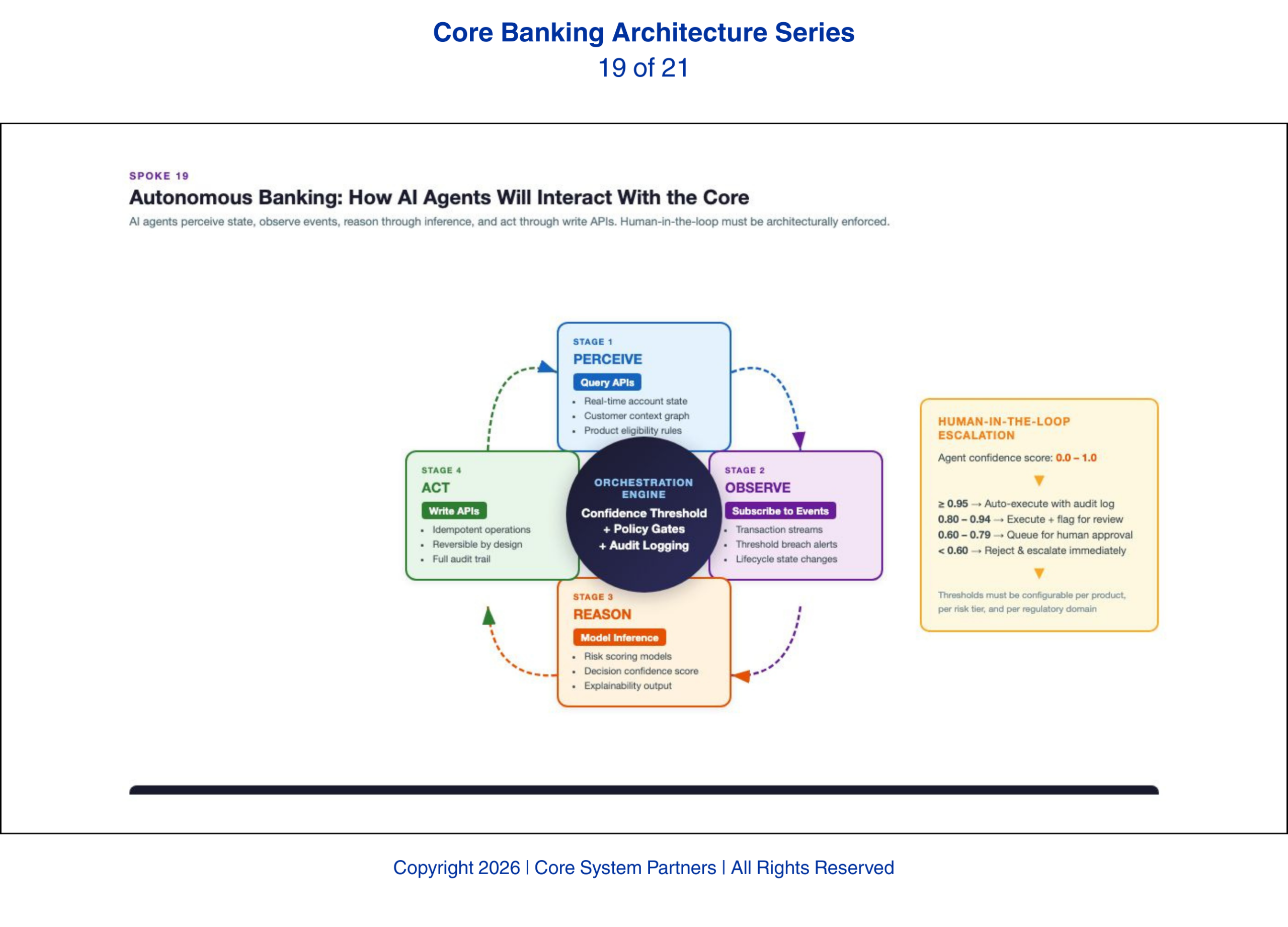

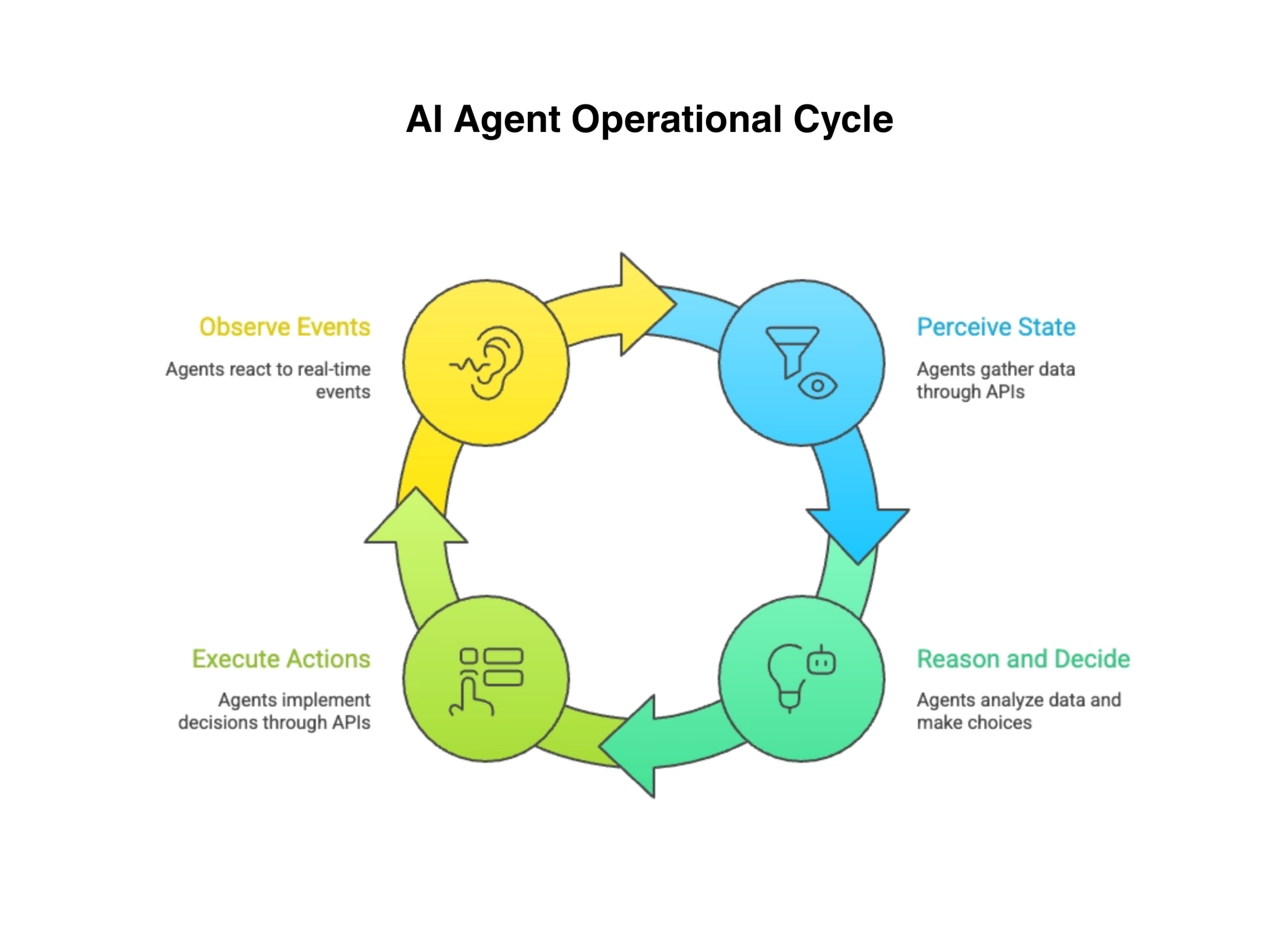

AI agents are not dashboards. They are not reports. They are not chatbots that generate text responses. Agents are autonomous systems that perceive the bank’s state through APIs and events, reason about what they observe, decide on actions, and execute those actions through the bank’s systems.

Perception requires APIs that agents can discover and call programmatically. The agent must be able to query the customer domain, the transaction domain, the lending domain, and the compliance domain through clean, well-documented APIs without custom integration for each connection. The API registry described in the earlier article on APIs becomes critical here: agents must be able to locate the right APIs without a developer manually configuring each connection.
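As a rough illustration of registry-based discovery, the sketch below models a registry that an agent queries by domain instead of hard-coding endpoints. Everything in it is an assumption made for illustration: the `ApiRegistry` and `ApiEntry` names, the fields, and the paths are hypothetical and do not describe any particular core banking product.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ApiEntry:
    """One registered API operation (hypothetical schema)."""
    domain: str      # e.g. "customer", "lending"
    operation: str   # e.g. "get_application"
    path: str        # illustrative path, not a real endpoint
    writes: bool     # True if the operation changes state


class ApiRegistry:
    """Hypothetical registry that agents query instead of hard-coding endpoints."""

    def __init__(self) -> None:
        self._entries: List[ApiEntry] = []

    def register(self, entry: ApiEntry) -> None:
        self._entries.append(entry)

    def discover(self, domain: str, writes: Optional[bool] = None) -> List[ApiEntry]:
        """Return a domain's operations, optionally filtered to reads or writes."""
        return [
            e for e in self._entries
            if e.domain == domain and (writes is None or e.writes == writes)
        ]


registry = ApiRegistry()
registry.register(ApiEntry("customer", "get_profile", "/customers/{id}", False))
registry.register(ApiEntry("lending", "get_application", "/applications/{id}", False))
registry.register(ApiEntry("lending", "approve_application", "/applications/{id}/approve", True))

# The agent asks the registry what it can do in the lending domain.
lending_reads = [e.operation for e in registry.discover("lending", writes=False)]
lending_all = [e.operation for e in registry.discover("lending")]
print(lending_reads)
print(lending_all)
```

In a real deployment the registry would sit behind authentication and return machine-readable contracts rather than bare paths. The point of the sketch is only that discovery is a query, not a developer task.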

Observation requires event streams that trigger agent workflows. A compliance monitoring agent does not poll the transaction system every five minutes looking for suspicious activity. It subscribes to the transaction event stream and responds to events as they occur. The unified event fabric described earlier becomes the agent’s primary sensory input — a continuous stream of everything happening across the bank’s domains.
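The difference between polling and subscribing can be sketched in a few lines. The `EventFabric` class below is a hypothetical in-process stand-in for a streaming platform, and the topic name, event fields, and review threshold are illustrative assumptions rather than real rules.

```python
from collections import defaultdict
from typing import Any, Callable, Dict, List

Event = Dict[str, Any]
Handler = Callable[[Event], None]


class EventFabric:
    """Hypothetical in-process stand-in for the bank's event fabric."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: Event) -> None:
        # Events are pushed to subscribers as they occur; nothing polls.
        for handler in self._subscribers[topic]:
            handler(event)


REVIEW_THRESHOLD = 10_000  # illustrative amount, not a regulatory figure
flagged: List[str] = []


def compliance_agent(event: Event) -> None:
    """Toy rule: flag any transaction at or above the review threshold."""
    if event["amount"] >= REVIEW_THRESHOLD:
        flagged.append(event["txn_id"])


fabric = EventFabric()
fabric.subscribe("transactions", compliance_agent)
fabric.publish("transactions", {"txn_id": "t1", "amount": 250.0})
fabric.publish("transactions", {"txn_id": "t2", "amount": 48_000.0})
print(flagged)
```

The agent's logic runs only when an event arrives, so detection latency is bounded by delivery time rather than by a polling interval.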

Action requires the same APIs to support write operations — not just reads. An onboarding agent that decides a customer application is approved must be able to trigger account opening, card issuance, and online banking enrollment through the core’s APIs. If the APIs are read-only, the agent can observe but not act. It becomes an expensive alerting system rather than an autonomous operator.
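A small sketch makes the read-only limitation concrete. It assumes a hypothetical `CoreApiClient` that records calls rather than contacting a real core, and the operation names are invented for illustration.

```python
from typing import Dict, List, Tuple


class ReadOnlyApiError(Exception):
    """Raised when a write is attempted against a read-only API surface."""


class CoreApiClient:
    """Hypothetical client; records calls instead of contacting a real core."""

    def __init__(self, allow_writes: bool) -> None:
        self.allow_writes = allow_writes
        self.executed: List[Tuple[str, Dict[str, str]]] = []

    def write(self, operation: str, payload: Dict[str, str]) -> None:
        if not self.allow_writes:
            raise ReadOnlyApiError(operation)
        self.executed.append((operation, payload))


ONBOARDING_ACTIONS = ("open_account", "issue_card", "enroll_online_banking")


def act_on_approval(client: CoreApiClient, customer_id: str) -> str:
    """Execute the approval, or degrade to an alert if writes are unavailable."""
    try:
        for operation in ONBOARDING_ACTIONS:
            client.write(operation, {"customer_id": customer_id})
    except ReadOnlyApiError:
        return "alert_raised_for_manual_processing"
    return "onboarding_executed"


full_core = CoreApiClient(allow_writes=True)
read_only_core = CoreApiClient(allow_writes=False)
full_result = act_on_approval(full_core, "c-001")
read_only_result = act_on_approval(read_only_core, "c-001")
print(full_result, read_only_result)
```

Against the read-only client the same decision produces only an alert, which is the "expensive alerting system" outcome described above.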

AI agents operate in continuous loops, perceiving, deciding, acting, and learning from real-time events.

Human-in-the-Loop as an Architectural Pattern

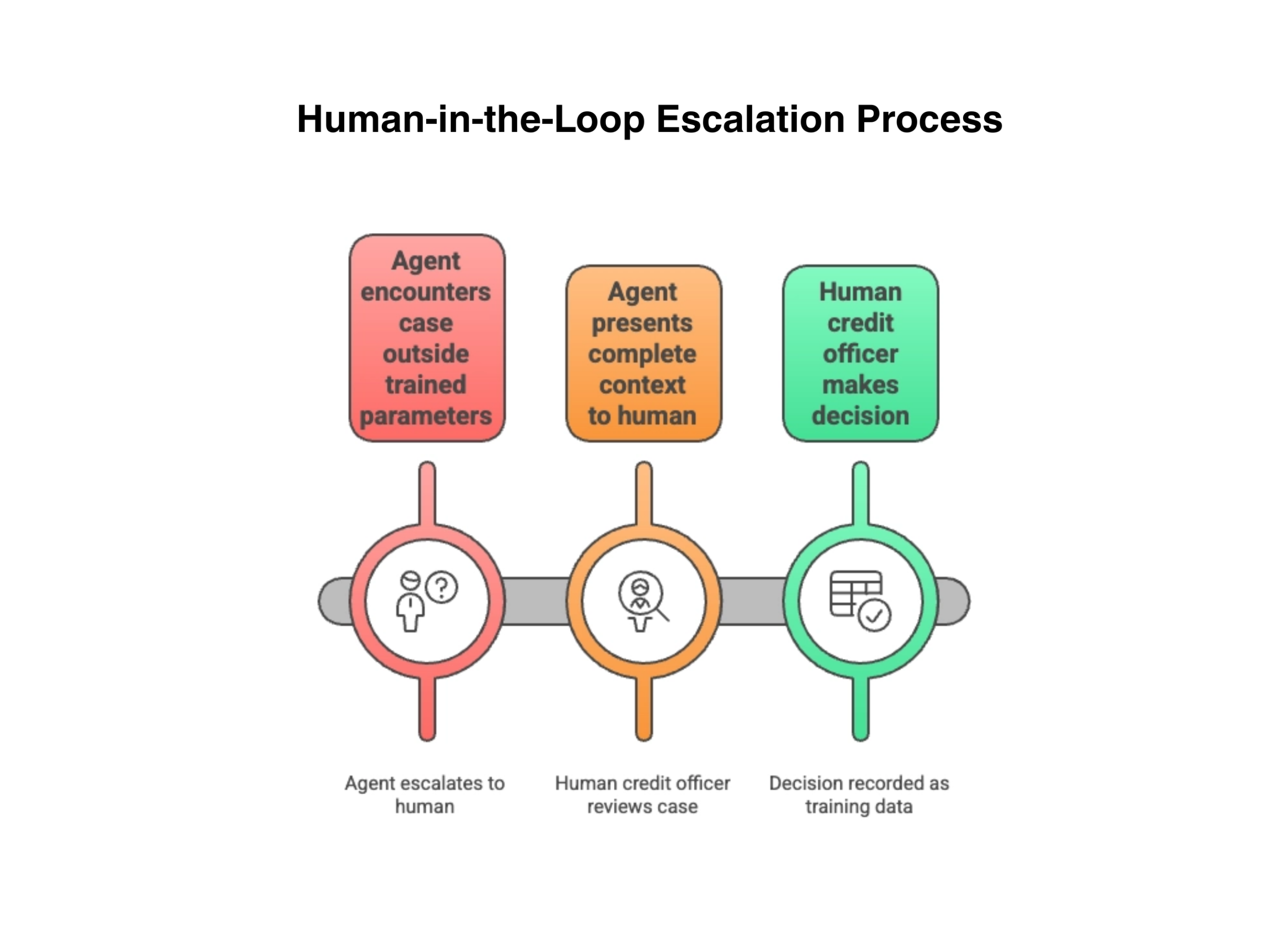

Autonomous does not mean unsupervised. Every agent deployment must include human-in-the-loop escalation paths that are architecturally enforced — not documented in a policy manual and forgotten. The architecture must define when an agent escalates to a human, how it presents the context for the escalation, and what happens to the workflow while waiting for human input.

A lending agent processing a credit application encounters a case that falls outside its trained parameters — an unusual collateral structure, a complex guarantor relationship, a borrower with conflicting signals in their financial history. The agent does not guess. It does not default to approval or denial. It escalates to a human credit officer with a complete package: the data it evaluated, the model’s confidence scores, the specific reason for escalation, and the options it considered. The credit officer makes the decision. The agent records the outcome as training data for future improvement.

This pattern must be enforced by the orchestration layer, not by the agent itself. An agent cannot be trusted to accurately assess its own limitations in every case. The orchestration engine applies confidence thresholds, domain-specific escalation rules, and regulatory requirements that determine when human involvement is mandatory regardless of the agent’s confidence level.
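One way to picture orchestration-level enforcement is a routing function that sits between the agent's proposal and execution. The sketch below is an assumption-laden illustration: the thresholds, action names, and flags are hypothetical policy values, not regulatory guidance.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class AgentProposal:
    domain: str
    action: str
    confidence: float                      # agent's self-reported confidence
    flags: List[str] = field(default_factory=list)


# Hypothetical policy, owned by the orchestration layer rather than the agent.
CONFIDENCE_FLOOR = {"lending": 0.90, "servicing": 0.75}
MANDATORY_REVIEW_ACTIONS = {"deny_credit"}
ESCALATION_FLAGS = {"unusual_collateral", "complex_guarantor"}


def route(proposal: AgentProposal) -> Tuple[str, str]:
    """Return (route, reason); route is "human" or "auto"."""
    # Mandatory review applies regardless of the agent's confidence.
    if proposal.action in MANDATORY_REVIEW_ACTIONS:
        return "human", "mandatory review for this action"
    hits = ESCALATION_FLAGS.intersection(proposal.flags)
    if hits:
        return "human", "domain rule: " + ", ".join(sorted(hits))
    if proposal.domain not in CONFIDENCE_FLOOR:
        return "human", "no policy defined for domain"
    if proposal.confidence < CONFIDENCE_FLOOR[proposal.domain]:
        return "human", "confidence below domain floor"
    return "auto", "within policy"


confident_denial = route(AgentProposal("lending", "deny_credit", 0.99))
clean_approval = route(AgentProposal("lending", "approve_credit", 0.95))
odd_collateral = route(AgentProposal("lending", "approve_credit", 0.95, ["unusual_collateral"]))
print(confident_denial, clean_approval, odd_collateral)
```

The agent never calls `route` on itself. Because the check runs in the orchestration layer, a highly confident agent still cannot bypass a mandatory review.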

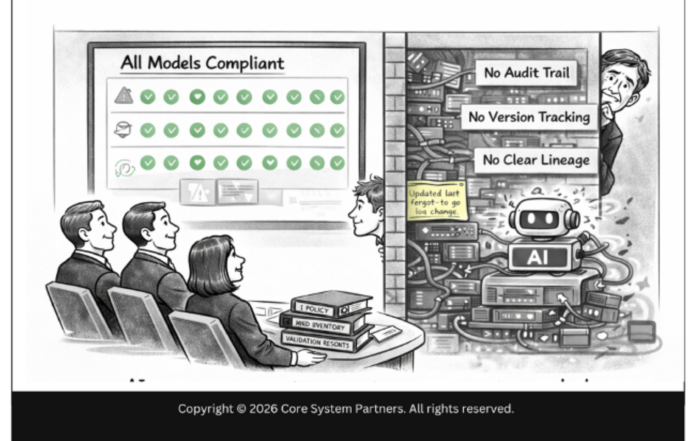

Audit Trails and Rollback

Every agent action must be recorded in an immutable audit trail. Not just the final decision — every step. What data the agent queried. What events it observed. What reasoning path it followed. What action it took. What downstream systems were affected. When an examiner asks why a particular customer received a particular outcome, the bank must be able to reconstruct the agent’s entire decision process from the audit trail.
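A common way to make a step-by-step trail tamper-evident is to chain each record to the hash of the previous one. The sketch below illustrates that idea only; the `AuditTrail` class and its record fields are hypothetical, and a production trail would also need write-once storage and access controls, which this in-memory example does not provide.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64


def _digest(body: Dict[str, Any]) -> str:
    """Deterministic SHA-256 over the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditTrail:
    """Hypothetical append-only log; each record commits to the one before it."""

    def __init__(self) -> None:
        self._records: List[Dict[str, Any]] = []

    def append(self, step: str, detail: Dict[str, Any]) -> None:
        prev_hash = self._records[-1]["hash"] if self._records else GENESIS
        body = {"seq": len(self._records), "step": step,
                "detail": detail, "prev_hash": prev_hash}
        self._records.append(dict(body, hash=_digest(body)))

    def verify(self) -> bool:
        """Recompute every hash and link; any edit to history breaks the chain."""
        prev_hash = GENESIS
        for seq, record in enumerate(self._records):
            body = {k: v for k, v in record.items() if k != "hash"}
            if record["seq"] != seq or record["prev_hash"] != prev_hash:
                return False
            if _digest(body) != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True


trail = AuditTrail()
trail.append("query", {"api": "get_application", "application_id": "a-17"})
trail.append("observe", {"topic": "transactions", "txn_id": "t2"})
trail.append("act", {"action": "approve_credit"})
intact = trail.verify()

# Simulate someone rewriting history after the fact.
trail._records[2]["detail"]["action"] = "deny_credit"
after_tampering = trail.verify()
print(intact, after_tampering)
```

Each record type maps to one of the questions above: what was queried, what was observed, and what action was taken.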

This is not a theoretical regulatory requirement. SR 11-7 and OCC 2011-12 established model risk management expectations that apply directly to agent-based systems. When an agent chains multiple models into an autonomous workflow — a credit model feeding a pricing model feeding an approval decision — each model’s contribution must be individually traceable. Examiners will ask not just what decision was made but which model made which component of that decision, what data each model consumed, and whether the model versions in production at the time of the decision matched the versions that were validated. Banks that cannot answer these questions for agent workflows face the same examination consequences they would face for any unvalidated model — MRAs, MRIAs, and potential enforcement actions.
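The version-matching question lends itself to a simple check. In this sketch, each step in a chained workflow records which model ran, at which version, and on what inputs, and a helper compares those versions against an inventory of validated ones. The model names, version strings, and inventory structure are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class ModelStep:
    """One model's contribution to a chained decision (hypothetical schema)."""
    model: str
    version: str
    inputs: Tuple[str, ...]   # names of the data fields consumed
    output: str


# Hypothetical inventory of versions that passed validation.
VALIDATED_VERSIONS: Dict[str, Set[str]] = {
    "credit_score": {"2.3.1"},
    "pricing": {"1.8.0", "1.8.1"},
    "approval": {"4.0.0"},
}


def unvalidated_steps(chain: List[ModelStep],
                      validated: Dict[str, Set[str]]) -> List[ModelStep]:
    """Return the steps whose model version is not in the validated inventory."""
    return [s for s in chain if s.version not in validated.get(s.model, set())]


decision_chain = [
    ModelStep("credit_score", "2.3.1", ("income", "bureau_file"), "score=712"),
    ModelStep("pricing", "1.9.0", ("score", "term"), "rate=6.4%"),
    ModelStep("approval", "4.0.0", ("score", "rate"), "approve"),
]

gaps = unvalidated_steps(decision_chain, VALIDATED_VERSIONS)
print([(s.model, s.version) for s in gaps])
```

Here the pricing model ran at a version that is absent from the inventory, which is exactly the kind of gap an examiner would ask about.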

Rollback capability is equally critical. When an agent workflow fails midway through a multi-step process, the bank must be able to undo the completed steps without corrupting state. A customer who has been partially onboarded — account opened but compliance verification incomplete — represents a regulatory exposure. The rollback must be automated, not dependent on a developer manually reversing database entries.
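Automated rollback of a multi-step workflow is often implemented with compensating actions, where every step is paired with an operation that undoes it. The sketch below illustrates that pattern under simplifying assumptions: the step names and the in-memory `state` are hypothetical, and compensations are assumed never to fail, which a real system could not take for granted.

```python
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, Callable[[], None], Callable[[], None]]  # name, action, compensation


def run_with_rollback(steps: List[Step], log: List[str]) -> bool:
    """Run steps in order; on failure, undo completed steps in reverse order."""
    completed: List[Tuple[str, Callable[[], None]]] = []
    for name, action, compensate in steps:
        try:
            action()
        except Exception:
            log.append(f"failed:{name}")
            for done_name, undo in reversed(completed):
                undo()
                log.append(f"undone:{done_name}")
            return False
        log.append(f"done:{name}")
        completed.append((name, compensate))
    return True


state: Dict[str, bool] = {"account_open": False, "card_issued": False}


def open_account() -> None:
    state["account_open"] = True


def close_account() -> None:
    state["account_open"] = False


def verify_compliance() -> None:
    raise RuntimeError("verification service unavailable")  # simulated failure


def nothing_to_undo() -> None:
    pass


def issue_card() -> None:
    state["card_issued"] = True


def cancel_card() -> None:
    state["card_issued"] = False


log: List[str] = []
succeeded = run_with_rollback(
    [
        ("open_account", open_account, close_account),
        ("verify_compliance", verify_compliance, nothing_to_undo),
        ("issue_card", issue_card, cancel_card),
    ],
    log,
)
print(succeeded, log, state)
```

When compliance verification fails, the account opened in the first step is closed automatically, so the customer is never left partially onboarded.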

The Architecture Dividend

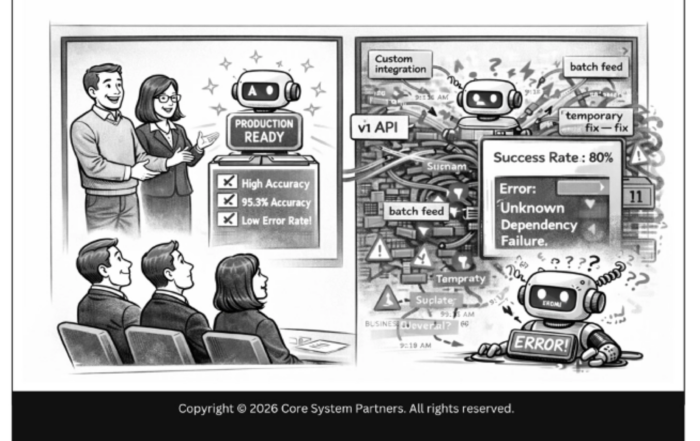

Banks that have done the architectural work described throughout this series — domain separation, governed APIs, event visibility, data ontology, embedded governance — will find that deploying agents is a configuration problem, not an architectural one. The APIs exist. The events flow. The governance infrastructure captures every decision. The escalation paths are built in. Adding a new agent means defining its domain scope, connecting it to existing APIs and event streams, configuring its escalation rules, and activating its audit trail. Weeks of work, not months.
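To make "configuration, not architecture" concrete, the sketch below treats a new agent as a declarative definition that is validated against infrastructure that already exists. The `AgentConfig` fields and the API and topic names are hypothetical, chosen only to mirror the four steps listed above.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple


@dataclass(frozen=True)
class AgentConfig:
    """Hypothetical declarative definition of a new agent."""
    name: str
    domains: Tuple[str, ...]        # domain scope
    apis: Tuple[str, ...]           # existing APIs it may call
    event_topics: Tuple[str, ...]   # existing event streams it subscribes to
    confidence_floor: float         # escalation rule enforced by orchestration
    audit_enabled: bool = True


def validate(config: AgentConfig,
             available_apis: Set[str],
             available_topics: Set[str]) -> List[str]:
    """Return a list of problems; an empty list means the agent can be activated."""
    problems: List[str] = []
    for api in config.apis:
        if api not in available_apis:
            problems.append(f"unknown api: {api}")
    for topic in config.event_topics:
        if topic not in available_topics:
            problems.append(f"unknown topic: {topic}")
    if not 0.0 <= config.confidence_floor <= 1.0:
        problems.append("confidence_floor must be between 0 and 1")
    if not config.audit_enabled:
        problems.append("audit trail must be enabled")
    return problems


EXISTING_APIS = {"get_application", "approve_application", "get_profile"}
EXISTING_TOPICS = {"applications", "transactions"}

servicing_agent = AgentConfig(
    name="loan-servicing",
    domains=("lending",),
    apis=("get_application", "update_payment_plan"),
    event_topics=("applications",),
    confidence_floor=0.9,
)

problems = validate(servicing_agent, EXISTING_APIS, EXISTING_TOPICS)
print(problems)
```

Any problem the validator reports is a gap in the platform, not in the agent: here, one API the bank has not yet exposed.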

Banks that have not done the work will find that every agent deployment is a custom integration project. Each agent requires its own data pipelines, its own API connections, its own governance workaround. The cost multiplies with every agent. The risk compounds with every workaround. The bank builds intelligence on a foundation of exceptions rather than standards.

The architectural investment described in this series is the dividend that pays off when agents arrive. And they are arriving now.

What Comes Next

Agents operate with models that evolve continuously — improving, degrading, being replaced by better versions, and being constrained by new regulations. The next article addresses what the architecture must support when model change is not an event but a constant: continuous intelligence, where the stack must accommodate weekly model updates without operational disruption.

Where to Start

If your bank is exploring AI agents — or already piloting them — the architectural readiness question is urgent. Agents that operate on ungoverned infrastructure create risk that scales with every workflow they touch.

The CSP Transformation Readiness Scorecard evaluates your architecture against the specific requirements of agent-based banking: API completeness for write operations, event fabric maturity, orchestration layer readiness, audit trail infrastructure, and human-in-the-loop enforcement. It gives leadership teams a structured view of agent-readiness gaps in under an hour, with clear prioritization of what to build first.

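The agentic AI capabilities coming to banking in the next three years will require the four architectural prerequisites described in this article: read-and-write APIs that agents can discover, event streams that trigger agent workflows, architecturally enforced human-in-the-loop escalation, and immutable audit trails with automated rollback. Banks that have them will deploy agents. Banks that do not will watch.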

If your institution is ready to move from awareness to action, visit coresystempartners.com/contact to start the conversation.

Next article in the series: Preparing for a World of Continuous Intelligence
