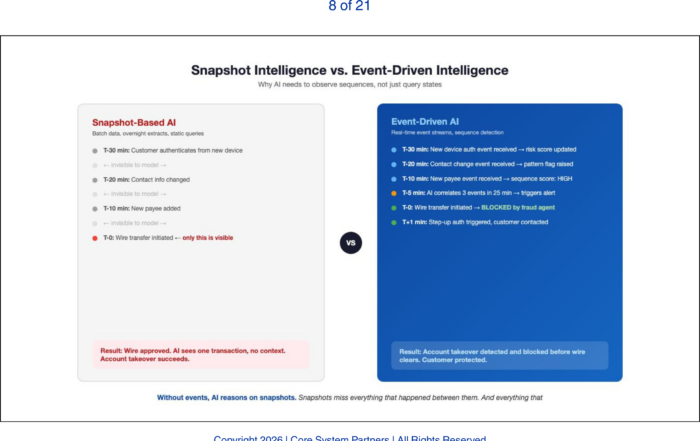

APIs let AI ask questions, but event-driven architecture lets it see what’s happening, making real-time intelligence possible across the bank.

The previous articles built the case for disciplined APIs and a unified event fabric as the infrastructure AI requires. Those articles described what good looks like. This article describes what failure looks like — because most AI initiatives do not fail in the model. They fail in the integration layer.

The pattern is consistent across banks of every size. A promising AI use case works beautifully in the lab. The model performs well on test data. The business case is solid. Then the team attempts to connect the model to production systems and discovers that the integration layer cannot support it. The project stalls, the budget expands, and the initiative either dies quietly or delivers a fraction of its promised value.

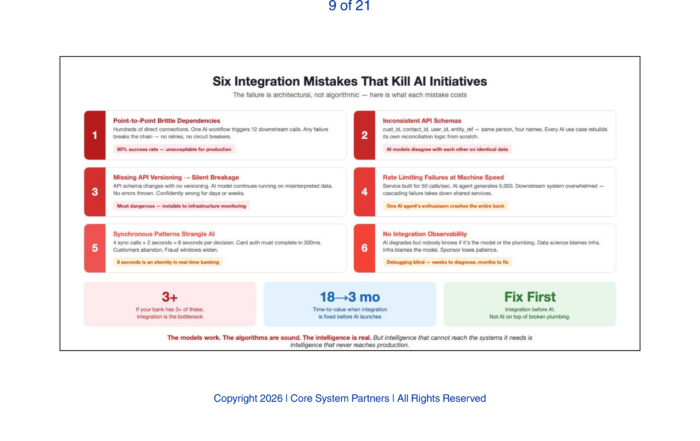

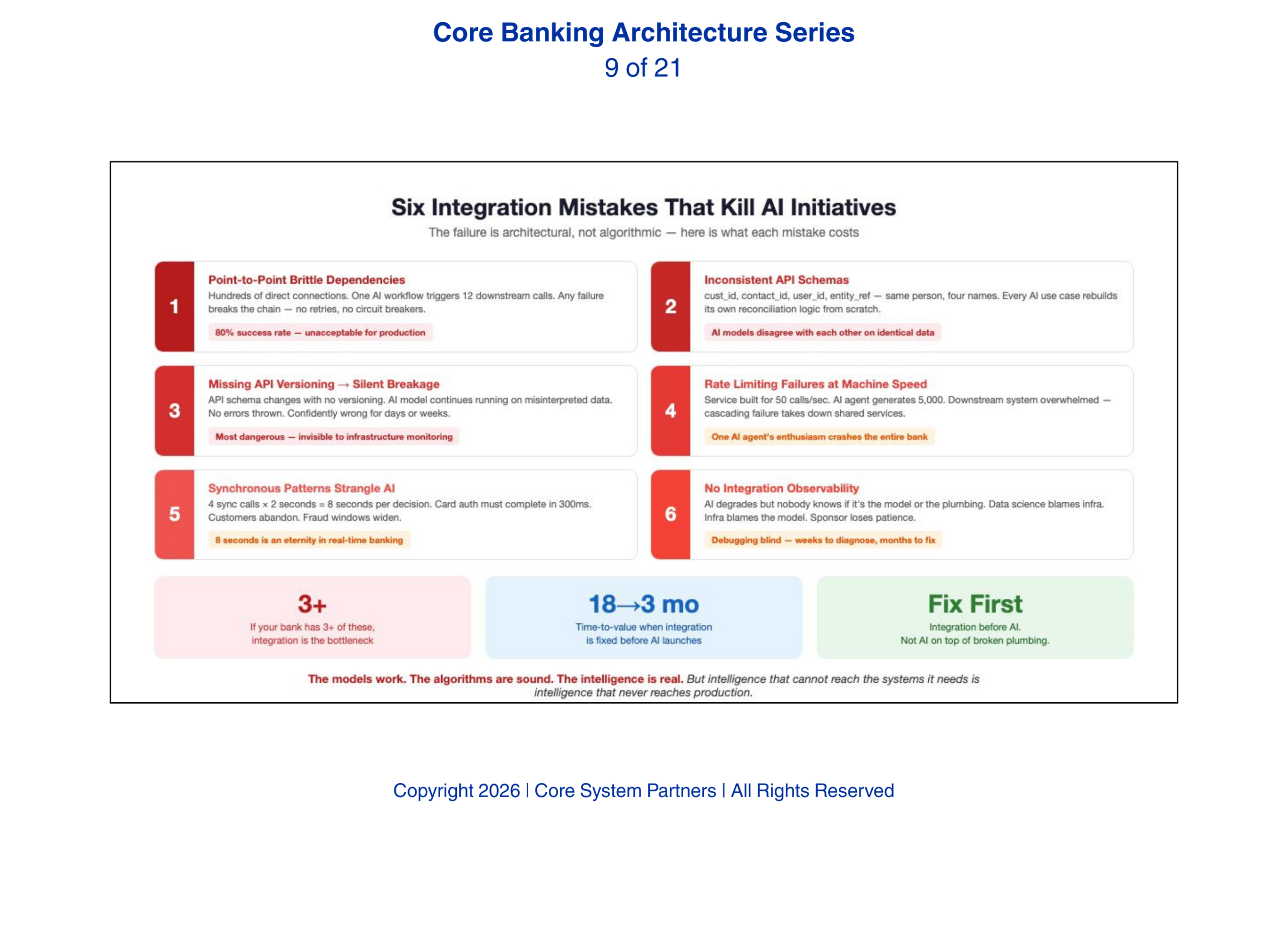

The failure is architectural, not algorithmic. Here are the six integration mistakes that kill AI initiatives, and what each one costs.

1. Point-to-Point Integrations That Create Brittle Dependencies

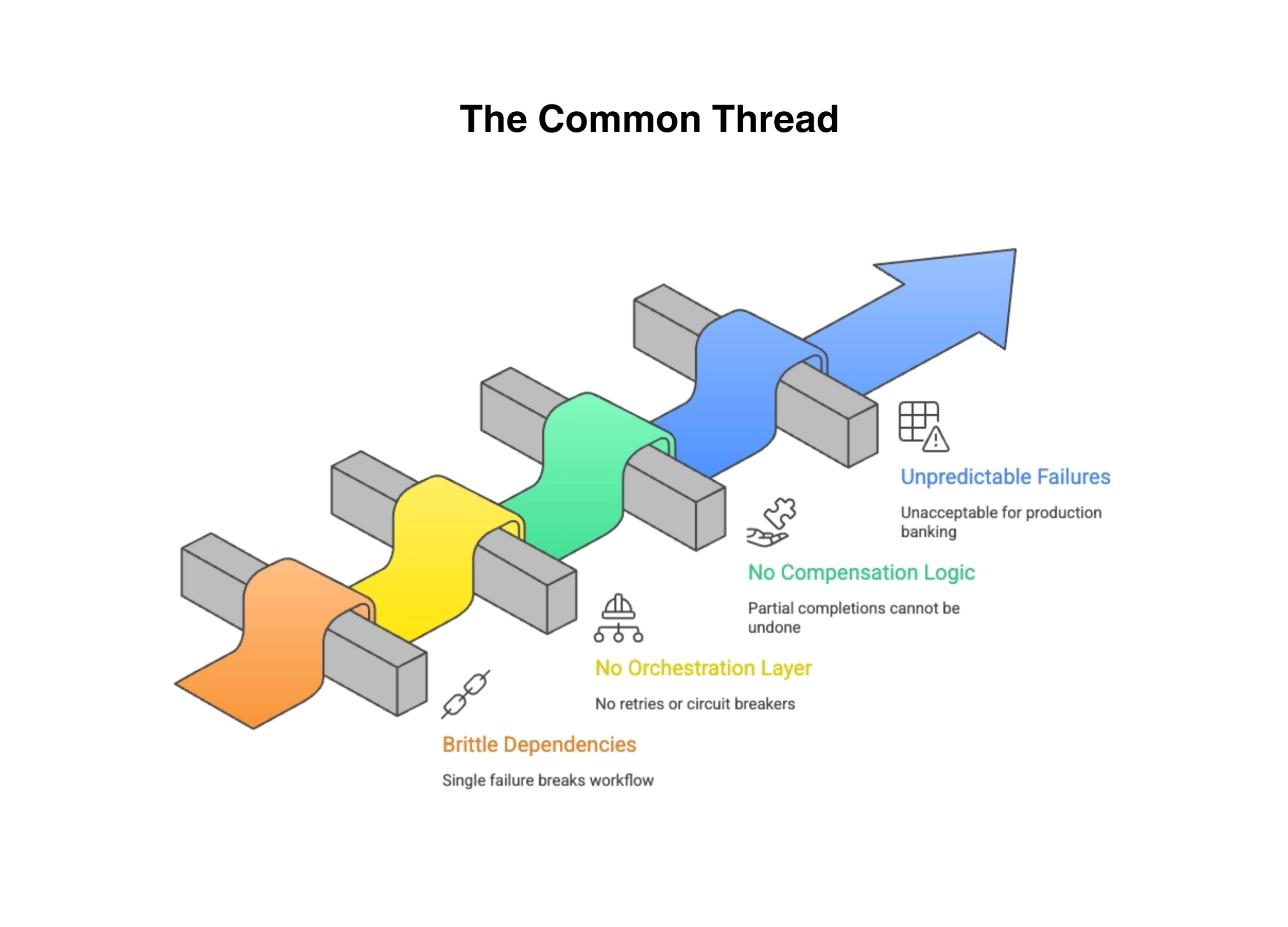

Most bank integration architectures were built one connection at a time over decades. System A needs data from System B, so a developer builds a direct connection. System C needs data from Systems A and B, so two more connections are built. After twenty years, the bank has hundreds of point-to-point integrations forming a web that nobody fully understands.

We have seen this exact pattern derail AI deployments. A single AI workflow — automated onboarding decisioning — triggers twelve downstream system calls: identity verification, credit bureau pull, OFAC screening, account opening, document generation, CRM update, regulatory reporting, fee schedule assignment, card issuance, online banking enrollment, welcome communication, and branch notification. If any one of those point-to-point connections fails, the entire workflow breaks. There is no orchestration layer to manage retries, no circuit breakers to prevent cascading failures, and no compensation logic to undo partial completions.

The result is an AI agent that works eighty percent of the time and fails unpredictably the other twenty percent. That failure rate is unacceptable for production banking operations.
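To make the missing orchestration layer concrete, here is a minimal sketch of two of the three mechanisms named above: retries and compensation logic. It is an illustration under stated assumptions, not a production orchestrator. The step names, the `run_workflow` function, and the retry count are all hypothetical, and a circuit breaker is deliberately left out to keep the example short.

```python
from typing import Callable, List, Optional, Tuple

# Each step is (name, action, compensation). All names here are hypothetical.
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_with_retries(action: Callable[[], None], attempts: int = 3) -> None:
    """Call `action`, retrying on any exception up to `attempts` times."""
    for attempt in range(1, attempts + 1):
        try:
            action()
            return
        except Exception:
            if attempt == attempts:
                raise  # retries exhausted; let the orchestrator compensate


def run_workflow(steps: List[Step], attempts: int = 3) -> Tuple[bool, Optional[str]]:
    """Run steps in order. On failure, undo completed steps in reverse order.

    Returns (True, None) on success or (False, failed_step_name) on failure.
    """
    completed: List[Step] = []
    for step in steps:
        name, action, _ = step
        try:
            run_with_retries(action, attempts)
            completed.append(step)
        except Exception:
            # Compensation: roll back whatever already succeeded, newest first.
            for _, _, compensate in reversed(completed):
                compensate()
            return False, name
    return True, None
```

With this shape, a failed card issuance after a successful account opening closes the account again instead of leaving the onboarding half-finished.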

Most AI initiatives fail not in the model, but in the integration layer that cannot support scale, reliability or real-time execution.

2. Inconsistent API Schemas That Prevent Data Fusion

AI depends on data fusion: combining data from multiple sources to build a complete picture. When every system uses different field names, data types, and entity definitions, fusion becomes a manual mapping exercise that must be repeated for every AI use case. The core system calls the customer identifier cust_id. The CRM calls it contact_id. The digital platform calls it user_id. The fraud system calls it entity_ref. They all refer to the same person, but there is no standardized way to join them without a custom mapping layer.

Every AI initiative that needs a unified customer view starts by building its own reconciliation logic. This is the same work, done differently, every time. It is expensive, error-prone, and produces inconsistent results across use cases. Over time, schema inconsistency degrades AI model performance in a specific way: models trained on reconciled data from one mapping logic produce different results than models trained on the same underlying data reconciled through a different mapping logic. The bank ends up with AI models that disagree with each other — not because the models are wrong, but because their inputs were reconciled inconsistently. The ontology problem from earlier in this series manifests here as an integration cost that multiplies with every new AI deployment.
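The alternative to per-project reconciliation is a single shared mapping that every AI use case reads from. The sketch below shows the idea with an in-memory cross-reference table. The field names come from the example above; the system labels, the sample identifiers, the `XREF` table, and the `fuse` function are hypothetical and stand in for whatever master-data or entity-resolution service a bank actually runs.

```python
from typing import Any, Dict, List, Optional, Tuple

# Which field holds the customer identifier in each source system.
ID_FIELD = {
    "core": "cust_id",
    "crm": "contact_id",
    "digital": "user_id",
    "fraud": "entity_ref",
}

# Hypothetical cross-reference: (system, local id) -> canonical customer id.
XREF: Dict[Tuple[str, str], str] = {
    ("core", "C-1001"): "CUST-1",
    ("crm", "K-77"): "CUST-1",
    ("digital", "u_alice"): "CUST-1",
    ("fraud", "E9F2"): "CUST-1",
}


def canonical_id(system: str, record: Dict[str, Any]) -> Optional[str]:
    """Resolve a source record to the canonical customer id, if known."""
    local_id = record.get(ID_FIELD[system])
    return XREF.get((system, local_id))


def fuse(records: List[Tuple[str, Dict[str, Any]]]) -> Dict[str, Dict[str, Dict[str, Any]]]:
    """Group records from several systems under one canonical customer id."""
    fused: Dict[str, Dict[str, Dict[str, Any]]] = {}
    for system, record in records:
        cid = canonical_id(system, record)
        if cid is None:
            continue  # unmatched; a real pipeline would route this to review
        fused.setdefault(cid, {})[system] = record
    return fused
```

Because every model resolves identities through the same table, two models given the same underlying records see the same fused customer.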

3. Missing API Versioning and Silent Breakage

When an API changes its response format and there is no versioning discipline, AI models trained on the old format break silently. The model continues to run. It continues to produce outputs. But those outputs are based on misinterpreted data. A model trained to read a risk score from a field that now contains a different metric will produce confidently wrong assessments. No error is thrown. No alert fires. The model simply makes bad decisions until someone notices the outcomes — which could be days or weeks later.

Silent breakage is the most dangerous integration failure because it is invisible to infrastructure monitoring. The systems are up. The APIs are responding. The model is running. Everything looks healthy. The decisions are wrong.
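One way to turn silent breakage into a loud failure is to validate every response against the contract the model was trained on before the model sees it. The following is a minimal sketch of that idea. The `schema_version` field, the `risk_score` field name, and the assumed valid range of 0 to 1 are illustrative assumptions, not a description of any particular API.

```python
from typing import Any, Dict

EXPECTED_VERSION = "1"         # hypothetical contract version the model was trained on
RISK_SCORE_RANGE = (0.0, 1.0)  # assumed valid range for the risk score


class SchemaMismatch(Exception):
    """Raised when a payload does not match the contract the model expects."""


def validate_risk_payload(payload: Dict[str, Any]) -> float:
    """Return the risk score, or raise if the payload violates the contract."""
    version = payload.get("schema_version")
    if version != EXPECTED_VERSION:
        raise SchemaMismatch(
            f"expected schema_version {EXPECTED_VERSION!r}, got {version!r}"
        )
    score = payload.get("risk_score")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(score, bool) or not isinstance(score, (int, float)):
        raise SchemaMismatch(f"risk_score must be numeric, got {score!r}")
    low, high = RISK_SCORE_RANGE
    if not low <= score <= high:
        raise SchemaMismatch(f"risk_score {score} is outside [{low}, {high}]")
    return float(score)
```

The range check matters as much as the version check: if the field is repurposed without a version bump, a value such as 640 still stops the pipeline instead of being read as a risk score.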

4. Rate Limiting Failures at Machine Speed

AI agents call APIs at machine speed. A human user might call an API once per session. An AI agent might call it thousands of times per minute. Banks without rate limiting discipline discover this when an AI agent overwhelms a downstream system that was never designed for machine-speed consumption. The downstream system slows, then fails, then takes other systems with it. One AI agent’s enthusiasm brings down a shared service that affects the entire bank.

This is not hypothetical. It is what happens when an AI fraud detection agent, designed to query the customer profile API for every transaction in real time, is deployed against a customer profile service built for mobile banking volumes. The service was built for fifty calls per second. The AI agent generates five thousand.
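A common way to protect a service like this is a token bucket placed in front of it, so that a consumer can never exceed the rate the service was built for. The sketch below is one simple version, assuming a single process and a limit of fifty calls per second to match the example above. The `TokenBucket` class is hypothetical; a real deployment would more likely enforce the limit at an API gateway.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow at most `rate` calls per second, with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity  # start with a full bucket
        self.clock = clock      # injectable so the limiter can be tested
        self.last = clock()

    def allow(self) -> bool:
        """Return True if the call may proceed, False if it must be rejected."""
        now = self.clock()
        # Refill in proportion to the time elapsed, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

An agent that attempts five thousand calls in the same second against `TokenBucket(rate=50, capacity=50)` gets fifty through. The rest are rejected at the edge and can be queued or retried, rather than reaching the profile service.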

5. Synchronous Patterns That Strangle AI Decision Loops

AI orchestration requires non-blocking calls: the agent issues a request and moves to the next task while waiting for the response. Banks with synchronous integration patterns force the agent to wait for each call to complete before proceeding. Four synchronous calls made one after another, at two seconds each, add up to eight seconds of latency for a single decision. In real-time banking operations, eight seconds is an eternity. Customers abandon transactions. Fraud windows widen. Compliance checks time out.

The fix is architectural: asynchronous integration patterns, event-driven communication where possible, and circuit breakers that prevent slow downstream systems from blocking the entire decision loop.
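As a rough illustration of the difference, the sketch below issues several calls concurrently with Python's `asyncio` and puts a timeout on each one. It assumes the calls are independent of one another, which will not hold for every decision. The `call_service` function simulates a downstream system with a sleep, the service names are hypothetical, and the per-call timeout is a simplified stand-in for a full circuit breaker.

```python
import asyncio
from typing import Any, Dict


async def call_service(name: str, delay: float) -> str:
    """Simulate a downstream call that takes `delay` seconds to respond."""
    await asyncio.sleep(delay)
    return f"{name}: ok"


async def decide(delays: Dict[str, float], timeout: float) -> Dict[str, Any]:
    """Call every service concurrently; a slow one times out on its own.

    Returns a mapping of service name to its result, or to the exception
    raised for that call (for example a timeout).
    """
    calls = [
        asyncio.wait_for(call_service(name, delay), timeout)
        for name, delay in delays.items()
    ]
    # return_exceptions=True keeps one failure from cancelling the others.
    results = await asyncio.gather(*calls, return_exceptions=True)
    return dict(zip(delays, results))
```

Run with four services at two seconds each, `asyncio.run(decide(..., timeout=3.0))` completes in roughly two seconds instead of eight, because total latency is set by the slowest call instead of the sum of all of them.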

6. The Absence of Integration Observability

The sixth and perhaps most insidious mistake is the absence of integration observability. When an AI agent’s performance degrades — its decisions become less accurate, its response times increase, its error rate rises — the bank needs to determine whether the problem is the model or the integration layer. Without integration monitoring that tracks API response times, error rates, data quality, and schema consistency, the bank is debugging blind. The data science team blames the infrastructure team. The infrastructure team blames the model. The business sponsor loses patience with both.

Integration observability means monitoring the integration layer with the same rigor applied to infrastructure and application monitoring. It means dashboards that show API latency by endpoint, error rates by consumer, schema drift detection, and data quality metrics — all visible to the AI operations team, not just the infrastructure team.
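Two of the dashboard metrics above, latency by endpoint and error rate by consumer, can be sketched in a few lines. The `IntegrationMetrics` class below is a hypothetical in-memory recorder meant only to show what gets measured and how it is sliced. A real deployment would emit these measurements to an existing monitoring platform and would add schema drift and data quality checks alongside them.

```python
from collections import defaultdict
from typing import Dict, List


class IntegrationMetrics:
    """Record API calls and summarize them by endpoint and by consumer."""

    def __init__(self) -> None:
        self._latencies: Dict[str, List[float]] = defaultdict(list)
        self._calls: Dict[str, int] = defaultdict(int)
        self._errors: Dict[str, int] = defaultdict(int)

    def record(self, endpoint: str, consumer: str, latency_ms: float, ok: bool) -> None:
        """Store one observed call."""
        self._latencies[endpoint].append(latency_ms)
        self._calls[consumer] += 1
        if not ok:
            self._errors[consumer] += 1

    def p95_latency(self, endpoint: str) -> float:
        """95th percentile latency for an endpoint (nearest-rank method)."""
        values = sorted(self._latencies.get(endpoint, []))
        if not values:
            raise ValueError(f"no calls recorded for {endpoint!r}")
        rank = (95 * len(values) + 99) // 100  # integer ceil(0.95 * n)
        return values[rank - 1]

    def error_rate(self, consumer: str) -> float:
        """Fraction of a consumer's calls that failed (0.0 if none recorded)."""
        calls = self._calls.get(consumer, 0)
        return self._errors.get(consumer, 0) / calls if calls else 0.0
```

With numbers like these in front of both teams, a rising error rate for one AI consumer or a latency spike on one endpoint points to the integration layer before anyone starts retraining the model.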

The Common Thread

Every one of these mistakes is an integration problem, not an AI problem. The models work. The algorithms are sound. The intelligence is real. But intelligence that cannot reach the systems it needs, cannot trust the data it receives, and cannot act without breaking downstream dependencies is intelligence that never reaches production.

Banks that fix their integration layer before launching AI initiatives will deploy faster, fail less, and scale further. Banks that launch AI on top of broken integrations will add complexity to fragility — the worst possible combination.

AI initiatives fail for the same reason: weak integration foundations that cannot support reliable, scalable execution.

What Comes Next

With the infrastructure foundations established — architecture, data, APIs, events, and integration discipline — the series now turns to the governance and control layer. This is where the stakes shift from operational efficiency to institutional risk. The next article addresses the hardest truth about AI governance: it is an architectural problem, not a compliance form. Banks that try to govern AI through documentation alone will discover that governance without infrastructure is theater — and examiners are beginning to notice the difference.

Where to Start

The six integration mistakes in this article are diagnostic. If your institution recognizes three or more, the integration layer is the bottleneck that will slow or kill every AI initiative you attempt.

Core System Partners’ Transformation Readiness Scorecard includes an integration health assessment that maps your current integration patterns against the six failure modes described here — giving leadership teams a clear view of where to invest before the next AI pilot begins.

If any of the six integration patterns in this article describe your current architecture, your AI roadmap has a ceiling — and it is lower than the roadmap suggests.

If your institution is ready to move from awareness to action, visit coresystempartners.com/contact to start the conversation.

Next article in the series: AI Model Governance Is an Architectural Problem, Not a Compliance Form

Return to: Why AI makes modern core banking architecture non-negotiable
