AI governance isn’t paperwork; it’s architecture. Without built-in traceability, validation and control, regulatory scrutiny will expose every gap.

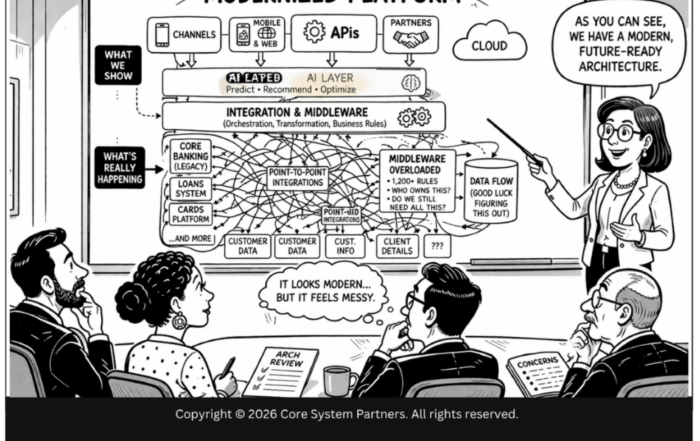

Here is what examiners are finding when they walk into a bank that has deployed AI without architectural governance: model inventories that are six months out of date, validation reports that describe a model version no longer in production, and audit trails that dead-end at a middleware layer nobody can trace through. The governance documentation looks thorough. The infrastructure behind it does not exist.

The previous article argued that AI governance is an architectural problem. This article addresses the external force that makes solving it urgent: regulatory expectations are evolving faster than most banks realize, and the questions examiners are asking have become architectural.

SR 11-7, issued by the Federal Reserve in 2011 and adopted by the OCC as Bulletin 2011-12, established the core principles of model risk management. It defined model risk, required independent validation, and mandated ongoing monitoring. Those principles were written for traditional statistical models such as credit scoring, interest rate risk and ALLL calculations. They are now being extended to cover territory their authors never anticipated: agentic AI systems that make autonomous decisions, real-time decisioning engines that operate faster than human oversight can follow, and AI-orchestrated workflows that chain multiple models into complex decision sequences.

The examination questions are becoming architectural. And banks that cannot answer them architecturally will find themselves managing regulatory risk manually — which is unsustainable at AI scale.

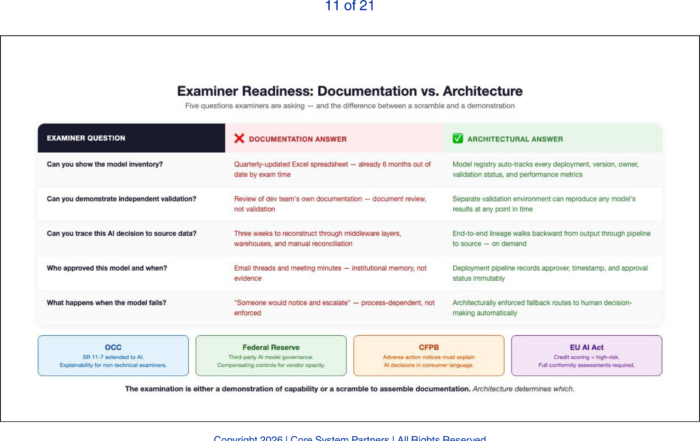

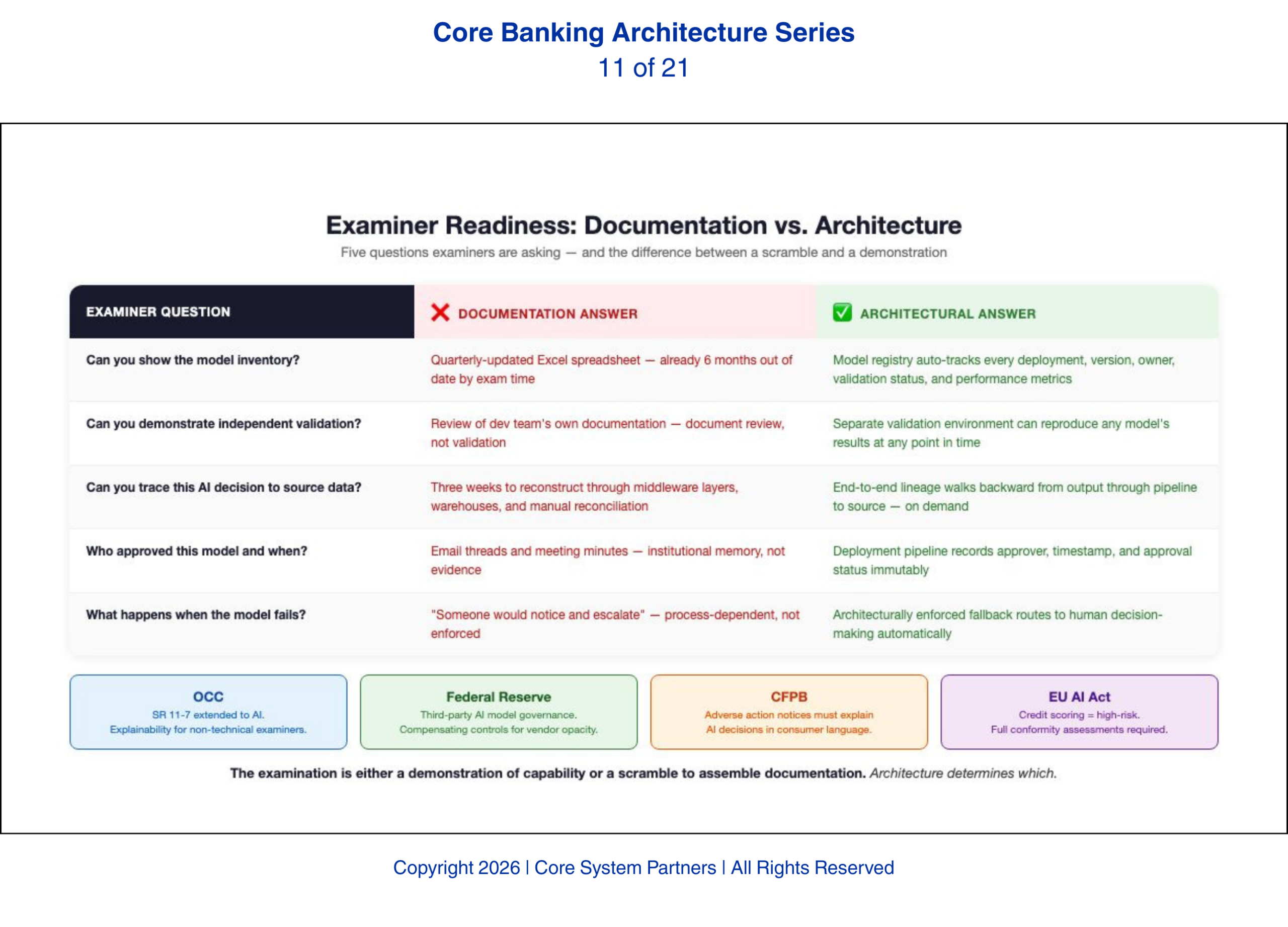

The Questions Examiners Are Asking

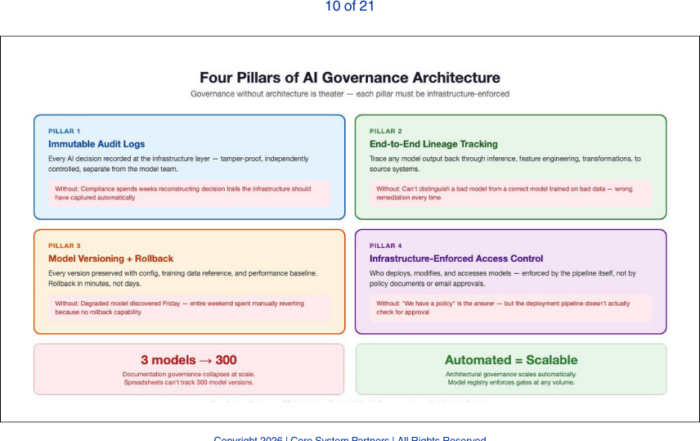

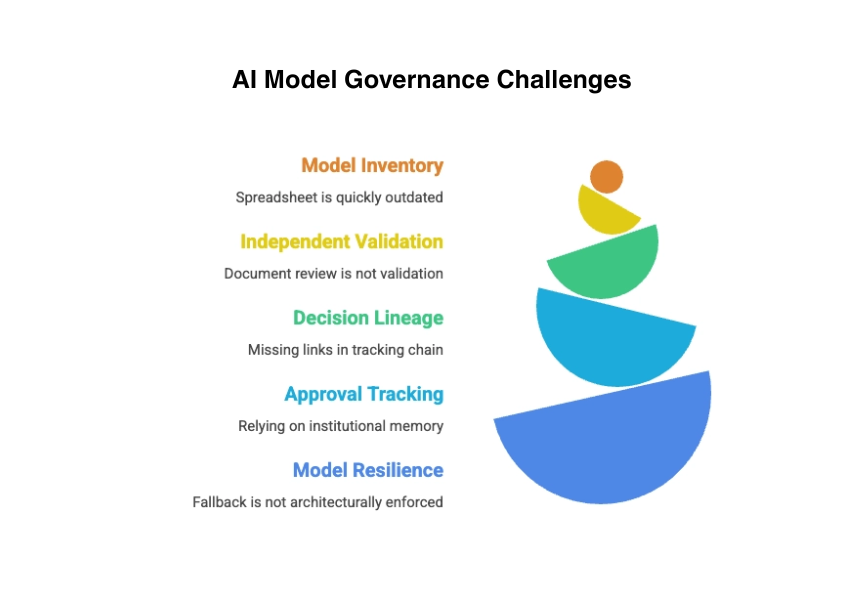

Can you show the model inventory? This sounds like a documentation question. It is not. At AI scale, maintaining a model inventory requires a model registry — an architectural component that automatically tracks every model deployed to production, its version, its owner, its validation status, and its performance metrics. Banks that maintain this inventory in a spreadsheet updated quarterly will find it is already outdated by the time the examiner asks for it.
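To make the distinction concrete, here is a minimal Python sketch of what a registry adds over a spreadsheet, assuming a hypothetical in-memory `ModelRegistry` that the deployment pipeline writes to on every release. The class, its field names and the `validation_gaps` query are illustrative only, not a product API or a regulatory schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional, Tuple


@dataclass
class ModelRecord:
    """One inventory entry; field names are illustrative, not a standard schema."""
    name: str
    version: str
    owner: str
    validation_status: str  # e.g. "validated", "pending"
    deployed_at: datetime
    last_validated: Optional[datetime] = None
    metrics: Dict[str, float] = field(default_factory=dict)


class ModelRegistry:
    """Hypothetical in-memory registry. The deployment pipeline writes to it,
    so the inventory cannot drift from what is actually in production."""

    def __init__(self) -> None:
        self._records: Dict[Tuple[str, str], ModelRecord] = {}

    def register(self, record: ModelRecord) -> None:
        # Called by the deploy step, not by a person updating a spreadsheet.
        self._records[(record.name, record.version)] = record

    def inventory(self) -> List[ModelRecord]:
        return sorted(self._records.values(), key=lambda r: (r.name, r.version))

    def validation_gaps(self, now: datetime, max_age: timedelta) -> List[ModelRecord]:
        """Entries that are unvalidated or whose last validation is older than max_age."""
        return [
            r for r in self.inventory()
            if r.validation_status != "validated"
            or r.last_validated is None
            or now - r.last_validated > max_age
        ]
```

Because `register` is called by the deploy step rather than by a person, the inventory reflects production by construction; a real registry would persist these records and expose the same query to the model risk team.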

Can you demonstrate independent validation? Validation requires the ability to reproduce a model’s results in an environment controlled by a team other than the model developers. This requires infrastructure: a separate validation environment, access to the same training data, the ability to reconstruct the model’s configuration at any point in time. Banks that validate models by reviewing the development team’s documentation are not performing independent validation. They are performing document review.
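A minimal sketch of such a reproduction check, assuming the developers record a fingerprint of the training configuration and data snapshot plus predictions on a holdout set, and the validation team re-runs training in its own environment. The `fit` function is a toy least-squares stand-in for real training, and `snapshot_id` and `reproduce` are hypothetical names.

```python
import hashlib
import json
from typing import Dict, List, Tuple


def snapshot_id(config: Dict, rows: List[Dict]) -> str:
    """Deterministic fingerprint of the training configuration plus data snapshot."""
    blob = json.dumps({"config": config, "rows": rows}, sort_keys=True).encode("utf-8")
    return hashlib.sha256(blob).hexdigest()


def fit(config: Dict, rows: List[Dict]) -> Dict[str, float]:
    """Toy stand-in for training: ordinary least squares y = a + b * x."""
    xs = [r[config["x_col"]] for r in rows]
    ys = [r[config["y_col"]] for r in rows]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return {"a": my - b * mx, "b": b}


def reproduce(recorded: Dict, config: Dict, rows: List[Dict],
              holdout_x: List[float], tol: float = 1e-9) -> Tuple[bool, str]:
    """Run by the validation team, in its own environment, from recorded inputs."""
    if snapshot_id(config, rows) != recorded["snapshot_id"]:
        return False, "inputs differ from what the developers recorded"
    model = fit(config, rows)
    preds = [model["a"] + model["b"] * x for x in holdout_x]
    expected = recorded["holdout_predictions"]
    if len(preds) != len(expected) or any(abs(p - e) > tol for p, e in zip(preds, expected)):
        return False, "predictions do not reproduce"
    return True, "reproduced"
```

Real models add sources of nondeterminism (random seeds, library versions, hardware), so a production version would also pin those in the recorded configuration and compare predictions within an agreed tolerance.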

Can you trace a specific AI decision back to source data? This is the lineage question, and it requires the end-to-end tracking infrastructure described in the previous article. When an examiner points to a specific lending decision or fraud alert and asks why the AI made that call, the bank must be able to walk backward from the output through the model, through the feature pipeline, through the data transformations, to the source systems. If any link in that chain is missing, the trace fails.
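Lineage can be modeled as a graph of edges from each artifact to the artifact it was derived from. The sketch below assumes hypothetical artifact IDs, with a `source:` prefix marking source systems; it walks backward from a decision and reports every point where the chain stops short of a source, which is where an examiner's trace would fail.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class LineageEdge:
    child: str   # artifact that was produced, e.g. "decision:42"
    parent: str  # artifact it was derived from
    step: str    # process that produced child from parent


def trace_to_sources(decision_id: str, edges: List[LineageEdge],
                     source_prefix: str = "source:") -> Tuple[List[LineageEdge], List[str]]:
    """Walk backward from a decision. Returns the edges traversed and any
    artifacts whose upstream chain stops before reaching a source system."""
    parents: Dict[str, List[LineageEdge]] = defaultdict(list)
    for e in edges:
        parents[e.child].append(e)

    traversed: List[LineageEdge] = []
    dead_ends: List[str] = []
    stack, seen = [decision_id], set()
    while stack:
        node = stack.pop()
        if node in seen or node.startswith(source_prefix):
            continue  # already visited, or reached a source system
        seen.add(node)
        upstream = parents.get(node, [])
        if not upstream:
            dead_ends.append(node)  # a missing link: the trace fails here
            continue
        for e in upstream:
            traversed.append(e)
            stack.append(e.parent)
    return traversed, dead_ends
```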

Can you show who approved this model and when? This requires the infrastructure-enforced access control from the previous article. The deployment pipeline must record the approval, the approver, and the timestamp. If the approval process exists only in email threads and meeting minutes, the bank is relying on institutional memory rather than architectural evidence.
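The difference between an approval in an email thread and one the pipeline enforces can be sketched in a few lines. This assumes a hypothetical `deploy` step that refuses to promote a model unless a recorded approval exists from someone other than the developer, and that writes the approver and timestamps to an audit log as a side effect of deploying.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Approval:
    model: str
    version: str
    approver: str
    approved_at: datetime


class DeploymentBlocked(Exception):
    """Raised when the pipeline refuses to promote a model."""


def deploy(model: str, version: str, developer: str, approvals: List[Approval],
           audit_log: List[Dict], now: Optional[datetime] = None) -> Dict:
    """Hypothetical pipeline step: no recorded, independent approval, no deployment."""
    matching = [a for a in approvals if (a.model, a.version) == (model, version)]
    if not matching:
        raise DeploymentBlocked(f"no recorded approval for {model} {version}")
    approval = max(matching, key=lambda a: a.approved_at)  # most recent approval
    if approval.approver == developer:
        raise DeploymentBlocked("approver must be independent of the developer")
    entry = {
        "event": "deploy",
        "model": model,
        "version": version,
        "approver": approval.approver,
        "approved_at": approval.approved_at.isoformat(),
        "deployed_at": (now or datetime.now(timezone.utc)).isoformat(),
    }
    audit_log.append(entry)  # evidence is produced by deploying, not compiled later
    # ...promotion to production would happen here...
    return entry
```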

Can you demonstrate what happens when the model fails? This is the resilience question. The bank must show that model failure triggers a defined fallback (typically routing to human decision-making) and that this fallback is architecturally enforced, not dependent on someone noticing the failure and intervening manually.

AI governance breaks down where documentation replaces architecture, exposing gaps in inventory, validation, lineage, approvals and resilience.
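For the resilience question, "architecturally enforced" means the fallback sits in the decision path itself. A minimal sketch, assuming a hypothetical model callable that returns a score and a confidence, and an illustrative confidence threshold of 0.7:

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Decision:
    outcome: str     # "approve", "decline", or "pending_review"
    decided_by: str  # "model" or "human_review"
    reason: str


def decide(application: Dict, model: Callable[[Dict], Tuple[float, float]],
           review_queue: List[Dict], min_confidence: float = 0.7) -> Decision:
    """Every failure mode routes to human review in code; nobody has to notice it."""
    try:
        score, confidence = model(application)
    except Exception as exc:  # timeout, bad input, service down, ...
        review_queue.append(application)
        return Decision("pending_review", "human_review", f"model error: {exc}")
    # Written so that NaN scores or confidences also fail the check.
    if not (0.0 <= score <= 1.0) or not (confidence >= min_confidence):
        review_queue.append(application)
        return Decision("pending_review", "human_review", "invalid score or low confidence")
    return Decision("approve" if score >= 0.5 else "decline", "model", f"score={score:.2f}")
```

The model error, the invalid score and the low-confidence case all end in the same place without anyone watching a dashboard; the thresholds themselves are illustrative and would be set by the bank's own risk appetite.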

The Regulatory Signals

The OCC has been the most explicit about extending model risk management to AI. OCC Bulletin 2024-20 and subsequent supervisory communications have made clear that AI models are models under SR 11-7 regardless of their underlying technology. What the OCC is actually looking for in examinations is evidence that the governance framework is operational, not aspirational. The OCC expects banks to apply the same validation, monitoring, and governance standards to machine learning models that they apply to traditional models — with additional scrutiny for models that are difficult to explain or that operate with limited human oversight. The practical implication: if your AI model cannot produce an explanation that a non-technical examiner can follow, you have an explainability gap that the OCC will flag.

The Federal Reserve has focused on the third-party risk dimension, first through SR 13-19 and then through the 2023 interagency guidance on third-party relationships (SR 23-4) that superseded it, along with its broader oversight of technology service providers. Banks that use AI models provided by vendors or cloud platforms must demonstrate that they govern those models as rigorously as internally developed ones. This is particularly challenging because vendor-provided models are often opaque; the bank may not have access to the model’s architecture, training data, or internal logic. What the Fed is actually looking for is evidence of compensating controls: independent performance monitoring, output validation against known benchmarks, and documented contingency plans for vendor model failure.
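One compensating control that needs no access to the vendor's internals is scoring the vendor model against a benchmark set the bank curates and labels itself. The sketch below assumes a hypothetical `vendor_predict` callable and an illustrative 95% agreement threshold; a breach is the kind of signal a documented contingency plan would key off.

```python
from typing import Any, Callable, Dict, List, Tuple


def benchmark_check(vendor_predict: Callable[[Any], Any],
                    benchmark: List[Tuple[Any, Any]],
                    min_agreement: float = 0.95) -> Dict[str, Any]:
    """Score an opaque vendor model against a bank-curated benchmark set.
    Only inputs and outputs are needed, not the vendor's internals."""
    if not benchmark:
        raise ValueError("benchmark set is empty")
    misses = [x for x, expected in benchmark if vendor_predict(x) != expected]
    agreement = 1 - len(misses) / len(benchmark)
    return {
        "agreement": agreement,
        "breach": agreement < min_agreement,  # would trigger the contingency plan
        "misses": misses,                     # cases to hand to the vendor and validators
    }
```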

The CFPB has taken a consumer protection angle, focusing on algorithmic decision-making in lending, pricing, and servicing. The bureau has made clear that adverse action notices must explain AI-driven decisions in terms consumers can understand. This requires explainability infrastructure — the ability to translate a model’s decision factors into plain language. Banks that deploy complex AI models for consumer-facing decisions without explainability capability are accepting significant fair lending risk. The practical question every bank should ask: if your AI denies a credit application, can your system generate an adverse action notice that cites the specific factors — not “the model determined insufficient creditworthiness” but the actual variables and thresholds that drove the decision?
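For a linear scorecard, one widely used way to produce specific reasons is to rank each feature by how far it pulled the applicant's score below a reference profile. The sketch below assumes that method, plus hypothetical feature names, weights and reason wording; it illustrates the mechanics only and is not a statement of what the CFPB requires. Nonlinear models would need a feature-attribution method in place of the simple weight-times-difference contribution used here.

```python
from typing import Dict, List


def adverse_action_reasons(weights: Dict[str, float], applicant: Dict[str, float],
                           reference: Dict[str, float], reason_text: Dict[str, str],
                           top_n: int = 4) -> List[str]:
    """Rank features by how far they pulled this applicant's score below a
    reference profile in a linear scorecard (higher score = more creditworthy)."""
    contributions = {f: w * (applicant[f] - reference[f]) for f, w in weights.items()}
    # Most negative contribution first; only features that hurt the score count.
    negatives = sorted((c, f) for f, c in contributions.items() if c < 0)
    return [reason_text[f] for _, f in negatives[:top_n]]
```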

For banks with European operations, the EU AI Act introduces additional requirements, including mandatory risk classification, conformity assessments for high-risk AI systems, and transparency obligations. AI systems used to evaluate the creditworthiness of individuals or establish their credit scores are explicitly classified as high-risk under the Act, so any AI model used for consumer lending decisions must meet the Act’s full high-risk requirements, including human oversight, accuracy monitoring, and detailed technical documentation. US banks operating in the EU must comply with these requirements for their European operations, and the framework is likely to influence US regulatory thinking over the next three to five years.

Architecture as Regulatory Readiness

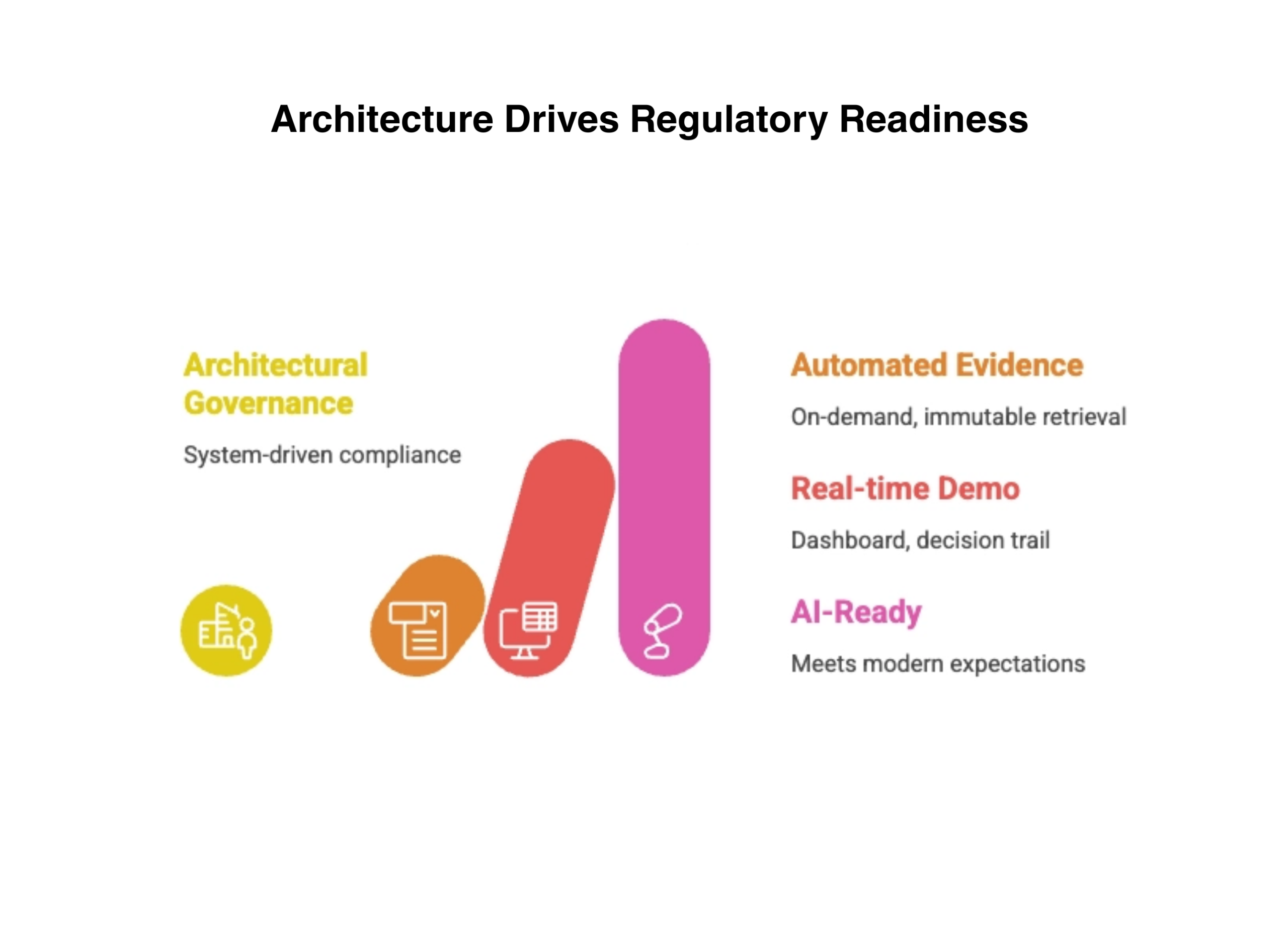

The common thread across every regulatory signal is that compliance requires capability, and capability requires architecture. A bank that can answer examiner questions only by pulling reports and interviewing developers is not compliant. It is managing examination risk manually. That approach works when the bank has three models. It collapses when the bank has thirty.

Banks that have built the governance architecture described in the previous article — model registries, lineage tracking, immutable audit logs, automated monitoring, infrastructure-enforced access control — can answer these questions from the system itself. The evidence is generated automatically, stored immutably, and retrieved on demand. The examination is a demonstration of capability, not a scramble to assemble documentation.
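"Stored immutably" can be approximated in software by hash-chaining the audit log, so each entry commits to the one before it and any later edit is detectable. A minimal sketch, assuming events are JSON-serializable dictionaries; `HashChainedLog` is a hypothetical name, and this makes tampering evident rather than impossible.

```python
import copy
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class HashChainedLog:
    """Append-only audit log where each entry commits to the one before it.
    Editing an earlier entry breaks its hash and every link after it."""

    def __init__(self) -> None:
        self._entries: List[Dict[str, Any]] = []

    @staticmethod
    def _digest(prev: str, event: Dict[str, Any]) -> str:
        body = json.dumps(event, sort_keys=True)
        return hashlib.sha256((prev + body).encode("utf-8")).hexdigest()

    def append(self, event: Dict[str, Any]) -> str:
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        event = copy.deepcopy(event)  # later changes by the caller must not alter the record
        digest = self._digest(prev, event)
        self._entries.append({"event": event, "prev": prev, "hash": digest})
        return digest

    def verify(self) -> bool:
        prev = GENESIS
        for entry in self._entries:
            if entry["prev"] != prev or self._digest(prev, entry["event"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

Verification only proves the chain is internally consistent. Detecting deletion of the most recent entries would also require anchoring the latest hash outside the log's own storage, or writing the log to write-once storage.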

The difference in the examination room is stark. A bank with architectural governance pulls up a dashboard, walks the examiner through the decision trail, and shows the infrastructure controls in real time. A bank with documentation governance asks for two weeks to compile the information — and the examiner writes that down.

That is the difference between a bank that is ready for AI-era regulation and one that is merely compliant with yesterday’s expectations.

Regulatory readiness is no longer built on documentation; it is delivered through architecture, automation and real-time visibility.

What Comes Next

Governance and regulatory readiness address the control plane. But AI introduces operational risks that extend beyond model governance into the bank’s resilience framework. The next article examines why traditional business continuity planning is insufficient for AI-driven operations and what resilience actually looks like when the system is up but the model is wrong.

Where to Start

The five examiner questions in this article are not hypothetical. They are the questions being asked now. If your institution cannot answer all five from infrastructure rather than documentation, the regulatory gap is real and growing.

Core System Partners’ Transformation Readiness Scorecard includes a regulatory readiness assessment that maps your governance infrastructure against current OCC, Fed, and CFPB expectations — giving leadership teams a clear view of examination preparedness in under an hour.

If your institution is ready to move from awareness to action, visit coresystempartners.com/contact to start the conversation.

Next article in the series: Operational Resilience in an AI-Driven Bank

Return to: Why AI makes modern core banking architecture non-negotiable

#CoreBankingTransformation #CoreBankingArchitecture