Modernize without disruption: the strangler pattern replaces legacy systems step by step—unlocking AI value early while reducing transformation risk.

Here is the question every bank executive wants to ask but most will not say aloud: is our core a dead end?

The previous article made the case for modularization as the fastest path to AI readiness. That argument holds for most banks. But not all of them. Some core architectures cannot be decomposed because they were never composed in the first place — they grew, and everything grew together. For those banks, the conversation must shift from how to modularize to when to replace and how to do it without losing half a decade.

The banking industry spent the last decade stigmatizing core replacement as too risky, too expensive, and too disruptive. That stigma was earned. We have sat across the table from executive teams still processing the institutional trauma of a failed conversion — careers derailed, hundreds of millions spent, and in a few cases the viability of the institution itself called into question. The risk is real. Anyone who tells you otherwise is selling something.

But AI has changed the calculus. The cost of deferral is no longer abstract. It is now measurable in specific AI capabilities forgone, competitive positions lost, and operational efficiencies that cannot be captured. Banks clinging to architectures that cannot support intelligent operation are not being conservative. They are accumulating a different kind of risk: the risk of irrelevance.

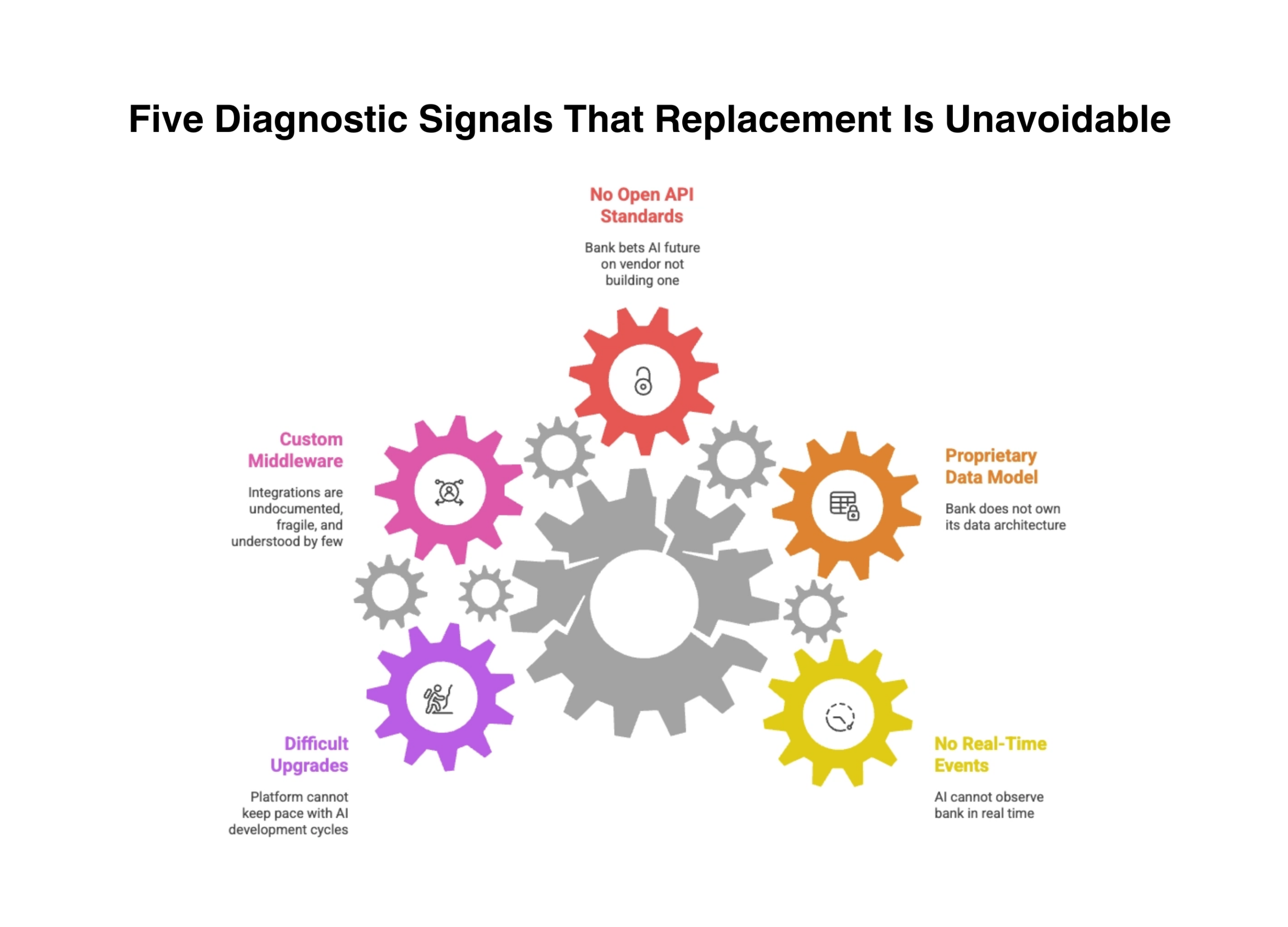

Five Diagnostic Signals That Replacement Is Unavoidable

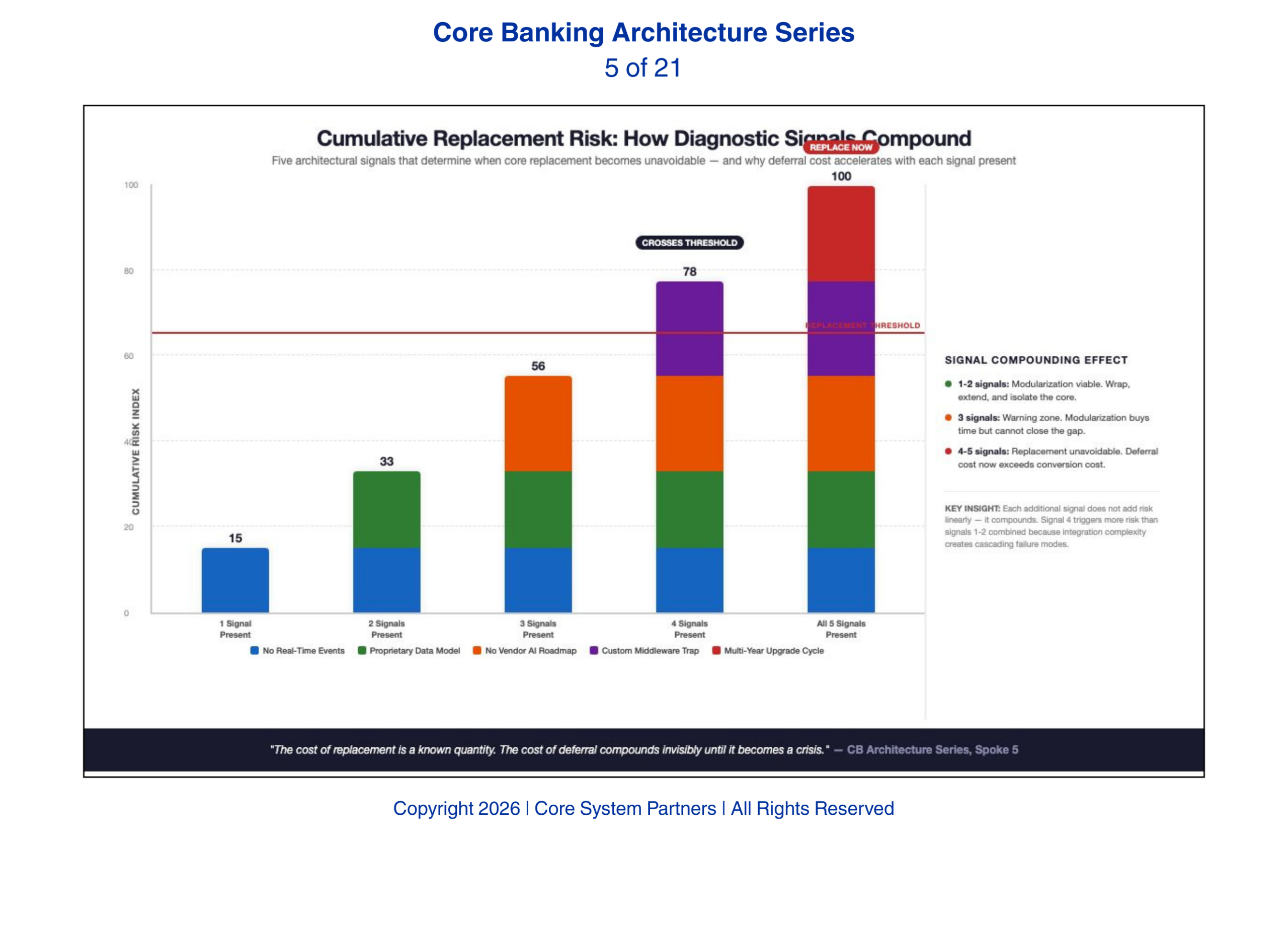

When these five signals appear, modularization may no longer be enough, and core replacement becomes unavoidable.

Not every aging core system needs replacement. Many can be modularized, wrapped, and extended. The decision to replace should be driven by architectural evidence, not frustration. Here are the five signals that indicate modularization will not work and replacement is the only viable path.

First, the core does not support real-time event publishing at any level. Not as a feature that has not been enabled. Not as a capability that requires configuration. The architecture simply has no mechanism for publishing state changes as they occur. Every downstream system operates on batch extracts — nightly files, scheduled reports, periodic database pulls. If the core cannot emit events, AI cannot observe the bank in real time. No amount of middleware changes this. The limitation is architectural, and it is terminal. Banks that ignore this signal discover it during their first real-time fraud detection pilot, when the model works perfectly in the lab and is useless in production because it cannot see transactions as they happen.
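To make the batch limitation concrete, here is a minimal sketch of how long a transaction stays invisible to a downstream model when the only feed is a nightly extract. The 02:00 extract time and the function names are illustrative assumptions, not details of any particular core system.

```python
from datetime import datetime, timedelta


def next_batch_run(txn_time: datetime, batch_hour: int = 2) -> datetime:
    """Return the first nightly extract that starts at or after txn_time.

    batch_hour=2 (a 02:00 extract) is an assumed schedule for illustration.
    """
    run = txn_time.replace(hour=batch_hour, minute=0, second=0, microsecond=0)
    if run < txn_time:
        run += timedelta(days=1)
    return run


def visibility_delay(txn_time: datetime, batch_hour: int = 2) -> timedelta:
    """Time between a transaction and the first extract that can contain it."""
    return next_batch_run(txn_time, batch_hour) - txn_time


# A card transaction at 09:30 is first visible to the model at 02:00 the next day.
morning_txn = datetime(2024, 3, 1, 9, 30)
print(visibility_delay(morning_txn))
```

With event publishing, the equivalent delay is bounded by message delivery time rather than by the extract schedule, which is the gap a fraud model cannot close on its own.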

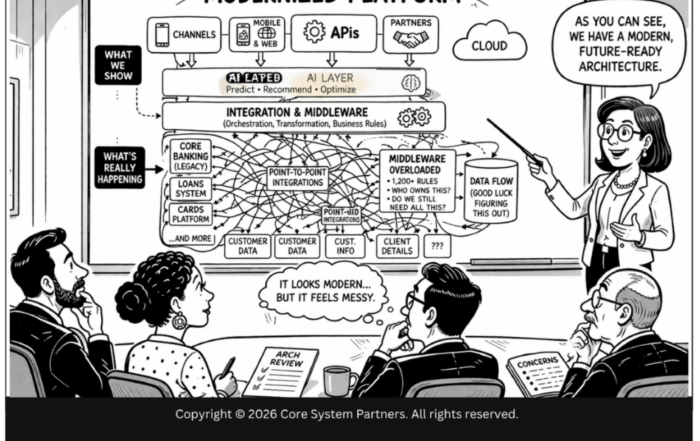

Second, the data model is proprietary and cannot be mapped to a canonical enterprise model without the vendor’s direct involvement. When the bank cannot understand its own data without calling the vendor, it does not own its data architecture. It rents it. AI requires data transparency: models must be trained on data the bank fully understands and controls. A proprietary data model that requires vendor interpretation for every integration is incompatible with AI at scale. We have seen banks spend six months negotiating data dictionary access with their vendor before a single AI model could be trained.

Third, the vendor roadmap does not include open API standards or AI integration capability. Some vendors have recognized the shift and are investing in openness. Others have not. If the vendor’s three-year roadmap does not include production-grade APIs, event publishing, and AI orchestration support, the bank is betting its AI future on a vendor that is not building one. The consequence is measurable: every AI use case the bank wants to deploy will require custom middleware, and that middleware becomes the bank’s most expensive and least governed architectural layer.

Fourth, every integration requires custom middleware that only the original implementer understands. This is the institutional knowledge trap. The bank has spent years building custom connectors, file translators, and batch scripts that link the core to everything else. These integrations are undocumented, fragile, and understood by a shrinking number of people. Adding AI to this ecosystem means adding more custom middleware, compounding the problem rather than solving it. When the two people who understand the middleware retire or leave, the bank discovers it has been running on institutional memory, not architecture.

Fifth, the system cannot be upgraded without a multi-year engagement. If moving from one version of the core to the next requires a dedicated project with its own budget, timeline, and risk assessment, the platform cannot keep pace with AI development cycles. AI models evolve in weeks. A core that evolves in years creates a permanent gap between what the intelligence layer needs and what the infrastructure provides. That gap does not shrink. It widens with every AI advancement the bank cannot adopt.

The Honest Cost of Replacement

Core replacement is expensive. For a community bank, the total cost (software, implementation, data migration, testing, training, and parallel run) typically ranges from fifteen to forty million dollars depending on complexity. For a regional bank, that number can exceed a hundred million. The timeline, even with disciplined execution, is three to five years from contract signing to full production cutover.

These numbers are real and they should not be minimized. But they must be weighed against the cost of staying. Banks that cannot deploy AI-powered fraud detection are absorbing fraud losses that AI would prevent. Banks that cannot offer real-time personalization are losing deposits to competitors that can. Banks that cannot automate compliance workflows are paying for manual processes that scale linearly with volume while AI-enabled competitors scale logarithmically.

The cost of replacement is a known quantity. The cost of deferral compounds invisibly until it becomes a crisis.

Replacement and Modularization Are Not Opposites

The strongest core replacement strategies use modularization as a funding and de-risking mechanism. This is the article’s most important practical insight and it is the one most transformation frameworks miss entirely.

A bank does not have to replace everything at once. It can modularize the capabilities surrounding the core (payments, digital channels, data platforms, compliance engines) while planning the core replacement in parallel. Each modularized capability reduces the blast radius of the eventual core cutover. Each one delivers AI value in the interim.

This is the approach that works in practice. Modularize what you can. Fund the replacement with the operational savings and AI capabilities the modularization delivers. Execute the core replacement as the final step in a transformation that has already proven its value, not as a leap of faith that must justify itself after the fact.

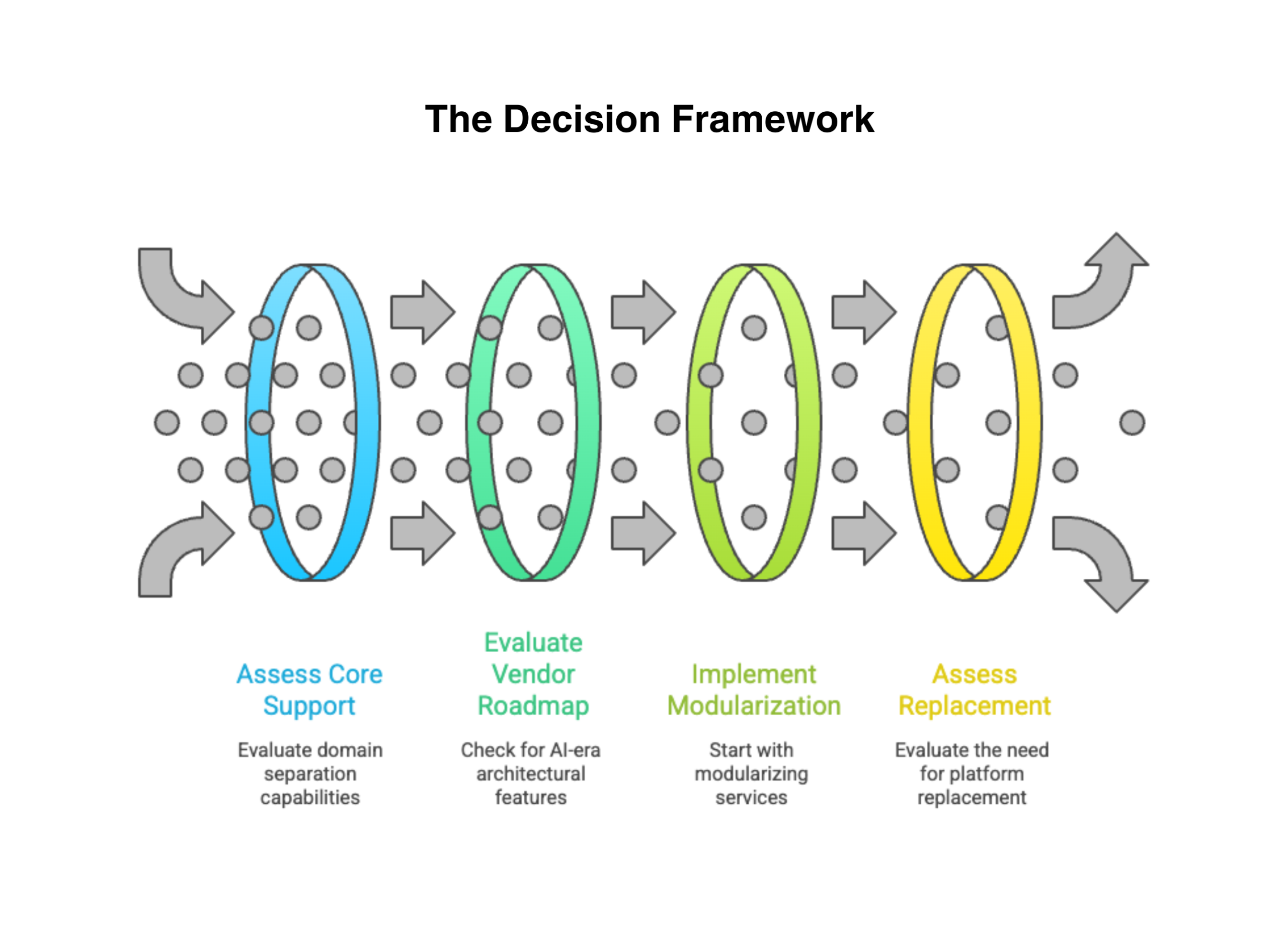

The Decision Framework

Banks facing this decision need a framework that is honest about both paths. The decision rests on two variables.

The first variable: can your core support domain separation? If the answer is yes, meaning you can extract domain services, publish events, and build clean interfaces without the entire monolith collapsing, modularization is the right first move.

The second variable: does your vendor’s roadmap include AI-era architectural capability? If the answer is yes, meaning the vendor is investing in open APIs, event publishing, and AI orchestration, the platform has a future you can build on.

When both answers are yes, modularize. When both answers are no, replace. When the answers are mixed, the sequencing matters: modularize what you can to reduce dependency on the core, then evaluate replacement once the blast radius is manageable.
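The two-variable rule above can be written down as a small lookup. This is only a sketch of the logic as stated in this article; the function and parameter names are illustrative, and a real assessment would rest on architectural evidence rather than two yes/no answers.

```python
def recommend_path(core_supports_domain_separation: bool,
                   vendor_roadmap_is_ai_ready: bool) -> str:
    """Map the two framework variables to the path described in the text."""
    if core_supports_domain_separation and vendor_roadmap_is_ai_ready:
        return "modularize"
    if not core_supports_domain_separation and not vendor_roadmap_is_ai_ready:
        return "replace"
    # Mixed answers: sequencing matters more than the label.
    return "modularize what you can, then evaluate replacement"


# Print the full decision table for all four combinations.
for separable in (True, False):
    for roadmap_ok in (True, False):
        print(separable, roadmap_ok, "->", recommend_path(separable, roadmap_ok))
```

Both mixed cases deliberately return the same answer, because the text prescribes the same sequencing regardless of which variable is the "no".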

Most banks will find they need elements of both. The critical mistake is treating replacement as an all-or-nothing decision. It is not. It is the final phase of a transformation strategy that begins with modularization, proves value incrementally, and executes the core cutover only when the surrounding architecture is strong enough to absorb the disruption.

What Comes Next

Whether a bank modularizes or replaces, the execution pattern matters as much as the decision itself. The next article examines the strangler fig pattern, an architectural approach that lets banks modernize incrementally without stopping operations. It is named for the tropical tree that gradually grows around and replaces its host. It has become the essential execution tool for AI-ready transformation because it resolves the central tension: banks cannot stop running while they rebuild, but they cannot wait to start deploying intelligence.

Where to Start

If more than two of the five diagnostic signals above describe your institution, the conversation has shifted from whether to replace to when and how.
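As a rough self-check, the "more than two of five" threshold can be sketched as follows. The signal identifiers are shorthand invented here for the five signals described earlier, and this is not a representation of any formal assessment tool.

```python
# Hypothetical shorthand labels for the five diagnostic signals in this article.
SIGNALS = (
    "no_real_time_event_publishing",
    "proprietary_data_model",
    "vendor_roadmap_lacks_open_apis",
    "custom_middleware_for_every_integration",
    "multi_year_upgrades",
)


def replacement_conversation_due(observed: set[str]) -> bool:
    """Return True when more than two of the five signals are present."""
    unknown = observed - set(SIGNALS)
    if unknown:
        raise ValueError(f"unrecognized signals: {sorted(unknown)}")
    return len(observed) > 2


observed = {
    "no_real_time_event_publishing",
    "proprietary_data_model",
    "multi_year_upgrades",
}
print(replacement_conversation_due(observed))  # True: three signals observed
```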

Core System Partners’ Transformation Readiness Scorecard gives leadership teams a structured assessment of where they stand across all five signals — and a clear view of whether modularization, replacement, or a phased combination is the right path. The assessment takes under an hour and produces actionable prioritization, not a slide deck.

If your institution is ready to move from awareness to action, visit coresystempartners.com/contact to start the conversation.

Next article in the series: The Strangler Pattern Reborn for AI Workloads

Return to: Why AI makes modern core banking architecture non-negotiable
