AI-ready banking demands more than new tools. It requires modern architecture with clear domains, modular services, real-time events, unified data, built-in governance, and an operating model built for continuous change.

In the hub article for this series, we made the case plainly: AI has turned core banking architecture from a strategic improvement project into a survival requirement. The debate is over.

This article answers the next question. What does an AI-ready architecture actually require?

The answer is not exotic. It comes down to six capabilities that disciplined architecture leaders have advocated for years. What changed is that AI made every one of them non-negotiable.

1. Clear Domain Separation

Deposits, lending, payments, and customer data must have defined ownership and boundaries.

This is the foundation. When AI needs to reason across products, it must know where one domain ends and another begins. Without clear boundaries, models ingest conflicting definitions, duplicated records, and ambiguous ownership. The output looks confident. The underlying logic is unreliable.

What this looks like in practice: Each domain owns its data, publishes well-defined contracts, and does not allow other domains to reach directly into its internals. A lending service does not query the deposit database. It requests what it needs through a governed interface.
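The boundary described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `DepositsService`, `LendingService`, and `DepositSummary` names, their fields, and the 30 percent affordability rule are all hypothetical. The point is structural. Lending depends only on a published contract, never on the deposits domain's private storage.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Dict, List, Protocol, Tuple


@dataclass(frozen=True)
class DepositSummary:
    """Published contract of the deposits domain (hypothetical fields)."""
    customer_id: str
    total_balance: Decimal
    monthly_inflow: Decimal


class DepositsContract(Protocol):
    """The only surface other domains are allowed to depend on."""

    def get_deposit_summary(self, customer_id: str) -> DepositSummary: ...


class DepositsService:
    """Owns deposit data; its storage is private to the deposits domain."""

    def __init__(self) -> None:
        # (balance, monthly_inflow) per account, keyed by customer ID.
        self._accounts: Dict[str, List[Tuple[Decimal, Decimal]]] = {}

    def open_account(self, customer_id: str, balance: Decimal, monthly_inflow: Decimal) -> None:
        self._accounts.setdefault(customer_id, []).append((balance, monthly_inflow))

    def get_deposit_summary(self, customer_id: str) -> DepositSummary:
        accounts = self._accounts.get(customer_id, [])
        return DepositSummary(
            customer_id=customer_id,
            total_balance=sum((b for b, _ in accounts), Decimal("0")),
            monthly_inflow=sum((i for _, i in accounts), Decimal("0")),
        )


class LendingService:
    """Depends on the deposits contract, not on deposit tables."""

    # Hypothetical policy: a payment may not exceed 30% of monthly inflow.
    MAX_PAYMENT_RATIO = Decimal("0.30")

    def __init__(self, deposits: DepositsContract) -> None:
        self._deposits = deposits

    def can_afford(self, customer_id: str, monthly_payment: Decimal) -> bool:
        summary = self._deposits.get_deposit_summary(customer_id)
        return monthly_payment <= summary.monthly_inflow * self.MAX_PAYMENT_RATIO
```

Because `LendingService` accepts anything that satisfies `DepositsContract`, the deposits team can change how it stores data without breaking lending, as long as the contract holds.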

2. Modular Services and Clean Interfaces

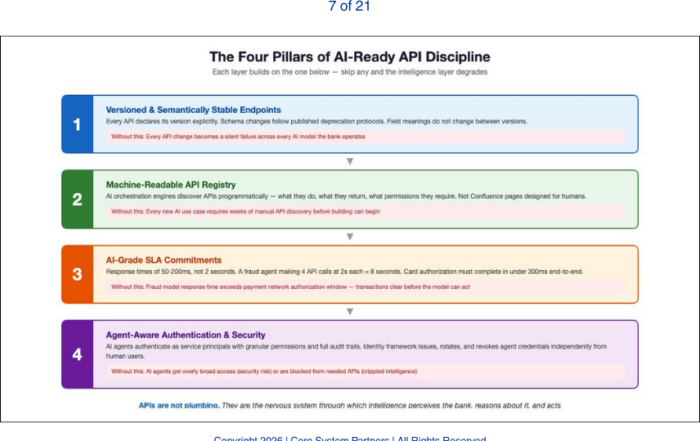

Secure, documented, versioned APIs. Not ad hoc point-to-point integrations.

AI agents and orchestration layers need predictable, stable interfaces. When every integration is custom, extending an AI use case from one domain to another becomes a project unto itself, and scaling AI across the enterprise becomes impractical.

What this looks like in practice: APIs are versioned, documented, and governed centrally. New consumers can onboard without reverse-engineering how a prior team wired a connection five years ago. Changes follow a lifecycle. Breaking changes are communicated, not discovered in production.
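A governed lifecycle can be sketched as a small registry. This is a minimal Python illustration under stated assumptions: the `ApiRegistry` class, the three lifecycle states, and the balance handlers are hypothetical, and a real bank would typically enforce this in an API gateway or management platform rather than in application code. What it shows is that deprecation carries a sunset date consumers are told about, and retirement is an explicit, governed step.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Any, Callable, Dict, List, Optional, Tuple

Handler = Callable[[Dict[str, Any]], Dict[str, Any]]


class Lifecycle(Enum):
    ACTIVE = "active"
    DEPRECATED = "deprecated"
    RETIRED = "retired"


@dataclass
class ApiVersion:
    version: str
    handler: Handler
    lifecycle: Lifecycle = Lifecycle.ACTIVE
    sunset: Optional[date] = None


class ApiRegistry:
    """Central registry that governs which API versions consumers may call."""

    def __init__(self) -> None:
        self._versions: Dict[str, ApiVersion] = {}

    def register(self, version: str, handler: Handler) -> None:
        self._versions[version] = ApiVersion(version, handler)

    def deprecate(self, version: str, sunset: date) -> None:
        # Deprecation is announced with a date so consumers can plan migration.
        entry = self._versions[version]
        entry.lifecycle = Lifecycle.DEPRECATED
        entry.sunset = sunset

    def retire(self, version: str) -> None:
        self._versions[version].lifecycle = Lifecycle.RETIRED

    def call(self, version: str, request: Dict[str, Any]) -> Tuple[Dict[str, Any], List[str]]:
        """Return the response plus any lifecycle notices for the consumer."""
        entry = self._versions.get(version)
        if entry is None or entry.lifecycle is Lifecycle.RETIRED:
            raise LookupError(f"API version {version!r} is not available")
        notices: List[str] = []
        if entry.lifecycle is Lifecycle.DEPRECATED and entry.sunset is not None:
            notices.append(f"{version} is deprecated; sunset on {entry.sunset.isoformat()}")
        return entry.handler(request), notices


# Hypothetical balance API: v2 changes the response shape, a breaking change.
def balance_v1(request: Dict[str, Any]) -> Dict[str, Any]:
    return {"account_id": request["account_id"], "balance": "100.00"}


def balance_v2(request: Dict[str, Any]) -> Dict[str, Any]:
    return {"account_id": request["account_id"],
            "balance": {"amount": "100.00", "currency": "USD"}}
```

Keeping v1 and v2 registered side by side lets existing consumers migrate on a published schedule instead of discovering the new response shape in production.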

3. Event Visibility

The ability to detect and respond to business events in real time or near real time.

The AI use cases that create the most value do not wait for batch cycles. Fraud detection, dynamic pricing, next-best-action, and compliance monitoring all depend on knowing what just happened. If your architecture only surfaces business events through overnight extracts, your AI is always reacting to yesterday.

What this looks like in practice: A customer opens a new account, triggers a payment anomaly, or crosses a credit threshold. The event is published immediately. Downstream services, including AI models, can subscribe and act. The architecture supports event-driven patterns natively, not as an afterthought bolted onto batch infrastructure.
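The publish-and-subscribe pattern behind this can be sketched with an in-memory bus. This is a simplified illustration, not a production design: a real deployment would use a durable event streaming platform such as Apache Kafka, and the event names, payload fields, and the fixed 10,000 anomaly threshold below are hypothetical. What matters is that a service publishes what happened once, and any number of consumers, including AI models, subscribe without the publisher knowing about them.

```python
import uuid
from collections import defaultdict
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable, DefaultDict, Dict, List


@dataclass(frozen=True)
class BusinessEvent:
    event_type: str  # hypothetical naming, e.g. "payment.initiated"
    payload: Dict[str, Any]
    event_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    occurred_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


Subscriber = Callable[[BusinessEvent], None]


class EventBus:
    """In-memory stand-in for a durable event broker."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Subscriber]] = defaultdict(list)

    def subscribe(self, event_type: str, subscriber: Subscriber) -> None:
        self._subscribers[event_type].append(subscriber)

    def publish(self, event: BusinessEvent) -> None:
        # Deliver immediately to every subscriber of this event type.
        for subscriber in self._subscribers.get(event.event_type, []):
            subscriber(event)


# Hypothetical consumers: a fraud-scoring model and a next-best-action engine.
flagged_payments: List[str] = []
welcome_offers: List[str] = []


def fraud_model(event: BusinessEvent) -> None:
    if event.payload["amount"] > 10_000:  # placeholder for a real model score
        flagged_payments.append(event.payload["payment_id"])


def next_best_action(event: BusinessEvent) -> None:
    welcome_offers.append(event.payload["customer_id"])
```

Adding a new AI consumer means one more `subscribe` call; the payment and account services do not change.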

4. Unified Data Architecture With Shared Meaning

Strong data architecture requires both structural integrity and semantic clarity.

Most banks have data models. Tables, fields, relationships, constraints. Fewer banks have an enterprise ontology, a shared understanding of what entities mean across domains. What is a customer in retail versus commercial? What is an exposure in lending versus treasury? AI systems depend on both structure and meaning. Without semantic alignment, automation produces inconsistent outcomes at scale.

What this looks like in practice: A canonical definition of customer, account, product, and relationship exists and is enforced. When an AI model pulls data from multiple domains, it is not reconciling conflicting definitions at runtime. That reconciliation was done at the architecture level.
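Enforcing a canonical definition can be sketched as one validated type plus one adapter per source system. This Python illustration is a deliberate simplification: the `CanonicalCustomer` fields, the retail and commercial source schemas, and the ID prefixes (standing in for a real master-data identifier) are all hypothetical. The design point is that translation happens once, at the architecture layer, so an AI model consuming `CanonicalCustomer` never has to guess what "customer" means in each domain.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Any, Dict


class PartyType(Enum):
    INDIVIDUAL = "individual"
    ORGANIZATION = "organization"


@dataclass(frozen=True)
class CanonicalCustomer:
    customer_id: str   # enterprise-wide identifier (hypothetical scheme)
    party_type: PartyType
    legal_name: str
    source_domain: str

    def __post_init__(self) -> None:
        # The definition is enforced, not just documented.
        if not self.customer_id.strip():
            raise ValueError("customer_id is required")
        if not self.legal_name.strip():
            raise ValueError("legal_name is required")


def from_retail(record: Dict[str, Any]) -> CanonicalCustomer:
    """Retail source (hypothetical schema): cust_no, first_nm, last_nm."""
    return CanonicalCustomer(
        customer_id=f"RET-{record['cust_no']}",
        party_type=PartyType.INDIVIDUAL,
        legal_name=f"{record['first_nm']} {record['last_nm']}".strip(),
        source_domain="retail",
    )


def from_commercial(record: Dict[str, Any]) -> CanonicalCustomer:
    """Commercial source (hypothetical schema): client_id, registered_name."""
    return CanonicalCustomer(
        customer_id=f"COM-{record['client_id']}",
        party_type=PartyType.ORGANIZATION,
        legal_name=record["registered_name"].strip(),
        source_domain="commercial",
    )
```

Records that cannot satisfy the canonical definition are rejected at the boundary rather than passed downstream for a model to reconcile at runtime.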

We will examine the distinction between data models and enterprise ontologies in a dedicated article later in this series.

5. Governance Embedded in the Stack

AI model auditability, traceability, and explainability must be supported by infrastructure.

This series references several types of models: data models that define structure, operating models that define how teams work, and AI models that drive intelligent automation. In this section, the focus is on AI models specifically.

Regulators are not asking whether you use AI. They are asking whether you control it. Can you trace an AI model’s decision back to source data? Can you reproduce its outcome? Can you demonstrate proper access controls over the model and its inputs? If the answer depends on manual documentation rather than architectural capability, governance will fail under pressure.

What this looks like in practice: Data lineage is captured automatically. AI model inputs and outputs are logged. Access controls are enforced through infrastructure, not spreadsheets. When a supervisor asks how a credit decision was made, the answer is retrievable, not reconstructed.
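The logging described above can be sketched as an append-only decision record. This Python sketch is illustrative only: the `DecisionLog` class, the lineage labels, and the simple credit rule standing in for an AI model are hypothetical, and in practice records would live in durable, access-controlled storage rather than in memory. It shows three properties this section calls for: inputs and outputs are captured at decision time, each decision points back to its source data, and the outcome can be reproduced and checked against what was logged.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Callable, Dict, Tuple


def fingerprint(inputs: Dict[str, Any]) -> str:
    """Stable SHA-256 over canonical JSON, used to check logged inputs are unchanged."""
    canonical = json.dumps(inputs, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    model_name: str
    model_version: str
    inputs: Dict[str, Any]
    input_hash: str
    source_lineage: Tuple[str, ...]  # where the inputs came from
    output: Any
    recorded_at: str


class DecisionLog:
    """Append-only, in-memory stand-in for an audited decision store."""

    def __init__(self) -> None:
        self._records: Dict[str, DecisionRecord] = {}

    def record(self, decision_id: str, model_name: str, model_version: str,
               inputs: Dict[str, Any], source_lineage: Tuple[str, ...],
               output: Any) -> DecisionRecord:
        if decision_id in self._records:
            raise ValueError(f"decision {decision_id!r} already recorded")
        snapshot = json.loads(json.dumps(inputs))  # deep copy of the inputs as used
        rec = DecisionRecord(decision_id, model_name, model_version, snapshot,
                             fingerprint(snapshot), tuple(source_lineage), output,
                             datetime.now(timezone.utc).isoformat())
        self._records[decision_id] = rec
        return rec

    def retrieve(self, decision_id: str) -> DecisionRecord:
        return self._records[decision_id]

    def reproduce(self, decision_id: str, model: Callable[[Dict[str, Any]], Any]) -> bool:
        """Re-run a model on the logged inputs and compare with the logged output."""
        rec = self._records[decision_id]
        if fingerprint(rec.inputs) != rec.input_hash:
            return False  # logged inputs no longer match their fingerprint
        return model(rec.inputs) == rec.output


# Hypothetical stand-in for a versioned credit model.
def credit_model_v1(features: Dict[str, Any]) -> str:
    approve = features["credit_score"] >= 680 and features["debt_to_income"] <= 0.40
    return "approve" if approve else "refer"
```

When a supervisor asks about decision `D-0001`, `retrieve` returns the model version, inputs, and lineage as they were at the time, and `reproduce` confirms the outcome rather than relying on someone's recollection.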

6. An Operating Model That Supports Continuous Change

Architecture must be a standing capability with cadence and authority.

AI does not arrive as a one-time project. Models evolve. Use cases expand. Regulatory expectations shift. If your architecture function only activates during major programs and goes dormant between them, you cannot support the pace that AI demands.

What this looks like in practice: Architecture has dedicated leadership, a regular review cadence, and decision-making authority. It is not a committee that reviews diagrams. It is an operational function that governs how the bank evolves its technology estate continuously.

The Baseline, Made Specific

These six capabilities are not aspirational. They are the minimum. Banks that possess them can deploy AI safely and scale it. Banks that lack them will find every AI initiative slower, riskier, and more expensive than it needs to be.

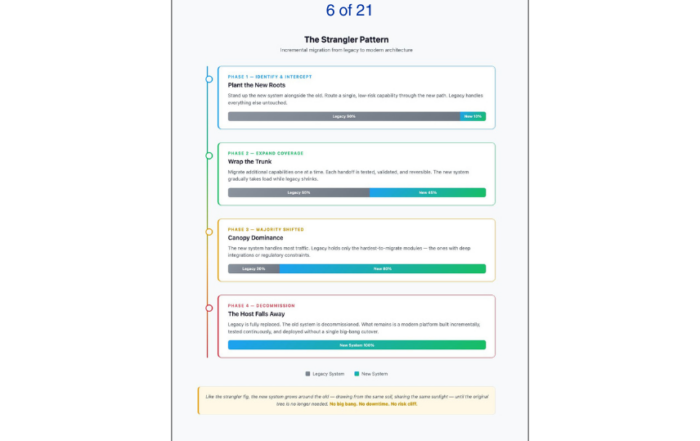

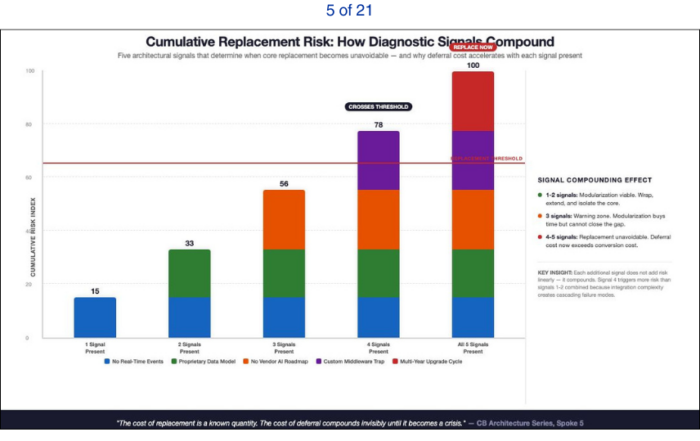

In the next article, we will examine modernization pathways: when you can evolve around an existing core, when replacement becomes the more honest answer, and how to make that decision based on architectural facts rather than vendor narratives.

If you want to assess where your institution stands across these six dimensions, explore the CSP Transformation Readiness Scorecard or schedule a working session.

The gap is measurable. And measurable gaps can be closed.

If your institution is ready to move from awareness to action, visit coresystempartners.com/contact to start the conversation.

Next article in the series: Why Patchwork Modernization Breaks Under AI

Return to: Why AI makes modern core banking architecture non-negotiable
