The previous article established what to evaluate in a core banking vendor’s architecture when AI is the driving consideration. This article addresses the obstacle standing between bank executives and an honest evaluation: AI-washing. Every core banking vendor now claims to be AI-ready. The claims are almost universally overstated. Distinguishing real capability from marketing fiction has become a core competency for bank leadership.

AI-washing is the practice of marketing AI capability that does not exist at the architectural level. Vendors have always overpromised on technology trends: cloud-washing preceded AI-washing, and digital-transformation washing preceded that. But AI-washing differs in two critical respects. First, the gap between marketing and reality is wider than in any prior cycle, because AI capability depends on deep architectural foundations that cannot be retrofitted quickly. Second, the cost of getting fooled is higher because AI decisions compound. A bank that builds its AI strategy on a platform that cannot actually support it loses not just the initial investment but also the compounding advantage that genuine AI capability delivers over the life of the contract.

A bank that buys an AI-washed platform expecting intelligent operation will discover, eighteen months and several million dollars later, that the intelligence was in the brochure, not in the system.

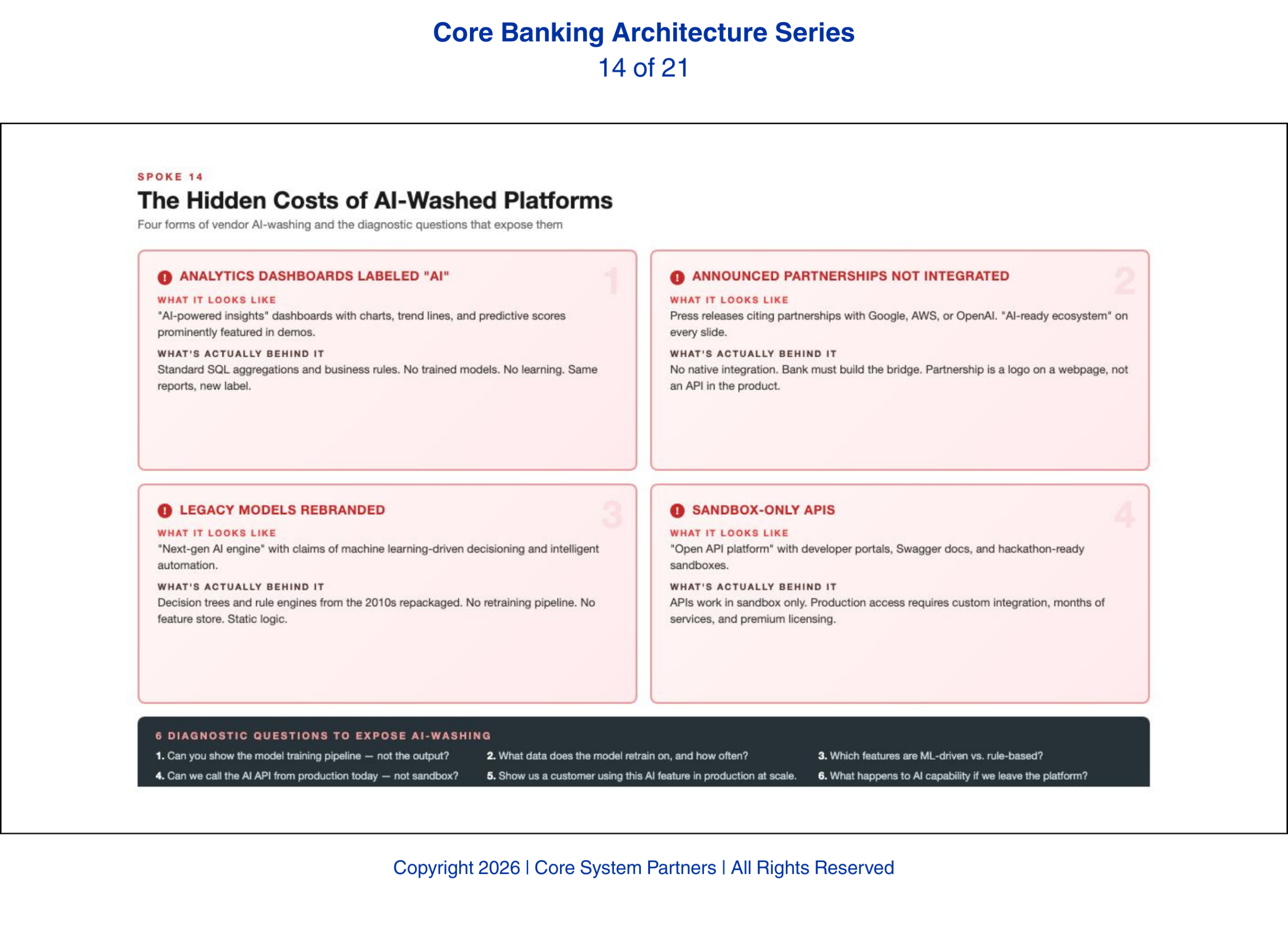

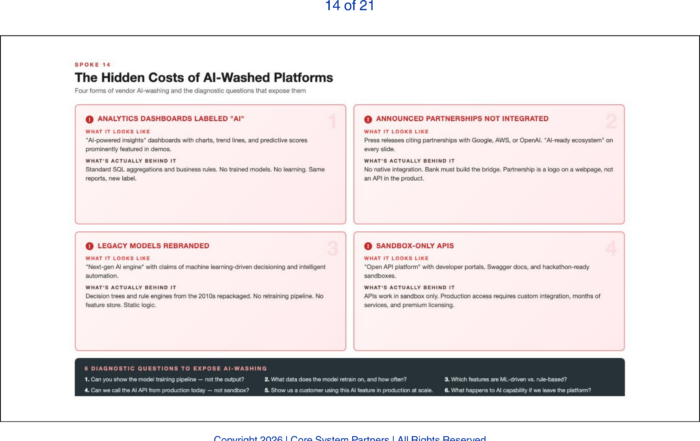

What AI-Washing Looks Like in Practice

The pattern we encounter most consistently is embedded analytics dashboards labeled as AI-powered. The vendor shows a dashboard with charts, trend lines, and summary metrics. The dashboard is described as AI-driven insights. In reality, the dashboard runs on batch-processed data using statistical methods that have been standard since the 1990s. There is no model. There is no learning. There is no inference. There is a SQL query with a chart on top, rebranded as artificial intelligence.

The second form — and we have seen this from vendors of every size — is announced partnerships with AI vendors that have not been architecturally integrated. The vendor issues a press release: strategic partnership with a major AI platform. The implication is that the core system now has AI capability. The reality is that the partnership exists as a contractual relationship, not a technical integration. The AI platform’s models cannot consume data from the core in real time. The core cannot act on the AI platform’s outputs through native APIs. The partnership is a business development exercise, not an engineering achievement.

The third form is legacy statistical models rebranded with AI language. Credit scoring models built twenty years ago are renamed as AI-powered decisioning. Rule-based fraud detection is repositioned as intelligent threat detection. These systems may work well for their original purpose. Calling them AI does not make them AI, and it does not mean the platform can support real-time inference, model orchestration, agent-based workflows, or continuous learning.

The fourth form is open API promises that exist only in the developer sandbox. The APIs work beautifully in the demo. In production, those same APIs have rate limits that prevent AI-scale consumption, stability issues that cause intermittent failures, or coverage gaps that require custom workarounds. The sandbox API and the production API are different products wearing the same name.
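
The rate-limit problem in the fourth form comes down to simple arithmetic, and a bank’s technical team can run it before signing. The sketch below is illustrative only: the function names, the peak factor, and every number in the usage note are assumptions, not figures from any vendor.

```python
def required_calls_per_minute(events_per_day, calls_per_event, peak_factor):
    """Estimate the peak API call rate an AI workload would place on the core.

    All inputs are the bank's own planning assumptions (hypothetical here).
    """
    average_per_minute = events_per_day * calls_per_event / (24 * 60)
    return average_per_minute * peak_factor


def fits_rate_limit(limit_per_minute, events_per_day, calls_per_event, peak_factor=3.0):
    """Return True if the documented production limit covers the estimated peak."""
    needed = required_calls_per_minute(events_per_day, calls_per_event, peak_factor)
    return needed <= limit_per_minute
```

For example, under the assumption of 1,440,000 events per day, two API calls per event, and a peak three times the average, the workload needs 6,000 calls per minute. A production limit of 600 calls per minute would fall short by a factor of ten, however well the sandbox performs.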

Six Diagnostic Questions That Expose AI-Washing

Bank executives evaluating vendor AI claims need specific, verifiable questions that cut through marketing language. These six questions separate vendors who have built the capability from those who have merely announced it.

Show me the real-time event publishing capability in production at a reference client. Not in a demo. Not in a sandbox. In production, at a bank that is actually running on your platform. If the vendor cannot produce a reference client who can attest to real-time event publishing in a production environment, the capability does not exist at production grade.

Let me see the API documentation and test the API stability over thirty days. Not a demo with curated test data. A thirty-day period where the bank’s technical team exercises the APIs under realistic conditions and measures response times, error rates, and schema consistency. Any vendor confident in their API maturity will agree to this. Any vendor that resists is telling you something.
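
One way to keep the thirty-day test honest is to reduce the trial log to the three measures named above. The following is a minimal sketch, assuming the bank’s own test harness records each call as a `Probe`; that record shape, the field names, and the choice of 95th-percentile latency are all hypothetical and not part of any vendor’s tooling.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class Probe:
    """One API call recorded by the bank's test harness (hypothetical shape)."""
    latency_ms: float
    status: int           # HTTP status code returned by the vendor API
    fields: frozenset     # top-level field names seen in the response body


def summarize(probes, expected_fields):
    """Reduce a trial's probe log to response time, error rate, and schema drift."""
    latencies = [p.latency_ms for p in probes]
    # Count server-side failures (5xx) as errors.
    errors = sum(1 for p in probes if p.status >= 500)
    # A successful response whose fields differ from the documented set is drift.
    drifted = sum(
        1 for p in probes if p.status < 400 and p.fields != expected_fields
    )
    return {
        # Last of 19 cut points is the 95th percentile; needs at least 2 probes.
        "p95_latency_ms": quantiles(latencies, n=20, method="inclusive")[-1],
        "error_rate": errors / len(probes),
        "schema_drift_count": drifted,
    }
```

Agreeing on acceptable values for these three numbers before the trial starts keeps the result from being reinterpreted afterward.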

Explain how your data model interacts with an external AI orchestration layer. This question tests whether the vendor has actually thought about AI integration at the architectural level. A vendor with genuine AI capability can explain the data flow: how an external model accesses core data, how it receives events, how it triggers actions through the core’s APIs, and how the data model maps to the bank’s enterprise ontology.

Show me a production example of a third-party AI model trained on your platform’s data. This is the proof of concept that matters. If no bank has successfully trained an external AI model on data from this platform in a production environment, the platform’s AI-readiness is theoretical. Theoretical capability does not survive contact with production reality.

What is your upgrade cadence and how do upgrades affect API compatibility? Quarterly upgrades with backward-compatible APIs indicate a mature platform. Annual upgrades that break API compatibility indicate a platform where AI development will be constantly disrupted by infrastructure instability.
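
The backward-compatibility part of the upgrade question can be checked mechanically rather than taken on trust. Below is a minimal sketch, assuming each API response schema can be flattened into a mapping of field name to type name; that representation and the function name are assumptions for illustration. It treats removed or retyped fields as breaking and added fields as compatible.

```python
def breaking_changes(old_schema, new_schema):
    """List the changes between two schema versions that would break a consumer.

    Schemas are plain dicts of field name -> type name (an assumed, simplified
    representation). Fields added in the new version are not reported.
    """
    changes = []
    for field, old_type in old_schema.items():
        if field not in new_schema:
            changes.append(f"removed: {field}")
        elif new_schema[field] != old_type:
            changes.append(f"retyped: {field} ({old_type} -> {new_schema[field]})")
    return changes
```

Running a check like this against the schemas published before and after each of the vendor’s recent upgrades gives the bank its own evidence of how often compatibility has actually been broken.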

The Data Ownership Question

The sixth diagnostic question deserves its own section because its implications extend beyond vendor evaluation into the foundation of every AI initiative the bank will ever pursue: who owns the data — the bank or the platform?

If the vendor’s licensing terms restrict the bank’s ability to extract, transform, and use its own data for AI training and inference, the bank’s AI capability is contractually limited before a single model is built. We have reviewed vendor contracts where the data extraction clauses were written in an era when the only use case for bulk data extraction was a core conversion. Those clauses were never designed to accommodate continuous AI training pipelines that pull data daily, or model validation processes that require full historical datasets, or agent workflows that need real-time access to transactional records.

Data ownership also determines model portability. If a bank trains a churn prediction model on data extracted from Platform A and later migrates to Platform B, can the model travel? If the training data was licensed rather than owned, the answer may be no — and the bank’s AI investment walks out the door with the old vendor relationship. Data ownership is not a legal technicality. It is the strategic foundation that either enables or constrains every AI initiative the bank will pursue.

The Cost of Getting Fooled

A bank that selects an AI-washed platform pays twice. It pays the contract cost for a platform that promised AI capability. Then it pays the remediation cost to build the AI infrastructure the platform lacks — custom integration layers, middleware event bridges, external data platforms that compensate for the core’s limitations. The second cost often exceeds the first.

Worse, the bank pays in time. The eighteen months spent discovering the platform’s limitations and building workarounds are eighteen months of AI capability forgone. Competitors who selected vendors with genuine architectural capability spent those same months deploying intelligence. In a market where AI advantages compound, eighteen months is not a delay. It is a permanent gap.

What Comes Next

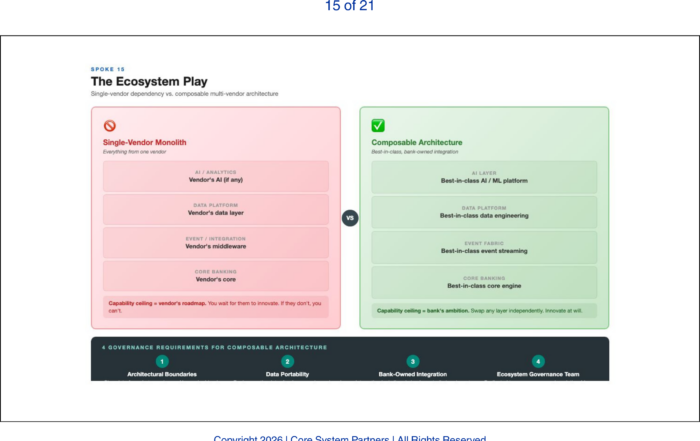

Even vendors with genuine AI capability cannot do everything. The single-vendor model — one platform does it all — is fundamentally incompatible with AI-era banking. AI capability arrives through specialized models, third-party services, and marketplace integrations that no single vendor can provide alone. The next article examines why banks must build composable, multi-vendor ecosystems and what governance is required to prevent ecosystem complexity from becoming ecosystem chaos.

Where to Start

If your bank is evaluating vendor claims, preparing for a renewal, or suspecting that the AI capability you were promised has not materialized, the diagnostic questions in this article are your starting point — but they require architectural context to interpret the answers.

The CSP Transformation Readiness Scorecard includes a vendor AI-readiness assessment that maps your current vendor’s architectural capabilities against the six diagnostic criteria outlined above. It identifies where the gaps are, which gaps can be bridged with middleware, and which require contract-level intervention. Leadership teams get a structured view in under an hour, with clear prioritization of where to act first.

Before your next vendor renewal, run the six diagnostic questions in this article. If more than two answers are unsatisfactory, you may be paying for AI marketing rather than AI capability.
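
The rule of thumb above can be recorded as a simple tally so that the result is documented alongside the renewal file. This is a minimal sketch: the question identifiers are shorthand invented here for the six diagnostic questions, and deciding whether an answer is satisfactory remains a human judgment made outside the code.

```python
# Shorthand identifiers for the six diagnostic questions (names are illustrative).
QUESTIONS = (
    "real_time_events_in_production",
    "thirty_day_api_stability",
    "data_model_and_ai_orchestration",
    "third_party_model_in_production",
    "upgrade_cadence_and_api_compatibility",
    "data_ownership",
)


def assess(answers):
    """Apply the 'more than two unsatisfactory answers' rule.

    `answers` maps each question identifier to True (satisfactory) or False.
    """
    missing = [q for q in QUESTIONS if q not in answers]
    if missing:
        raise ValueError(f"unanswered questions: {missing}")
    unsatisfactory = [q for q in QUESTIONS if not answers[q]]
    return {
        "unsatisfactory": unsatisfactory,
        "likely_ai_washed": len(unsatisfactory) > 2,
    }
```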

If your institution is ready to move from awareness to action, visit coresystempartners.com/contact to start the conversation.

Next article in the series: The Ecosystem Play: Why No Bank Can Build Its AI Future Alone

Return to: Why AI makes modern core banking architecture non-negotiable
