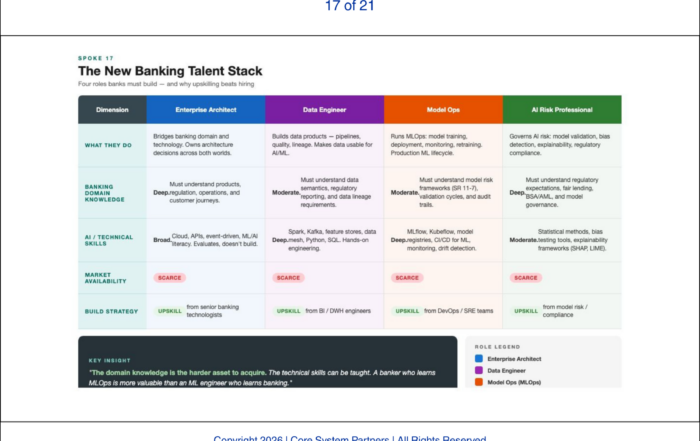

AI readiness in core banking starts with vendor architecture. This scorecard separates real capability from AI-washing.

How AI Reshapes Vendor Selection for Core Banking

For thirty years, core banking vendor selection was primarily a functional exercise. Can the system handle your products? Can it support your volume? Can it meet your regulatory requirements? Can the vendor’s implementation team convert your data and train your staff? These questions still matter. But AI has added a dimension that now dominates the selection decision: can the vendor’s architecture support intelligent operation?

This is a harder question than it sounds. Every vendor will answer yes. The evidence rarely supports the claim. We have sat through enough vendor demonstrations to know the pattern: a polished slide deck showing AI capabilities, a carefully scripted demo in a sandbox environment, and a reference client who deployed one AI use case under ideal conditions. What we rarely see is production-grade evidence — stable APIs under load, real-time event streams in live banking environments, or a data model transparent enough for a bank’s own data team to work with independently.

The vendor’s architecture matters more than the bank’s own architecture in one critical respect: the bank inherits the vendor’s limits. A bank can build perfect API discipline, a unified event fabric, and a governed data ontology across every system it controls. None of that matters if the core — the system of record for deposits, loans, and customer relationships — is a black box that cannot publish events, expose stable APIs, or share its data model transparently.

The API Surface

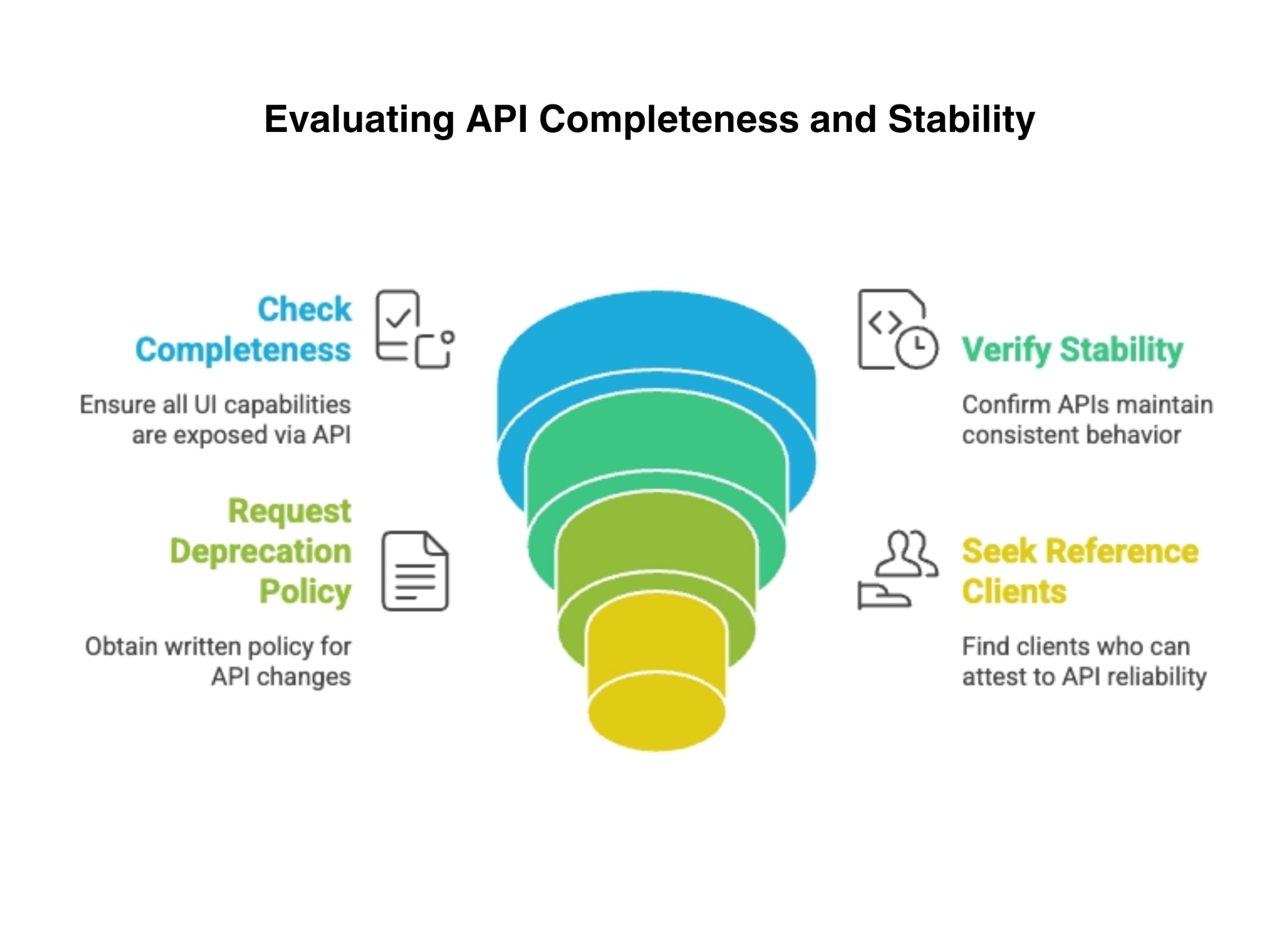

The completeness and stability of the vendor’s published API surface is the first evaluation criterion. Completeness means every capability available in the core’s user interface is also available through APIs. If a banker can perform an action through the core’s screens but an AI agent cannot perform the same action through an API, the architecture is incomplete. The AI layer is limited to the subset of banking operations the vendor chose to expose.

Stability means the APIs do not change their behavior, response schemas, or semantics between versions without a documented deprecation process. AI agents are built and tested against specific API behaviors. Undocumented changes break them silently.
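One way a bank's own team might check stability is to diff the response schema of one API version against the next. The sketch below is illustrative only: the simplified schema format (field name mapped to a type name) and the sample fields are assumptions, not any vendor's actual format.

```python
from typing import Dict, List

# Hypothetical, simplified schema: field name -> type name.
Schema = Dict[str, str]


def breaking_changes(old: Schema, new: Schema) -> List[str]:
    """Describe changes that would break a consumer built against `old`."""
    problems = []
    for field, old_type in old.items():
        if field not in new:
            problems.append(f"removed field: {field}")
        elif new[field] != old_type:
            problems.append(f"type changed: {field} ({old_type} -> {new[field]})")
    # Fields that exist only in `new` are additive and not treated as breaking.
    return problems


# Illustrative schemas for two versions of an account endpoint (assumed).
v1 = {"account_id": "string", "balance": "decimal", "status": "string"}
v2 = {"account_id": "string", "balance": "float", "opened_on": "date"}

issues = breaking_changes(v1, v2)
for issue in issues:
    print(issue)
```

A check like this, run against every vendor release, would surface changes that were not announced through the deprecation process.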

What to ask: Demand the vendor’s published API catalog and count the endpoints against the core’s functional menu. Ask for the deprecation policy in writing. Ask for reference clients who can attest to API reliability over a twelve-month period in production, not in a demo.

What happens when this criterion is not met: The bank discovers during its first AI pilot that the workflow it needs to automate requires screen-scraping or manual workarounds because the vendor never exposed that function through an API. The pilot stalls. The business case erodes.
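The endpoint count can be made concrete with a simple set comparison between the functions on the core's screens and the functions in the API catalog. This is a minimal sketch; the function names are hypothetical placeholders, and a real inventory would come from the vendor's documentation.

```python
from typing import List, Set, Tuple


def api_coverage(functional_menu: Set[str], api_catalog: Set[str]) -> Tuple[float, List[str]]:
    """Return the share of UI functions also exposed via API, plus the UI-only functions."""
    exposed = functional_menu & api_catalog
    missing = sorted(functional_menu - api_catalog)
    ratio = len(exposed) / len(functional_menu) if functional_menu else 0.0
    return ratio, missing


# Hypothetical inventories, for illustration only.
ui_functions = {"open_account", "close_account", "place_hold", "waive_fee", "reverse_payment"}
api_functions = {"open_account", "close_account", "reverse_payment", "get_balance"}

ratio, missing = api_coverage(ui_functions, api_functions)
print(f"coverage: {ratio:.0%}")
print("UI-only functions:", missing)
```

The list of UI-only functions is the more useful output: each entry is an operation an AI agent could not perform without a workaround.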

A structured approach to assessing API readiness, ensuring completeness, stability, governance and real-world reliability before core banking integration.

Real-Time Event Publishing

The ability to subscribe to real-time events from the core is the second criterion. As established in the earlier article on event-driven architecture, AI systems reason over sequences of events. A core system that only provides data through batch extracts or scheduled reports limits AI to reasoning on snapshots — yesterday’s data informing today’s decisions.

The vendor must demonstrate event publishing in production, not in a demo environment. Events must be business-meaningful — customer opened an account, payment cleared, loan entered delinquency — not database change logs. And the event schema must be documented, stable, and governed by the same versioning discipline that applies to APIs.
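The difference between a database change log and a business-meaningful event can be sketched as follows. The table name, event type, and version-compatibility rule (consumers accept any minor version within a supported major version) are assumptions chosen for illustration, not a vendor's actual design.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# A raw change-log row (hypothetical table and codes): it says which column
# changed, but not what happened in business terms.
change_log_row = {"table": "LN_MSTR", "op": "UPDATE", "col": "STAT_CD", "old": "03", "new": "07"}


@dataclass(frozen=True)
class BusinessEvent:
    """A business-meaningful event with an explicit, versioned schema."""
    event_type: str
    schema_version: str  # "major.minor", assumed convention
    occurred_at: datetime
    payload: dict


# Major schema versions this hypothetical consumer was built against.
SUPPORTED_MAJOR = {"loan.entered_delinquency": 1}


def can_consume(event: BusinessEvent) -> bool:
    """Accept an event only if its type is known and its major version matches."""
    major = int(event.schema_version.split(".")[0])
    return SUPPORTED_MAJOR.get(event.event_type) == major


event = BusinessEvent(
    event_type="loan.entered_delinquency",
    schema_version="1.2",
    occurred_at=datetime(2024, 1, 15, 9, 30, tzinfo=timezone.utc),
    payload={"loan_id": "L-1001", "days_past_due": 30},
)
print(can_consume(event))
```

With a versioned envelope like this, a downstream consumer can refuse an incompatible schema explicitly instead of misreading it.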

What to ask: Request a list of published event types and ask which ones are available in production today versus on the roadmap. Ask how event schemas are versioned and what happens to downstream consumers when a schema changes.

What happens when this criterion is not met: The bank’s AI fraud detection system cannot react to transactions in real time because the core only provides end-of-day batch files. The bank is detecting fraud on yesterday’s transactions while the money has already moved.

Data Model Transparency

The transparency and flexibility of the core data model is the third criterion. AI models need to be trained on data the bank understands completely. A proprietary data model where field definitions, entity relationships, and business rules are locked inside the vendor’s own documentation, or worse, held only in the vendor’s institutional knowledge, makes AI development dependent on vendor cooperation for every new use case.

We have seen what an opaque data model looks like in practice. The bank’s data science team pulls a customer relationship table to train a churn prediction model. They find twelve columns with cryptic field names, no documentation on which fields are derived versus raw, and a business rule embedded in the application layer that filters inactive accounts before the data ever reaches the extract. The model trains on filtered data without knowing it. The predictions are wrong, and the bank spends weeks debugging a data problem that was actually an architecture problem.
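The effect of a hidden filter is easy to reproduce with made-up numbers. In this sketch the account records are invented for illustration, and the `active` flag stands in for whatever rule the application layer applies before the extract.

```python
# Invented sample: 6 active accounts (1 churned), 4 inactive accounts (3 churned).
accounts = (
    [{"active": True, "churned": False}] * 5
    + [{"active": True, "churned": True}] * 1
    + [{"active": False, "churned": True}] * 3
    + [{"active": False, "churned": False}] * 1
)


def churn_rate(rows):
    """Fraction of rows marked as churned."""
    return sum(r["churned"] for r in rows) / len(rows)


# What the data science team receives after the undocumented filter runs.
extract = [r for r in accounts if r["active"]]

print(f"true churn rate:    {churn_rate(accounts):.1%}")
print(f"extract churn rate: {churn_rate(extract):.1%}")

# A basic reconciliation: compare row counts against the system of record.
print(f"rows dropped by filter: {len(accounts) - len(extract)}")
```

A row-count reconciliation like the last line is only possible when the bank can query the system of record directly, which is exactly what a transparent data model allows.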

What to ask: Can your data team map the vendor’s data model to your enterprise ontology without calling the vendor for interpretation? Can you extend the data model for AI-specific attributes without a vendor enhancement request?

What happens when this criterion is not met: Every new AI use case requires a vendor consulting engagement to interpret the data, adding cost and weeks of delay to every initiative.

The Ecosystem and Upgrade Cadence

The vendor’s AI partnership ecosystem reveals how seriously the vendor takes AI enablement. A vendor with active integrations with major AI platforms, data engineering tools, and model deployment frameworks is building for AI-era banking. A vendor whose partnership page lists only traditional consulting firms and niche banking technology companies has not made the shift.

Upgrade cadence is equally telling. A vendor that requires eighteen-month upgrade cycles cannot support the continuous model deployment that AI demands. AI models evolve in weeks. The platform they operate on must be able to evolve on a comparable cadence — not necessarily weekly, but certainly quarterly.

What to ask: How frequently do production upgrades occur? What does the upgrade process require from the bank? Do upgrades affect API compatibility or event schema stability?

What happens when this criterion is not met: The bank develops an AI model that requires a platform capability the vendor has roadmapped for the next major release, fourteen months away. The model sits on the shelf.

When to Apply These Criteria

Banks currently in contract negotiations or approaching renewal cycles should treat AI architectural capability as a primary evaluation criterion, not a secondary one. The traditional selection criteria — functional coverage, volume capacity, regulatory compliance — remain necessary. They are no longer sufficient.

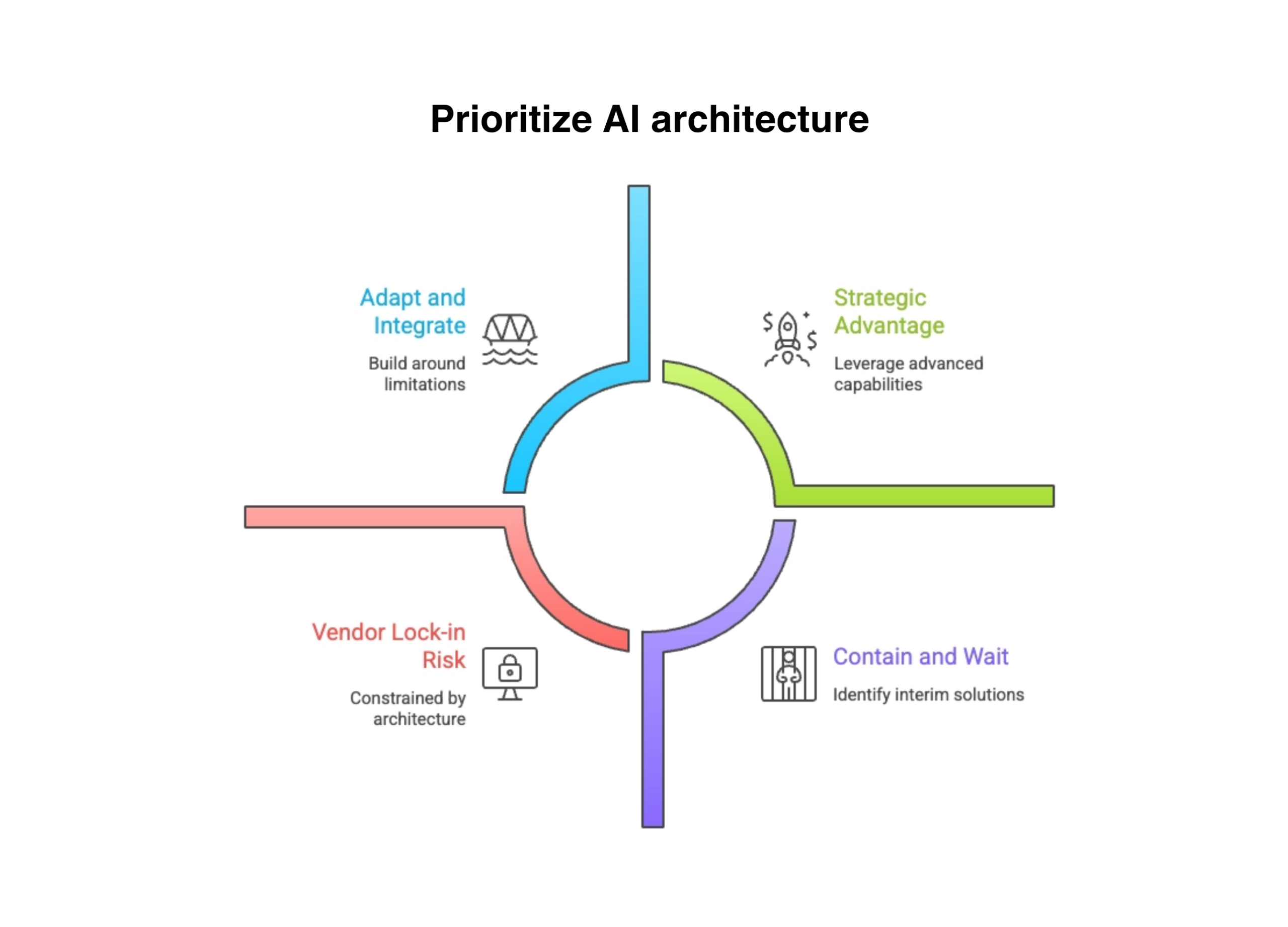

But what about banks locked into long-term contracts with years remaining? This is not a hypothetical — it describes a significant share of the community and mid-tier banking market. For these institutions, the strategy is not resignation. It is architectural containment: identifying which AI capabilities can be built around the core’s limitations, which require middleware to bridge the gaps, and which must wait for the next contract cycle. The worst response is to assume nothing can be done until the contract expires. The second worst is to pretend the limitations do not exist.
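The containment triage described above can be sketched as a comparison between what each AI use case needs and what the core and middleware can supply. The capability names and use cases here are hypothetical, and a real assessment would weigh cost and risk as well.

```python
from enum import Enum
from typing import Set


class Path(Enum):
    BUILD_NOW = "build around the core"
    MIDDLEWARE = "bridge with middleware"
    WAIT = "wait for the next contract cycle"


def triage(needs: Set[str], core_provides: Set[str], middleware_can_bridge: Set[str]) -> Path:
    """Classify a use case by the capability gaps the core leaves open."""
    gaps = needs - core_provides
    if not gaps:
        return Path.BUILD_NOW
    if gaps <= middleware_can_bridge:  # every gap can be bridged
        return Path.MIDDLEWARE
    return Path.WAIT


# Hypothetical capabilities of a locked-in core and its middleware layer.
core = {"batch_extract", "read_api"}
middleware = {"near_real_time_events"}

use_cases = {
    "churn model": {"batch_extract"},
    "fraud alerts": {"read_api", "near_real_time_events"},
    "autonomous servicing": {"write_api"},
}

results = {name: triage(needs, core, middleware) for name, needs in use_cases.items()}
for name, path in results.items():
    print(f"{name}: {path.value}")
```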

A bank that selects a vendor with excellent functional coverage but a closed architecture is making a decision that will constrain its AI capability for the life of the contract — typically seven to ten years. In seven years, AI-enabled competitors will have compounded their advantages through continuous intelligent operation. The bank locked into an AI-incompatible core will still be running batch analytics on yesterday’s data.

The vendor selection decision is now an AI strategy decision. Banks that treat it as anything less are ceding their AI future to a vendor that may not share their ambition.

Not all AI decisions are equal. This framework helps balance innovation, risk, and timing when prioritizing AI architecture in core banking.

What Comes Next

Evaluating vendor architecture requires the ability to distinguish genuine AI capability from marketing claims. The next article addresses AI-washing — the practice of marketing AI readiness that does not exist at the architectural level — and provides bank executives with the diagnostic questions that separate vendors who have built the capability from those who have merely announced it.

Where to Start

If your bank is evaluating core vendors, renewing a contract, or trying to build AI capability on top of a platform you did not choose with AI in mind, the vendor’s architecture is either enabling your strategy or constraining it. The question is whether you know which one.

The CSP Transformation Readiness Scorecard includes a vendor architecture assessment that evaluates API completeness, event publishing maturity, data model transparency, and ecosystem alignment against the criteria outlined in this article. It gives leadership teams a structured view of where their vendor relationship supports AI ambitions and where it creates constraints — in under an hour, with clear prioritization of where to act first.

Before your next core banking RFP, apply the four criteria in this article to every vendor on your shortlist. The answers will separate the AI-ready platforms from the ones that will require you to build around them for the next decade.

If your institution is ready to move from awareness to action, visit coresystempartners.com/contact to start the conversation.

Next article in the series: The Hidden Costs of AI-Washed Platforms

Return to: Why AI makes modern core banking architecture non-negotiable
