Everything discussed in this series so far — modularization, APIs, events, governance, vendor strategy — requires execution. And execution requires an operating model that can sustain it. This is where most banks fail. Not in strategy. Not in technology selection. In the organizational machinery that translates architectural decisions into operational reality.

Architecture governance in most banks is episodic. It happens when something breaks. It happens when a major project starts and someone asks whether the proposed design is consistent with the enterprise architecture. It happens when an examiner asks a question that the technology team cannot answer without convening a meeting. Between those episodes, architecture governance is dormant.

AI does not tolerate episodic governance. AI requires architecture to be a continuous capability with standing authority, operational cadence, and real decision rights. Banks that cannot make this shift will find that their AI initiatives stall not because the technology is wrong but because the organization cannot govern it fast enough.

The Architecture Review Board with Teeth

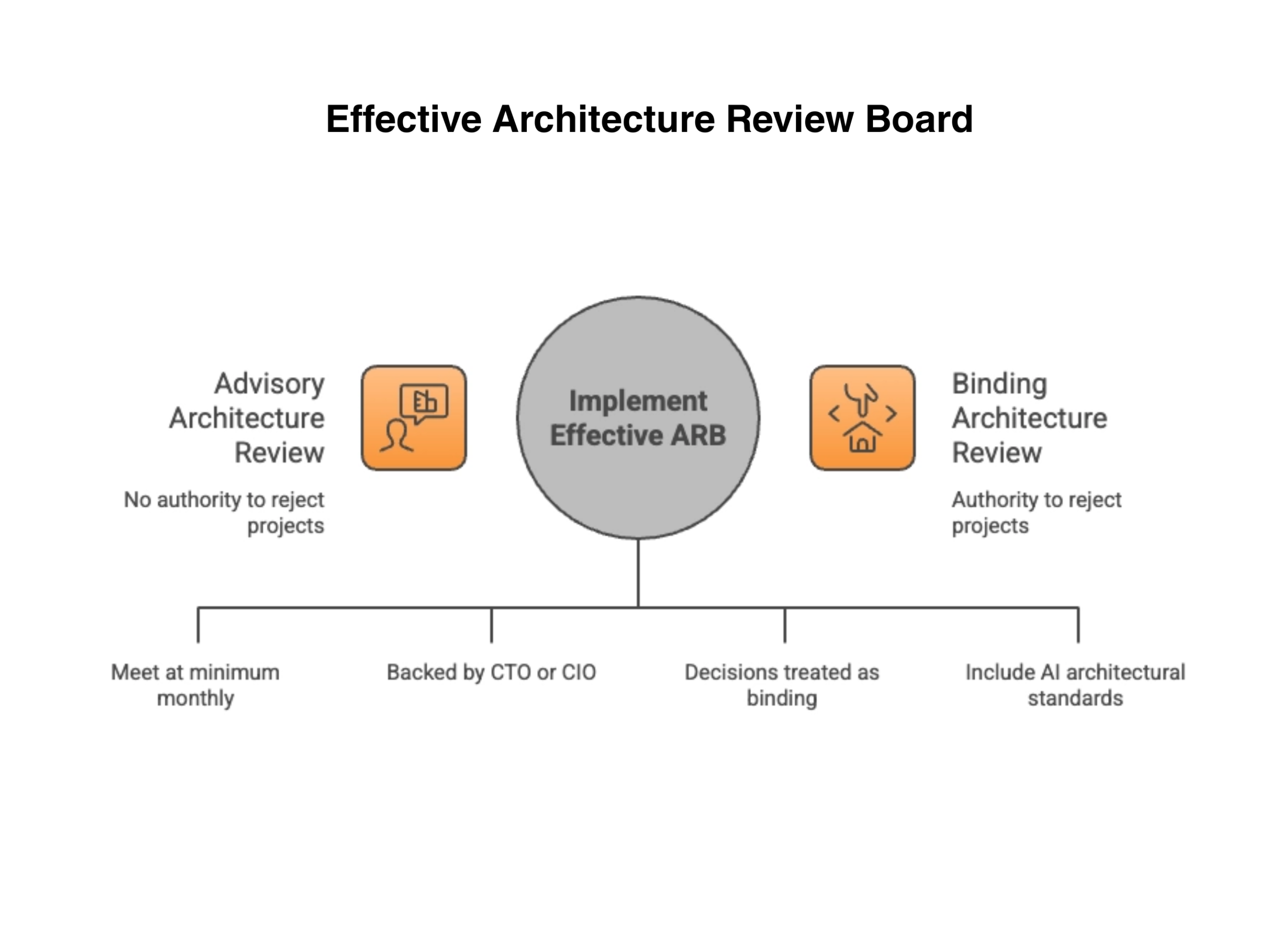

Most banks have an Architecture Review Board. Most of those boards are advisory. They review proposed designs, offer recommendations, and publish guidelines. They do not have the authority to reject projects that violate architectural principles. The result is predictable: project teams acknowledge the guidance, agree in principle, and then build whatever the project timeline demands. Architectural debt accumulates not because it is unrecognized but because the governance body cannot prevent it.

An effective Architecture Review Board meets on a regular cadence — at minimum monthly, and biweekly for banks with active AI programs. It has authority to reject projects that violate architectural principles. Not recommend against. Reject. This authority must be backed by executive sponsorship, typically the CTO or CIO, and the board’s decisions must be treated as binding rather than advisory.

The board’s scope must include AI-specific architectural standards: does this initiative adopt the enterprise data ontology? Does it publish events to the shared event fabric? Does it expose APIs that meet the bank’s AI-readiness criteria? Does it include governance infrastructure — audit logging, lineage tracking, model versioning? If the answer to any of these is no, the project does not proceed until it does.
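The four questions above lend themselves to a simple pass/fail gate. The sketch below is one hypothetical way to encode them; the `InitiativeReview` type, its field names, and the sample initiative are assumptions made for illustration, not a description of any particular bank's tooling.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class InitiativeReview:
    """Answers to the four review-board questions for one initiative (hypothetical type)."""
    name: str
    adopts_enterprise_ontology: bool
    publishes_to_event_fabric: bool
    apis_meet_ai_readiness: bool
    has_governance_infrastructure: bool  # audit logging, lineage tracking, model versioning


def unmet_standards(review: InitiativeReview) -> List[str]:
    """List the standards the initiative does not yet meet, in question order."""
    checks = {
        "enterprise data ontology": review.adopts_enterprise_ontology,
        "shared event fabric": review.publishes_to_event_fabric,
        "AI-readiness criteria for APIs": review.apis_meet_ai_readiness,
        "governance infrastructure": review.has_governance_infrastructure,
    }
    return [standard for standard, met in checks.items() if not met]


def may_proceed(review: InitiativeReview) -> bool:
    """A single 'no' blocks the project until it is resolved."""
    return not unmet_standards(review)


# Illustrative initiative; the name and answers are made up.
review = InitiativeReview(
    name="loan-pricing-service",
    adopts_enterprise_ontology=True,
    publishes_to_event_fabric=False,
    apis_meet_ai_readiness=True,
    has_governance_infrastructure=True,
)
print(may_proceed(review))      # False
print(unmet_standards(review))  # ['shared event fabric']
```

Returning the list of unmet standards, rather than only a yes or no, gives the project team a concrete remediation list to bring back to the board.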

The most common objection we hear from bank executives is that they cannot afford a standing architecture function. The answer is that they cannot afford not to have one. Every project that bypasses architectural standards because the governance body was dormant creates debt that costs three to five times more to remediate than it would have cost to build correctly. A standing architecture function is not a cost center. It is the cheapest form of rework prevention the bank will ever invest in.

Published Architectural Standards

The Architecture Review Board can only enforce standards that exist. Most banks have architectural principles — high-level statements about modularity, reuse, and scalability. Few banks have architectural standards — specific, testable requirements that every new initiative must demonstrate compliance with.

The difference matters. A principle says the bank values event-driven architecture. A standard says every new service must publish domain events to the enterprise event fabric using the approved schema registry, with events conforming to the enterprise ontology, within thirty days of production deployment. Principles are aspirational. Standards are enforceable.
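What makes a standard enforceable is that it can be expressed as a test. As a minimal sketch, the thirty-day clause of the example standard could be checked as follows. The function name and its inputs are hypothetical, and the schema-registry and ontology clauses of the standard would need their own checks.

```python
from datetime import date, timedelta
from typing import Optional

# Grace period taken from the example standard in the text.
EVENT_PUBLISHING_GRACE = timedelta(days=30)


def meets_event_publishing_deadline(
    deployed_on: date,
    first_event_on: Optional[date],
    as_of: date,
) -> bool:
    """Check the thirty-day clause for one service (hypothetical helper).

    deployed_on:    production deployment date
    first_event_on: date the service first published a domain event, or None
    as_of:          date on which compliance is being evaluated
    """
    deadline = deployed_on + EVENT_PUBLISHING_GRACE
    if first_event_on is not None:
        # Events exist: compliant only if they started on or before the deadline.
        return first_event_on <= deadline
    # No events yet: compliant only while the grace period is still open.
    return as_of <= deadline


# Illustrative dates only.
deployed = date(2024, 1, 10)
print(meets_event_publishing_deadline(deployed, date(2024, 2, 1), date(2024, 3, 1)))  # True
print(meets_event_publishing_deadline(deployed, None, date(2024, 3, 1)))              # False
```

A principle such as "the bank values event-driven architecture" offers nothing comparable to test against, which is why it cannot be enforced.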

Banks that invest the effort to translate principles into standards create a framework that project teams can comply with without consulting the architecture board on every decision. The standards become self-service guardrails rather than approval bottlenecks. The board’s role shifts from reviewing every project to auditing compliance and handling exceptions.

Technical Debt as a First-Class Concern

Every bank carries technical debt. Most banks manage it reactively — fixing things when they break or when the cost of not fixing them becomes unbearable. AI makes technical debt more expensive because every piece of debt is a piece of architecture that AI cannot operate on. A legacy service without APIs is a domain the AI cannot reach. A data store without event publishing is a source of intelligence the AI cannot observe.

A continuous technical debt register, reviewed and prioritized alongside the product backlog, transforms debt from a background concern into a visible investment decision. When the architecture team can show that retiring a specific piece of technical debt will unlock a specific AI capability — automated fraud detection for the lending domain, for example — the debt remediation earns its place in the roadmap on business value, not just technical virtue.

The first meeting of a technical debt review does not need to be comprehensive. Start with the five legacy systems that the AI team has already identified as blockers. Document the debt, estimate the remediation cost, and map each item to the AI capability it unlocks. That single exercise creates the business case for every technical debt conversation that follows.
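A first-pass register can be very small. The sketch below assumes a hypothetical `DebtItem` record holding the three things the exercise asks for (the debt, its estimated remediation cost, and the AI capability it unlocks) and totals the cost of unlocking each capability. The systems, costs, and the second capability are made-up illustrations.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class DebtItem:
    """One entry in the technical debt register (hypothetical structure)."""
    system: str
    description: str
    remediation_cost: float  # estimate, in any consistent unit
    unlocks_capability: str  # the AI capability this remediation enables


def cost_to_unlock(register: List[DebtItem]) -> Dict[str, float]:
    """Sum the estimated remediation cost per AI capability unlocked."""
    totals: Dict[str, float] = defaultdict(float)
    for item in register:
        totals[item.unlocks_capability] += item.remediation_cost
    return dict(totals)


# Illustrative entries; names and figures are invented for the example.
register = [
    DebtItem("loan-origination", "no API layer", 400.0,
             "automated fraud detection for lending"),
    DebtItem("loan-servicing-db", "no event publishing", 250.0,
             "automated fraud detection for lending"),
    DebtItem("card-ledger", "batch-only interface", 300.0,
             "real-time spend insights"),
]

for capability, cost in cost_to_unlock(register).items():
    print(f"{capability}: {cost}")
```

Grouping by capability rather than by system is a deliberate choice in this sketch: it frames each line of the register as the price of a business outcome, which is the form a roadmap conversation needs.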

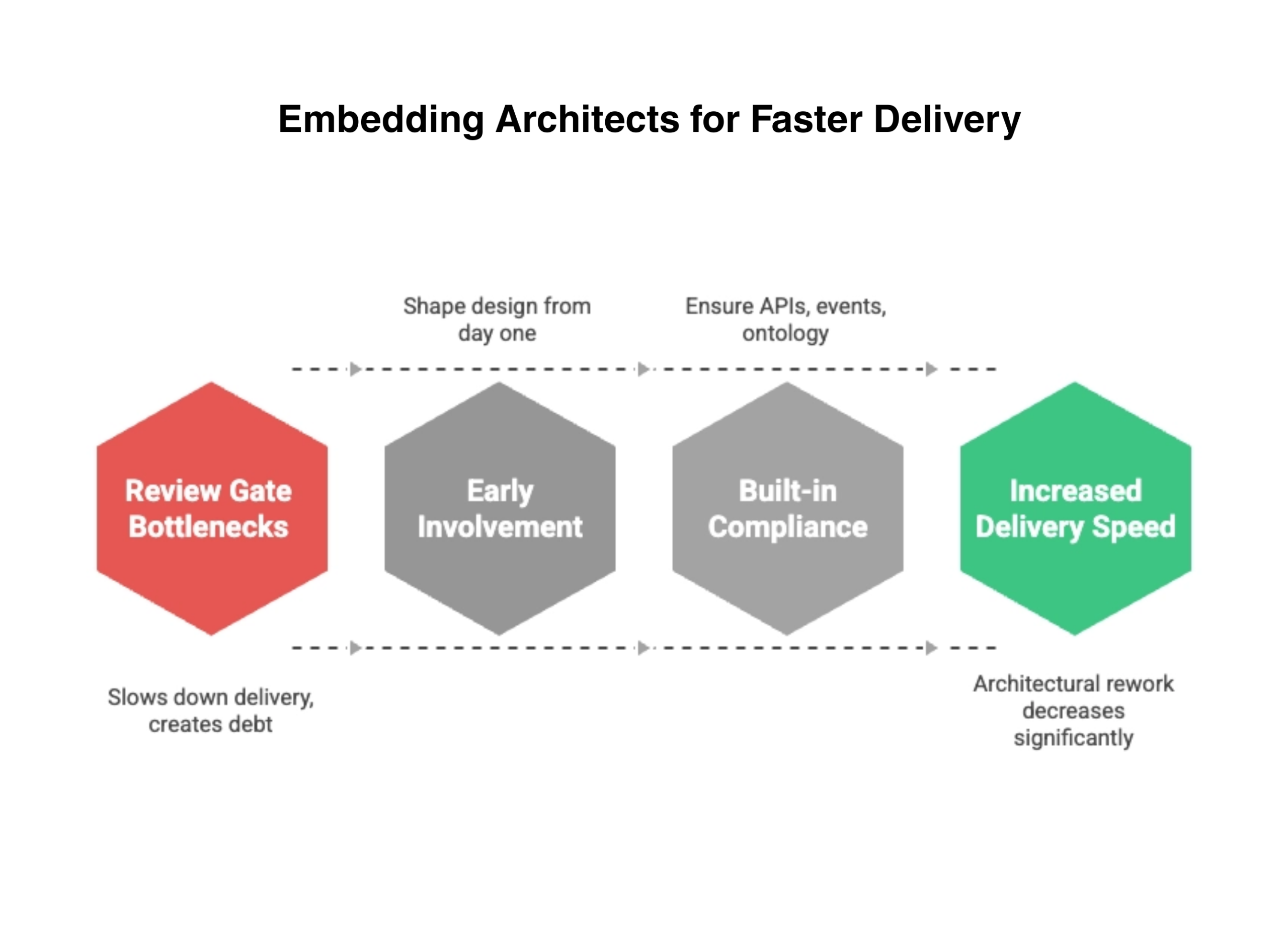

Embedded Architecture, Not Review Gates

The operating model fails if the architecture function sits outside delivery teams as a review gate that projects must pass through. Review gates create bottlenecks. Bottlenecks create pressure to bypass the gate. And bypasses create the architectural debt the gate was designed to prevent.

The alternative is embedding architects in delivery teams. An architect who is part of the project team from day one shapes the design as it emerges rather than reviewing it after the fact. Architectural compliance is built in rather than inspected in. The architect ensures that APIs are designed correctly, that events are published, that the ontology is adopted, and that governance infrastructure is included — all as the system is being built.

In the engagements where we have helped banks establish this model, we have seen a consistent pattern: delivery speed increases because architectural rework decreases. Projects that would have failed the architecture review and returned for redesign instead get it right the first time. The architecture function stops being a brake and starts being an accelerator.

The Speed Differential

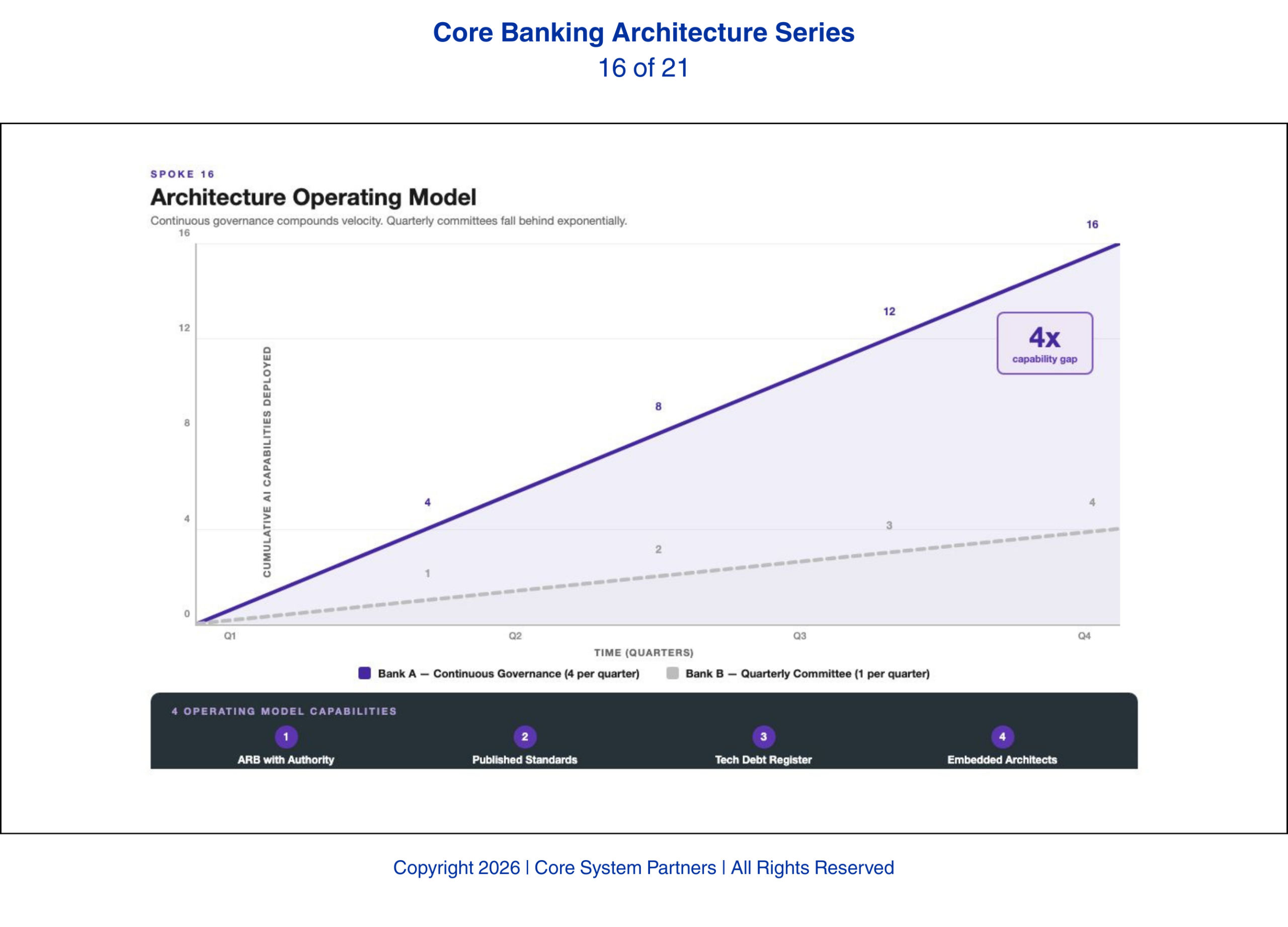

We have watched this play out across institutions of every size. The banks that have built this operating model — standing review boards with authority, published standards, continuous debt management, embedded architects — are deploying AI features in weeks. The banks still running architecture as a committee that meets quarterly are taking months just to get approval to start.

That speed differential compounds. A bank that deploys four AI capabilities per quarter while a competitor deploys one will have sixteen capabilities in production after a year against the competitor’s four, a twelve-capability lead. The capabilities build on each other: each new deployment extends the AI surface, generates training data, and reveals the next opportunity. The banks that invest in this operating model now are building an advantage that widens with every quarter.

Speed is not just a competitive advantage. It is a compounding one.

What Comes Next

The operating model provides the organizational structure. But structure requires people — and the talent required to build and operate an AI-ready bank is genuinely different from what most banks have today. The next article examines the new banking talent stack: the roles that did not exist five years ago and the capability-building strategies that banks must pursue because the hiring market alone cannot supply what they need.

Where to Start

If your bank’s architecture governance is episodic — convened when something breaks or when a project happens to request a review — the operating model gap is already affecting your AI capability. The question is whether you close it deliberately or discover it through stalled initiatives.

The CSP Transformation Readiness Scorecard evaluates your architecture operating model against the four capabilities outlined in this article: ARB authority, published standards, technical debt visibility, and embedded architecture practice. In under an hour, it identifies where the operating model supports continuous AI delivery and where episodic governance is creating bottlenecks, and it prioritizes where to act first.

The speed differential described in this article — four AI capabilities per quarter versus one — is not a technology gap. It is an operating model gap. And it is recoverable.

If your institution is ready to move from awareness to action, visit coresystempartners.com/contact to start the conversation.

Next article in the series: The New Banking Talent Stack: Architects, Data Engineers, Model Ops, and AI Risk
